Welcome to the 2024 AI Index Report

Welcome to the seventh edition of the AI Index report. The 2024 Index is our most comprehensive to date and arrives at an important moment when AI’s influence on society has never been more pronounced. This year, we have broadened our scope to more extensively cover essential trends such as technical advancements in AI, public perceptions of the technology, and the geopolitical dynamics surrounding its development. Featuring more original data than ever before, this edition introduces new estimates on AI training costs, detailed analyses of the responsible AI landscape, and an entirely new chapter dedicated to AI’s impact on science and medicine.

The AI Index report tracks, collates, distills, and visualizes data related to artificial intelligence (AI). Our mission is to provide unbiased, rigorously vetted, broadly sourced data in order for policymakers, researchers, executives, journalists, and the general public to develop a more thorough and nuanced understanding of the complex field of AI.

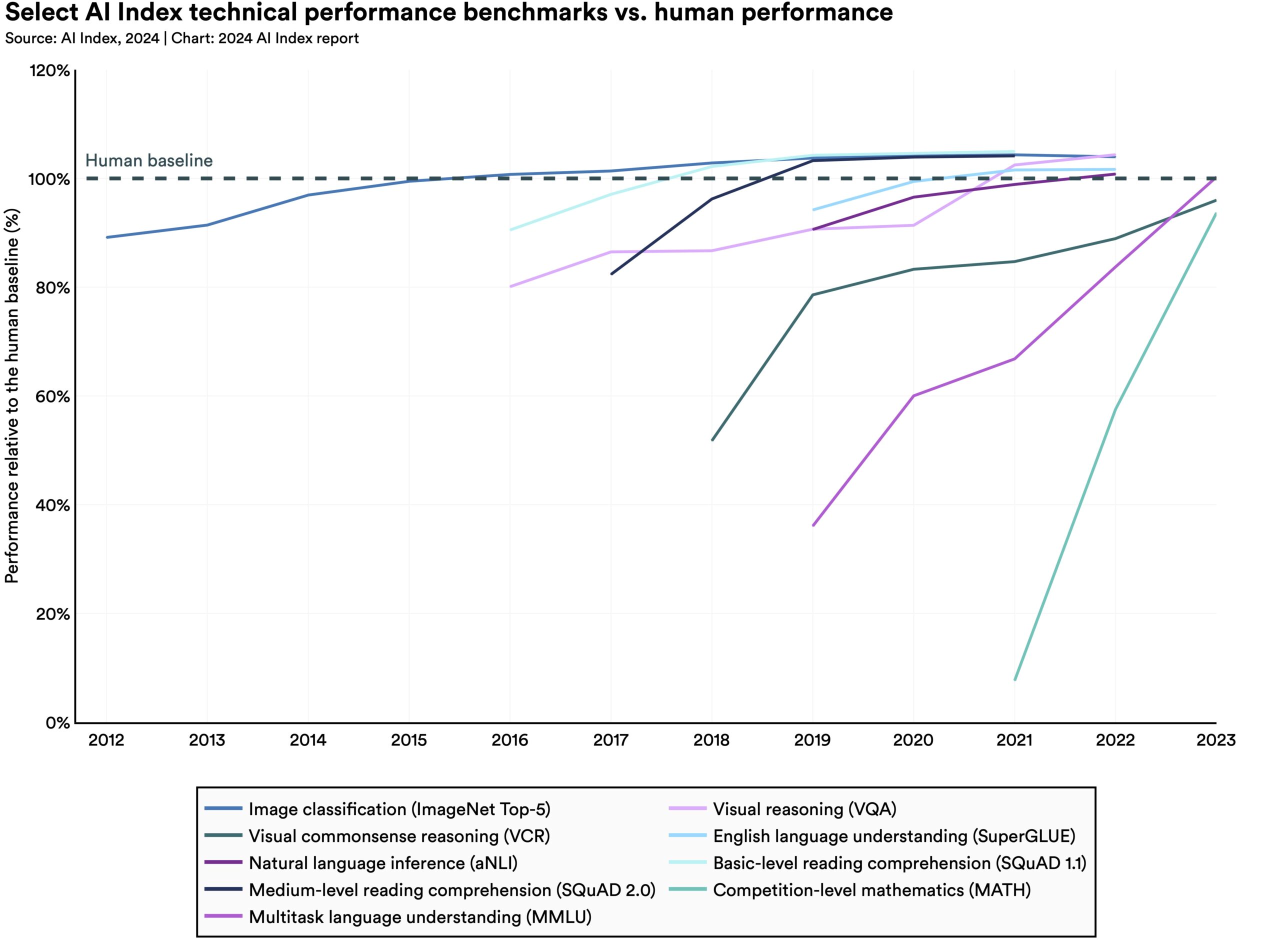

AI has surpassed human performance on several benchmarks, including some in image classification, visual reasoning, and English understanding. Yet it trails behind on more complex tasks like competition-level mathematics, visual commonsense reasoning, and planning.

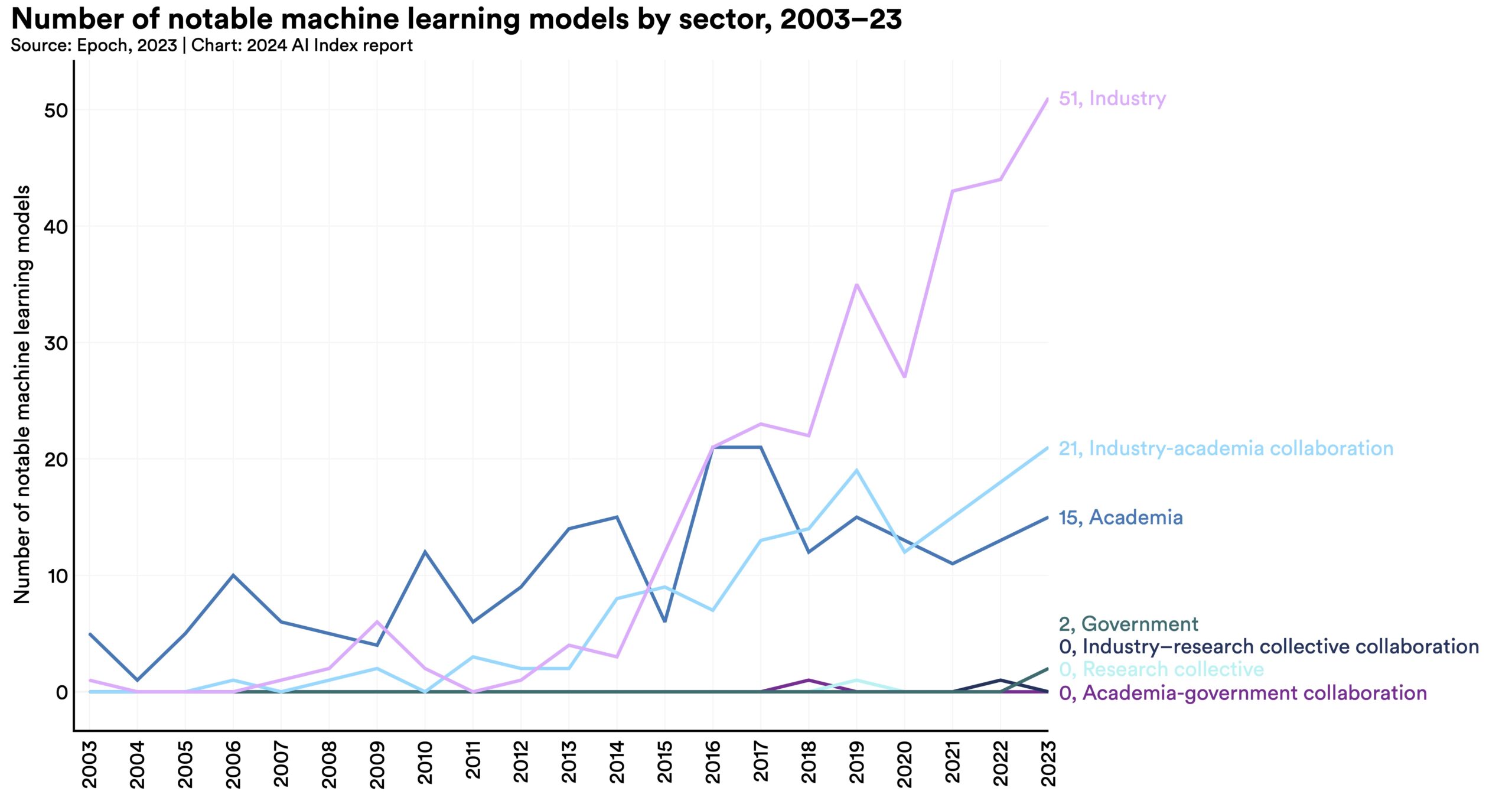

In 2023, industry produced 51 notable machine learning models, while academia contributed only 15. There were also 21 notable models resulting from industry-academia collaborations in 2023, a new high.

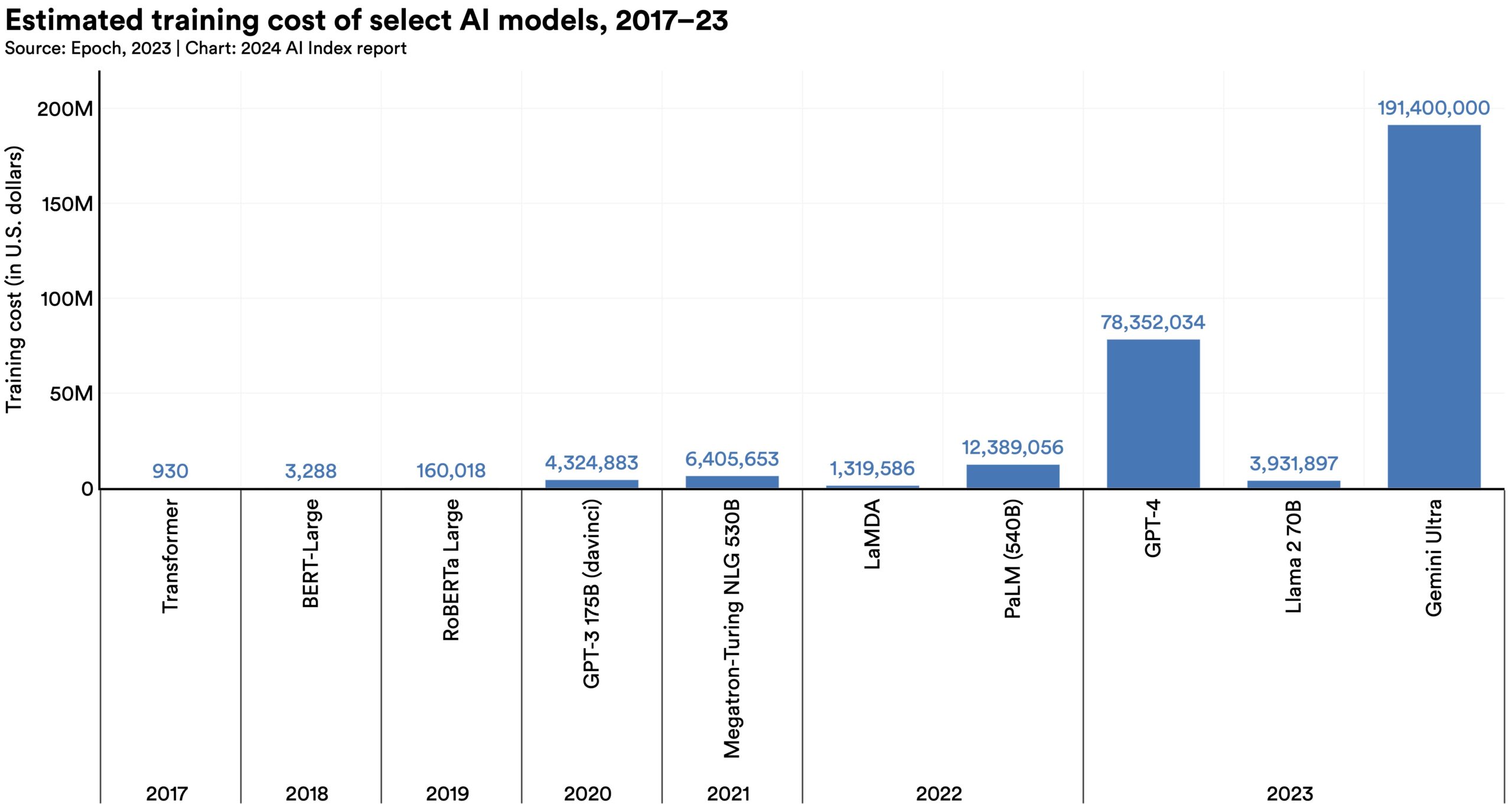

According to AI Index estimates, the training costs of state-of-the-art AI models have reached unprecedented levels. For example, OpenAI’s GPT-4 used an estimated $78 million worth of compute to train, while Google’s Gemini Ultra cost $191 million for compute.

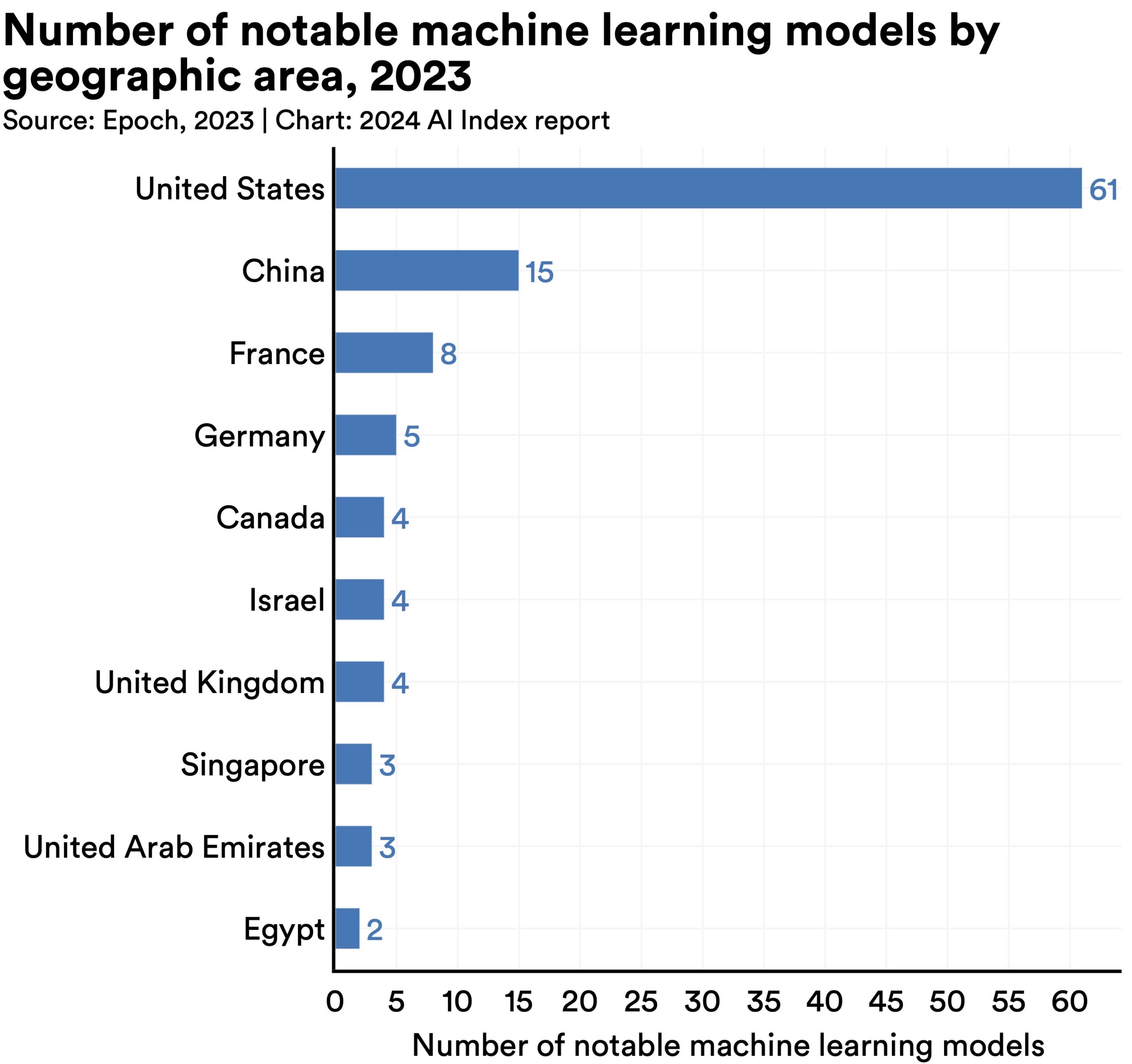

In 2023, 61 notable AI models originated from U.S.-based institutions, far outpacing the European Union’s 21 and China’s 15.

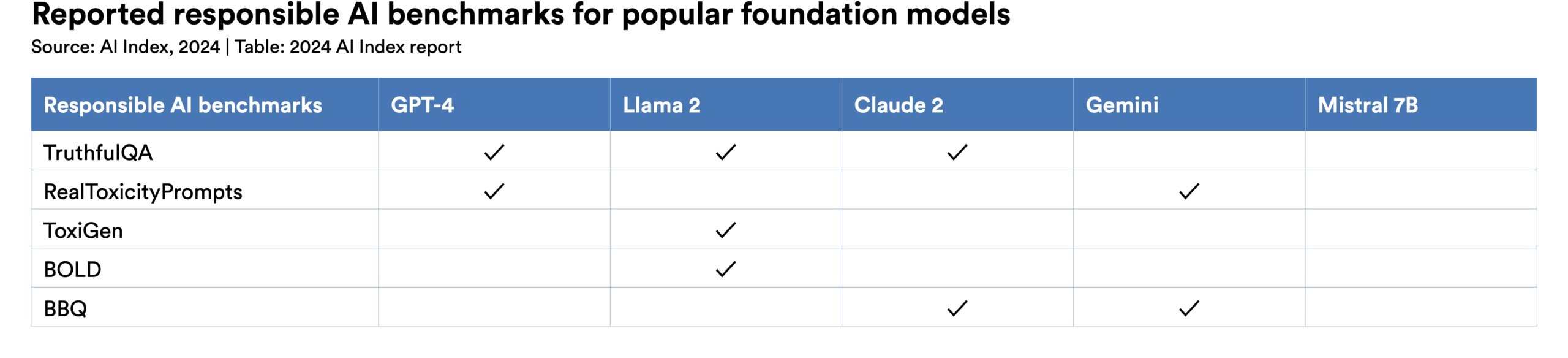

New research from the AI Index reveals a significant lack of standardization in responsible AI reporting. Leading developers, including OpenAI, Google, and Anthropic, primarily test their models against different responsible AI benchmarks. This practice complicates efforts to systematically compare the risks and limitations of top AI models.

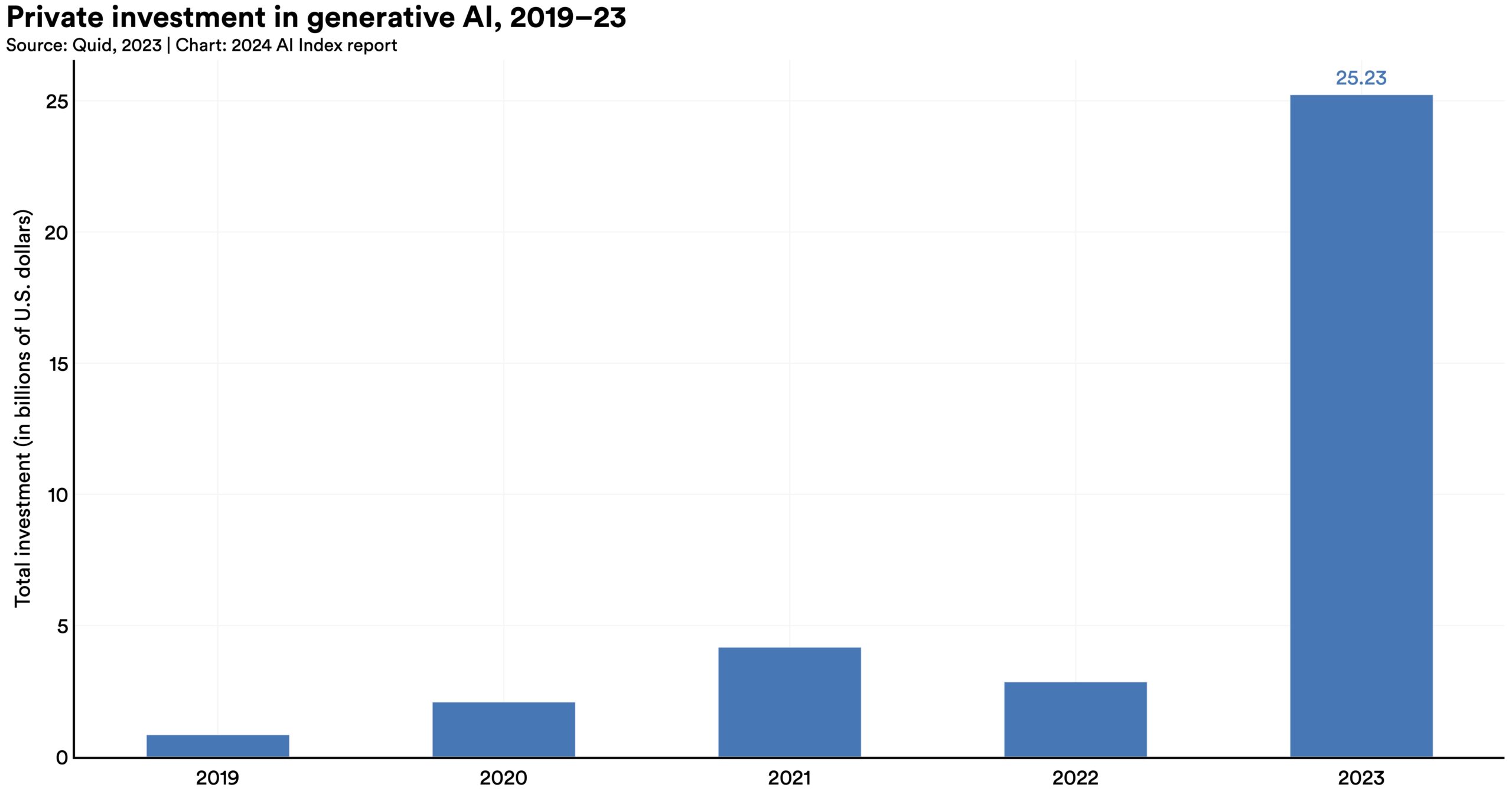

Despite a decline in overall AI private investment last year, funding for generative AI surged, nearly octupling from 2022 to reach $25.2 billion. Major players in the generative AI space, including OpenAI, Anthropic, Hugging Face, and Inflection, reported substantial fundraising rounds.

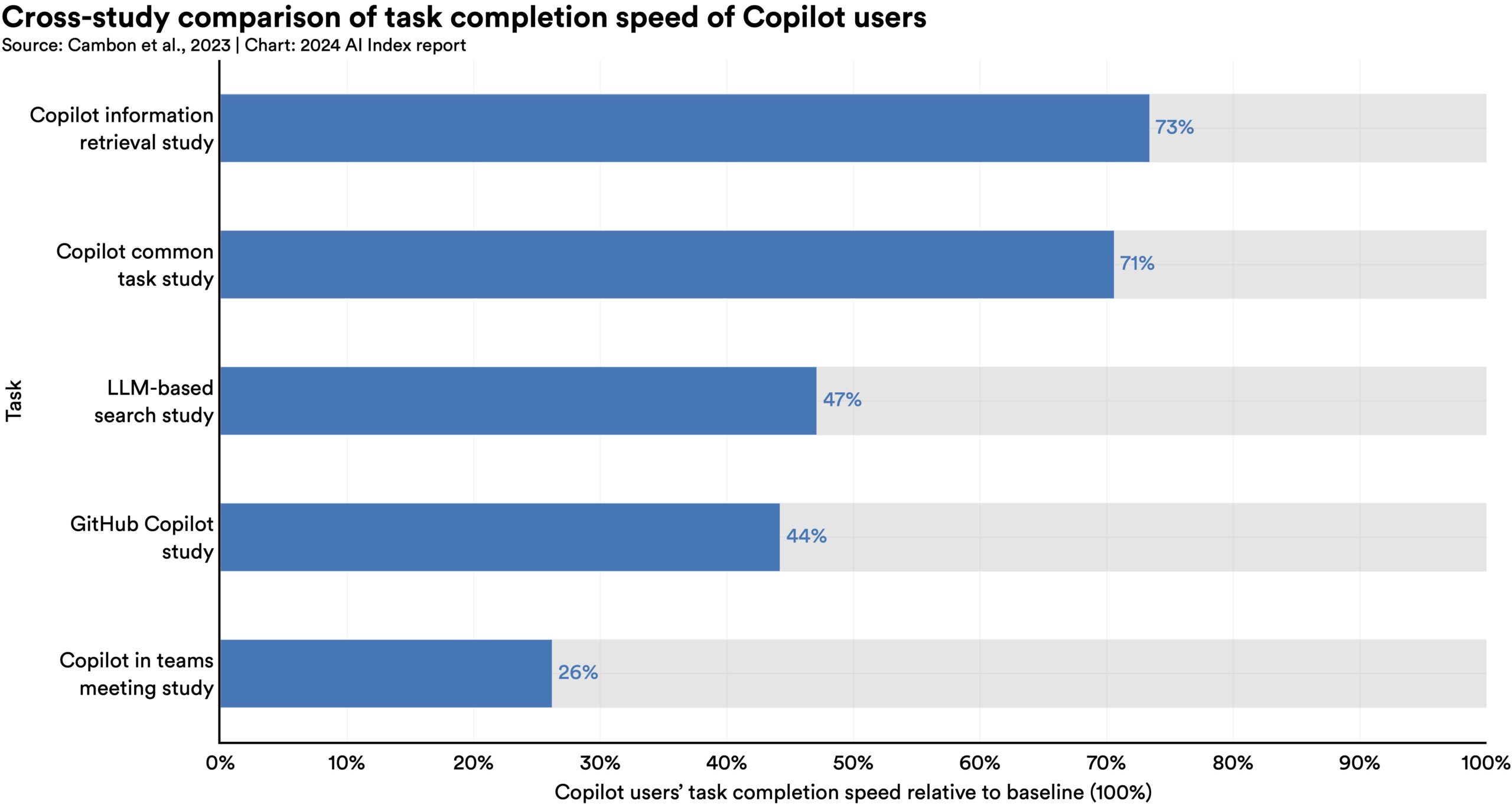

In 2023, several studies assessed AI’s impact on labor, suggesting that AI enables workers to complete tasks more quickly and to improve the quality of their output. These studies also demonstrated AI’s potential to bridge the skill gap between low- and high-skilled workers. Still other studies caution that using AI without proper oversight can lead to diminished performance.

In 2022, AI began to advance scientific discovery. 2023, however, saw the launch of even more significant science-related AI applications—from AlphaDev, which makes algorithmic sorting more efficient, to GNoME, which facilitates the process of materials discovery.

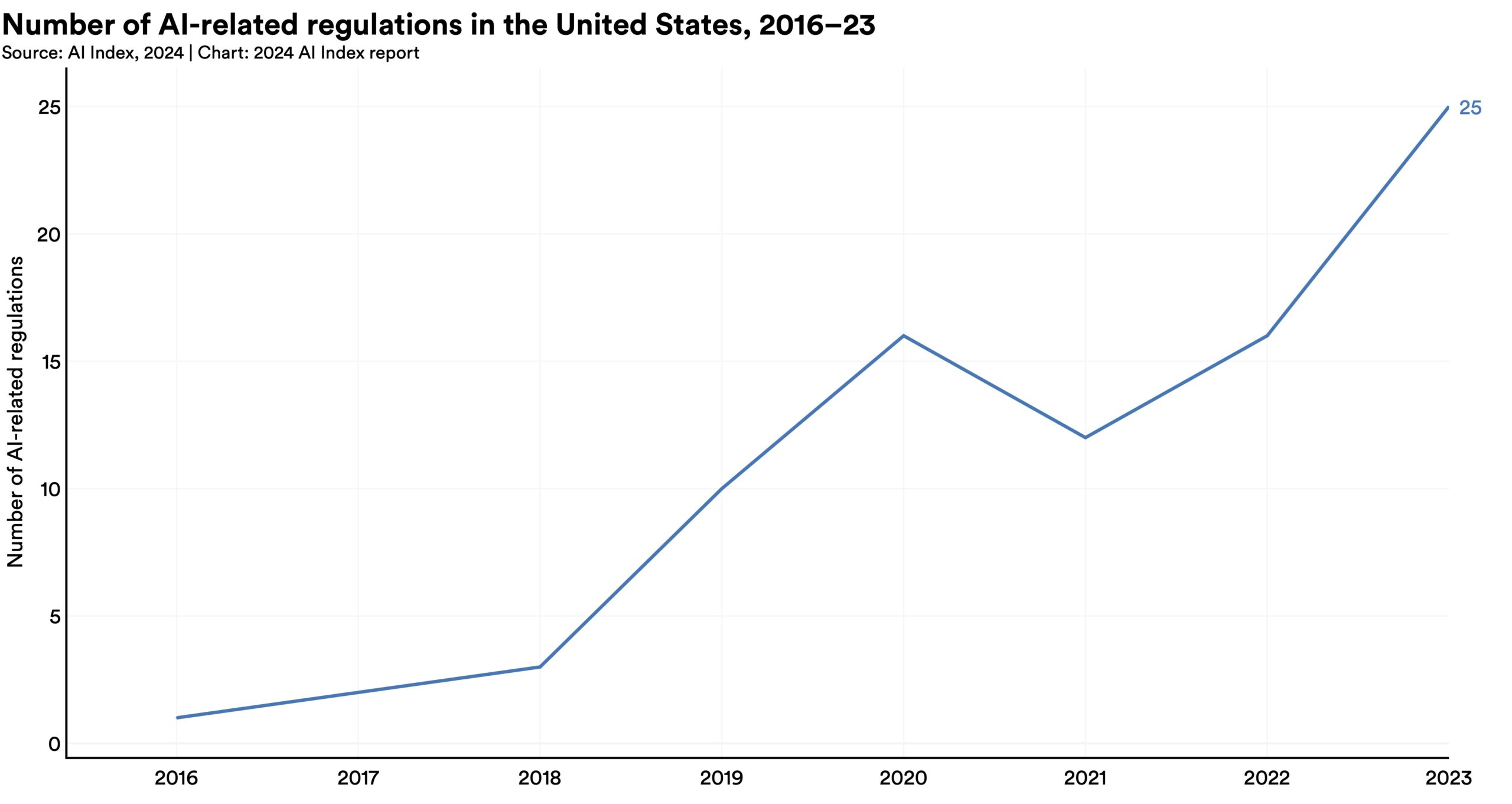

The number of AI-related regulations in the U.S. has risen significantly in the past year and over the last five years. In 2023, there were 25 AI-related regulations, up from just one in 2016. Last year alone, the total number of AI-related regulations grew by 56.3%.

A survey from Ipsos shows that, over the last year, the proportion of those who think AI will dramatically affect their lives in the next three to five years has increased from 60% to 66%. Moreover, 52% express nervousness toward AI products and services, marking a 13 percentage point rise from 2022. In America, Pew data suggests that 52% of Americans report feeling more concerned than excited about AI, rising from 38% in 2022.
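The survey figures above are reported as percentage-point changes, which are easy to confuse with relative percent changes. The following is a minimal sketch of the difference, using only the Ipsos (60% to 66%) and Pew (38% to 52%) figures quoted above. The function names are hypothetical and are not part of the AI Index methodology.

```python
def percentage_point_change(old_pct: float, new_pct: float) -> float:
    """Absolute difference between two percentages, in percentage points."""
    return new_pct - old_pct


def relative_change(old_pct: float, new_pct: float) -> float:
    """Change relative to the starting value, expressed as a percent."""
    return (new_pct - old_pct) / old_pct * 100.0


# Figures quoted in the paragraph above (illustrative use only).
ipsos_pp = percentage_point_change(60.0, 66.0)
ipsos_rel = relative_change(60.0, 66.0)
pew_pp = percentage_point_change(38.0, 52.0)
pew_rel = relative_change(38.0, 52.0)

print(f"Ipsos: {ipsos_pp:.1f} percentage points, {ipsos_rel:.1f}% relative")
print(f"Pew:   {pew_pp:.1f} percentage points, {pew_rel:.1f}% relative")
```

A 14 percentage point rise from a base of 38% is a relative increase of roughly 37%, which is why the two measures should not be used interchangeably.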

This chapter studies trends in AI research and development. It begins by examining trends in AI publications and patents, and then examines trends in notable AI systems and foundation models. It concludes by analyzing AI conference attendance and open-source AI software projects.

In 2023, industry produced 51 notable machine learning models, while academia contributed only 15. There were also 21 notable models resulting from industry-academia collaborations in 2023, a new high.

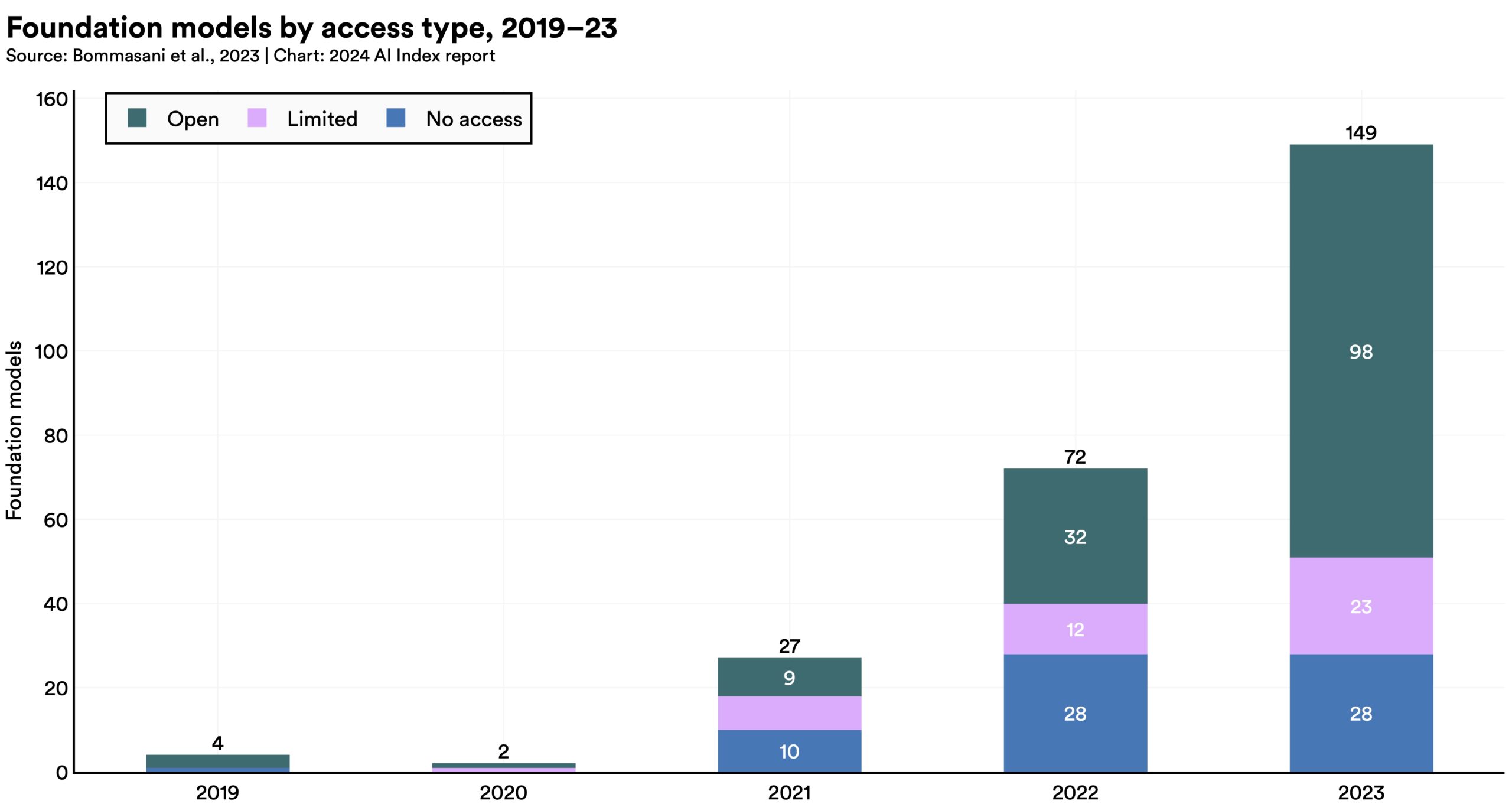

In 2023, a total of 149 foundation models were released, more than double the amount released in 2022. Of these newly released models, 65.7% were open-source, compared to only 44.4% in 2022 and 33.3% in 2021.

According to AI Index estimates, the training costs of state-of-the-art AI models have reached unprecedented levels. For example, OpenAI’s GPT-4 used an estimated $78 million worth of compute to train, while Google’s Gemini Ultra cost $191 million for compute.

In 2023, 61 notable AI models originated from U.S.-based institutions, far outpacing the European Union’s 21 and China’s 15.

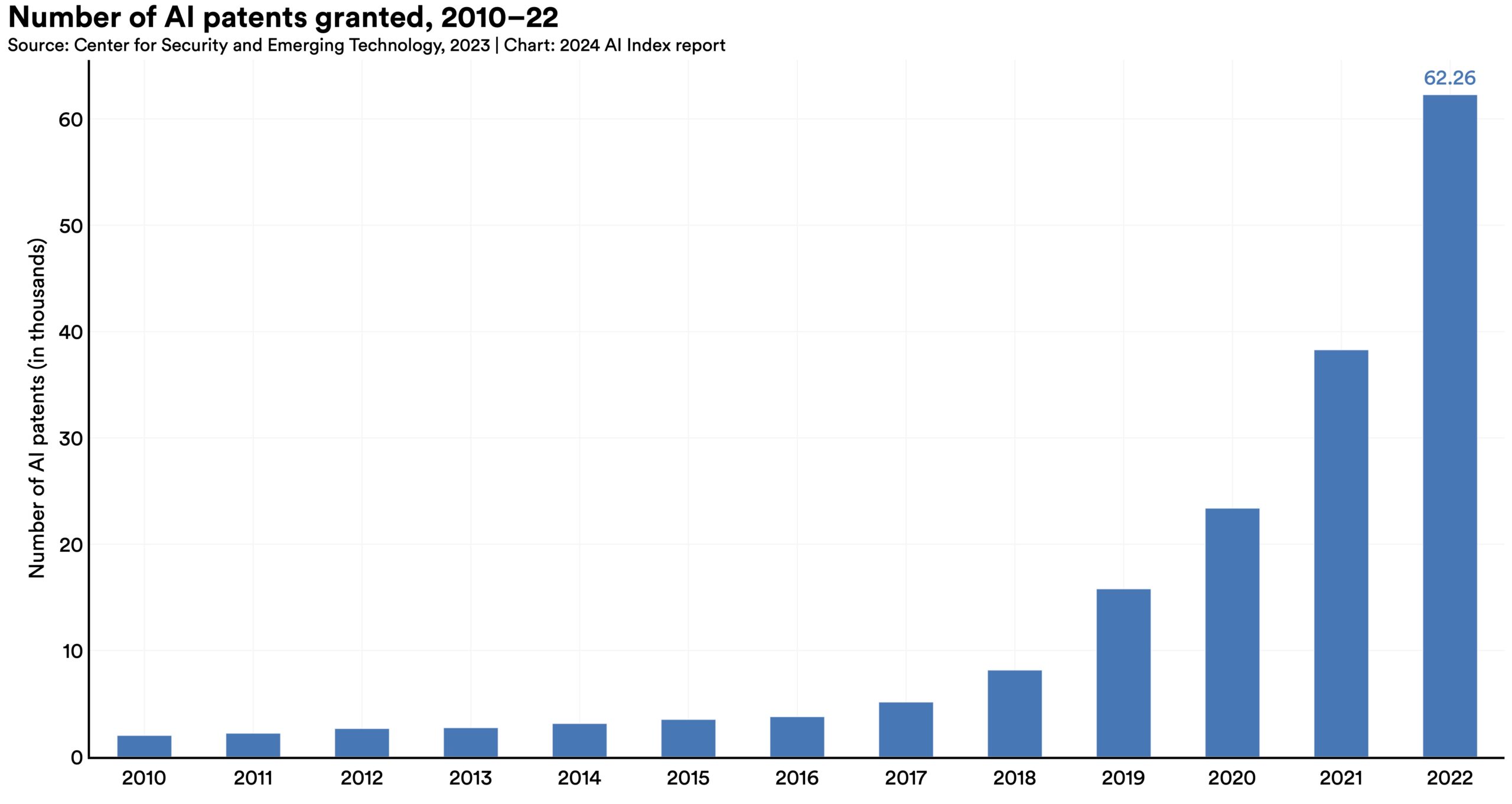

From 2021 to 2022, AI patent grants worldwide increased sharply by 62.7%. Since 2010, the number of granted AI patents has increased more than 31 times.

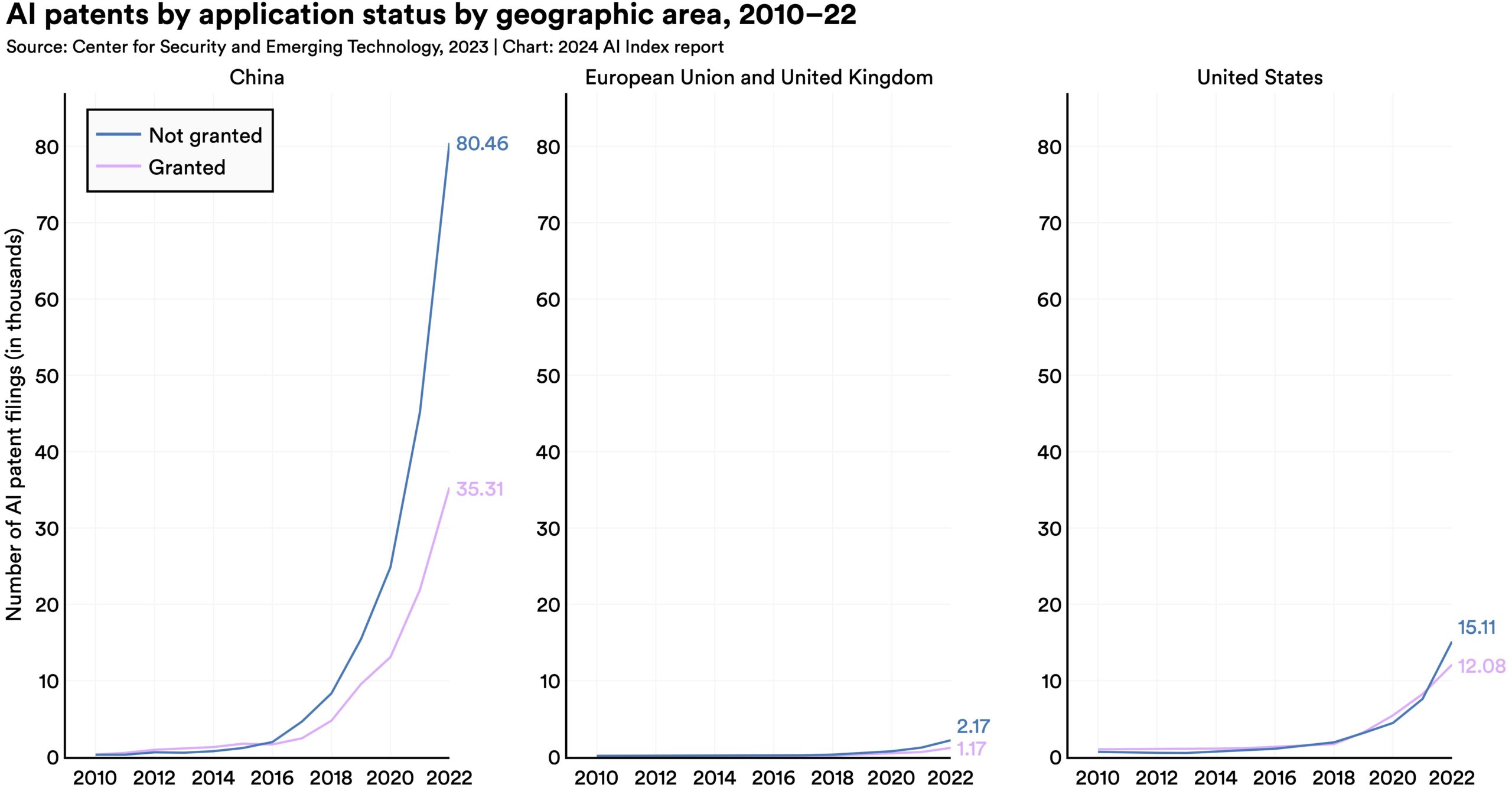

In 2022, China led global AI patent origins with 61.1%, significantly outpacing the United States, which accounted for 20.9% of AI patent origins. Since 2010, the U.S. share of AI patents has decreased from 54.1%.

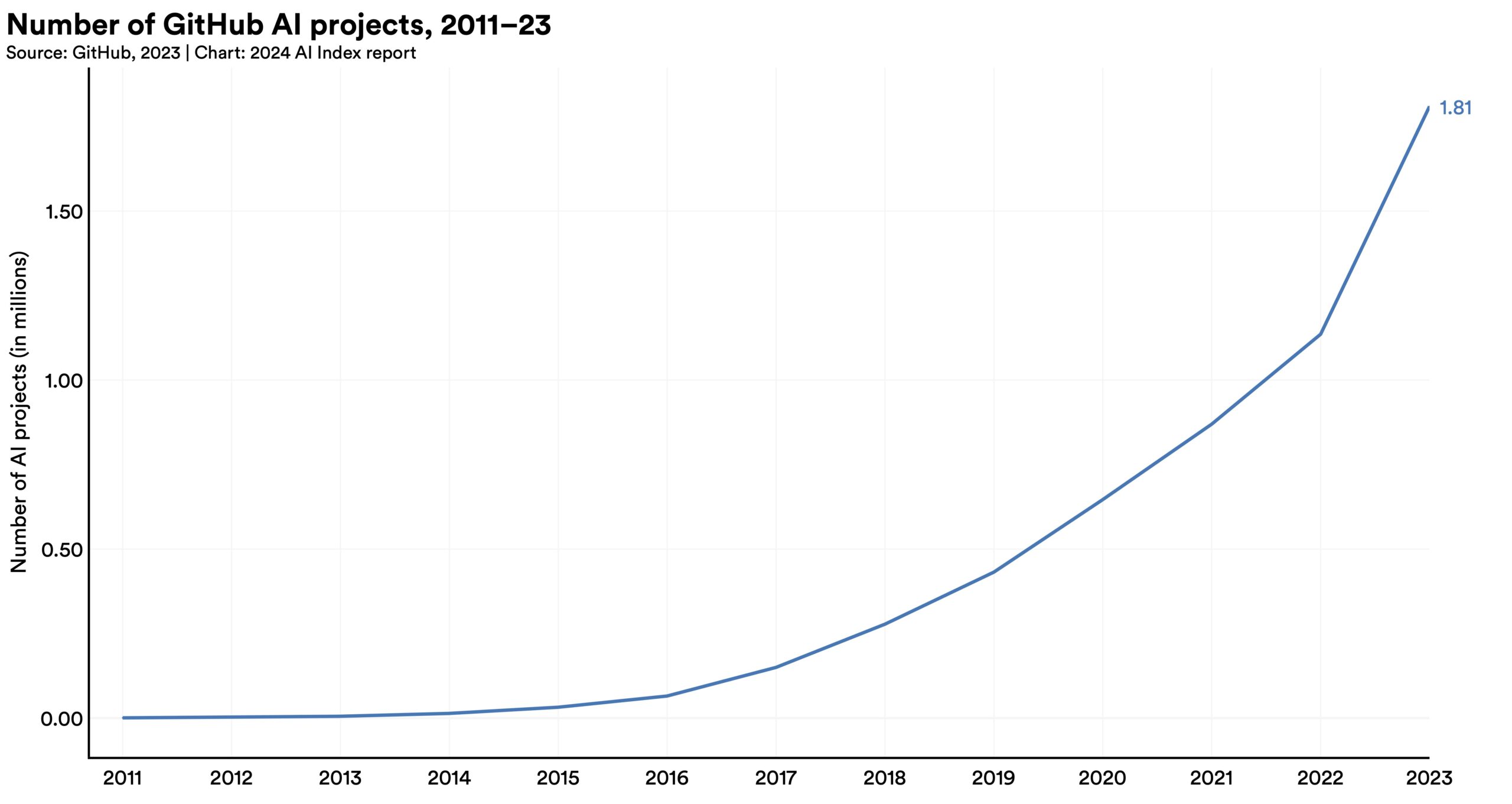

Since 2011, the number of AI-related projects on GitHub has seen a consistent increase, growing from 845 in 2011 to approximately 1.8 million in 2023. Notably, there was a sharp 59.3% rise in the total number of GitHub AI projects in 2023 alone. The total number of stars for AI-related projects on GitHub also significantly increased in 2023, more than tripling from 4.0 million in 2022 to 12.2 million.

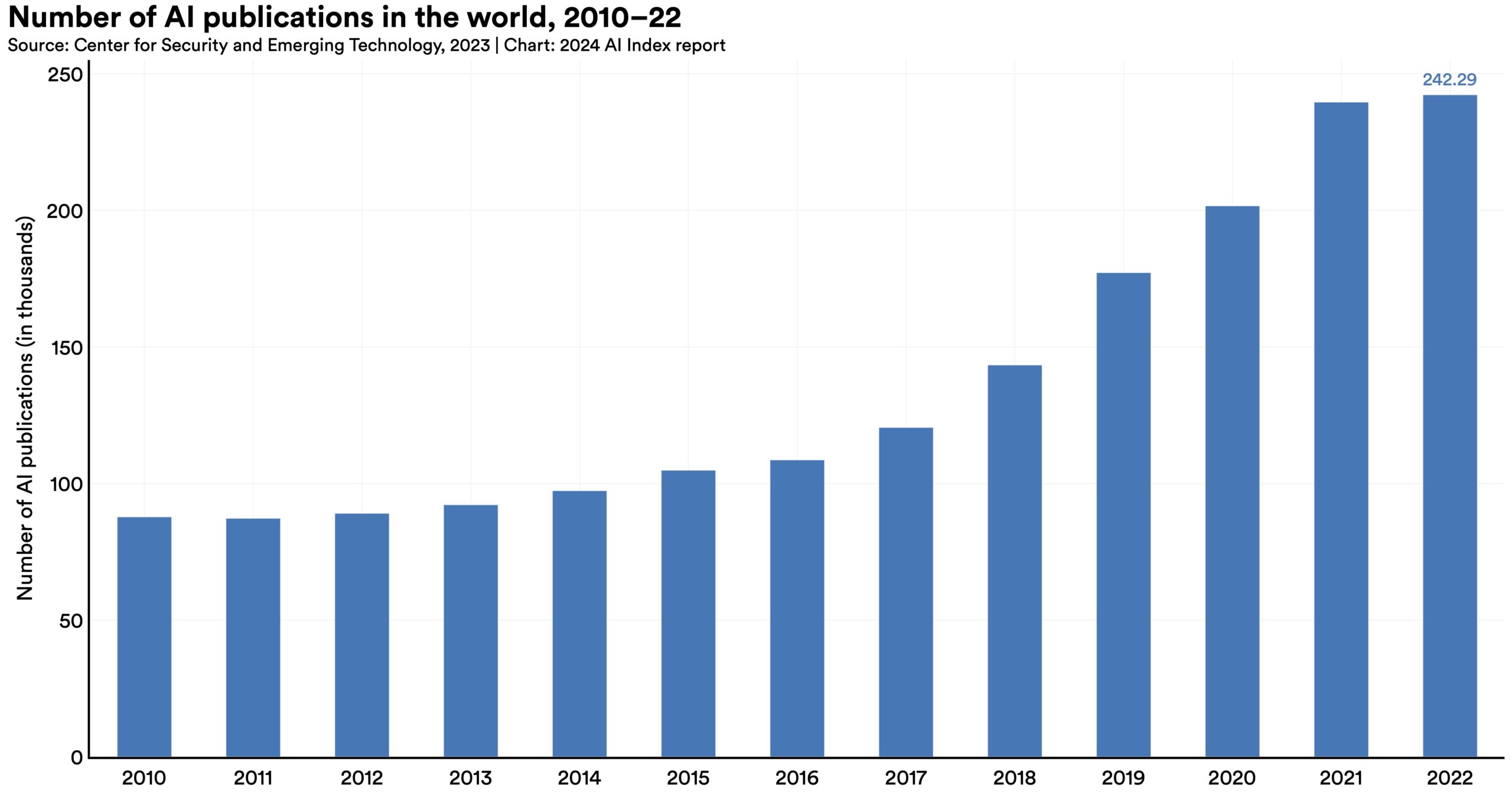

Between 2010 and 2022, the total number of AI publications nearly tripled, rising from approximately 88,000 in 2010 to more than 240,000 in 2022. The increase over the last year was a modest 1.1%.
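Phrases such as "more than tripling" and "nearly tripled" can be checked directly against the rounded figures quoted above. The following is a minimal sketch, assuming the rounded values as reported; `fold_change` is a hypothetical helper name, not something defined by the AI Index.

```python
def fold_change(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    if start <= 0:
        raise ValueError("start must be positive")
    return end / start


# Rounded figures quoted in the highlights above (illustrative use only).
github_stars_fold = fold_change(4.0e6, 12.2e6)    # GitHub stars, 2022 -> 2023
publications_fold = fold_change(88_000, 240_000)  # AI publications, 2010 -> 2022

print(f"GitHub stars:    {github_stars_fold:.2f}x")
print(f"AI publications: {publications_fold:.2f}x")
```

With these inputs the star count comes out slightly above 3x and the publication count slightly below 3x, consistent with the wording of the two highlights.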

The technical performance section of this year’s AI Index offers a comprehensive overview of AI advancements in 2023. It starts with a high-level overview of AI technical performance, tracing its broad evolution over time. The chapter then examines the current state of a wide range of AI capabilities, including language processing, coding, computer vision (image and video analysis), reasoning, audio processing, autonomous agents, robotics, and reinforcement learning. It also shines a spotlight on notable AI research breakthroughs from the past year, exploring methods for improving LLMs through prompting, optimization, and fine-tuning, and wraps up with an exploration of AI systems’ environmental footprint.

AI has surpassed human performance on several benchmarks, including some in image classification, visual reasoning, and English understanding. Yet it trails behind on more complex tasks like competition-level mathematics, visual commonsense reasoning, and planning.

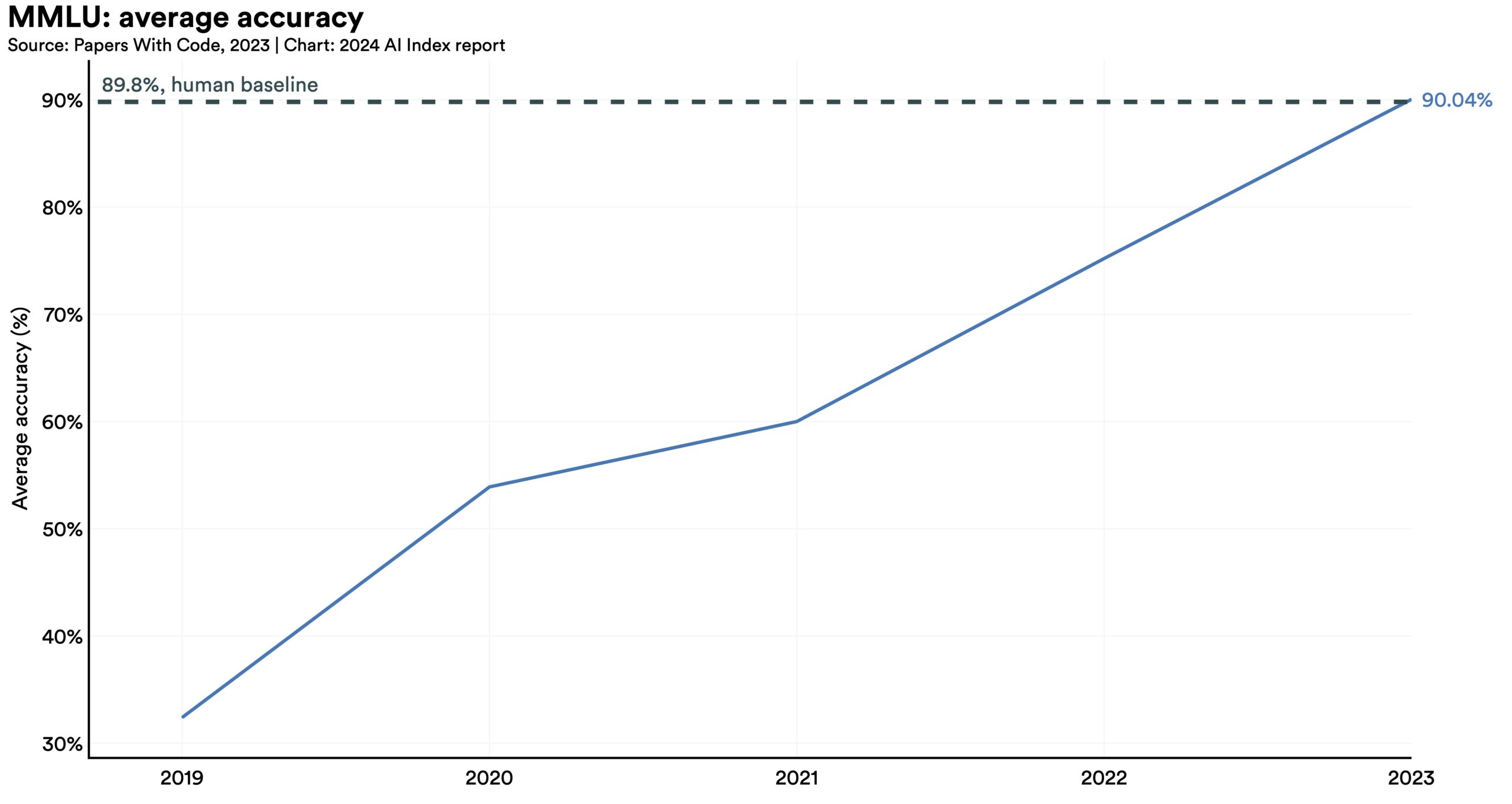

Traditionally AI systems have been limited in scope, with language models excelling in text comprehension but faltering in image processing, and vice versa. However, recent advancements have led to the development of strong multimodal models, such as Google’s Gemini and OpenAI’s GPT-4. These models demonstrate flexibility and are capable of handling images and text and, in some instances, can even process audio.

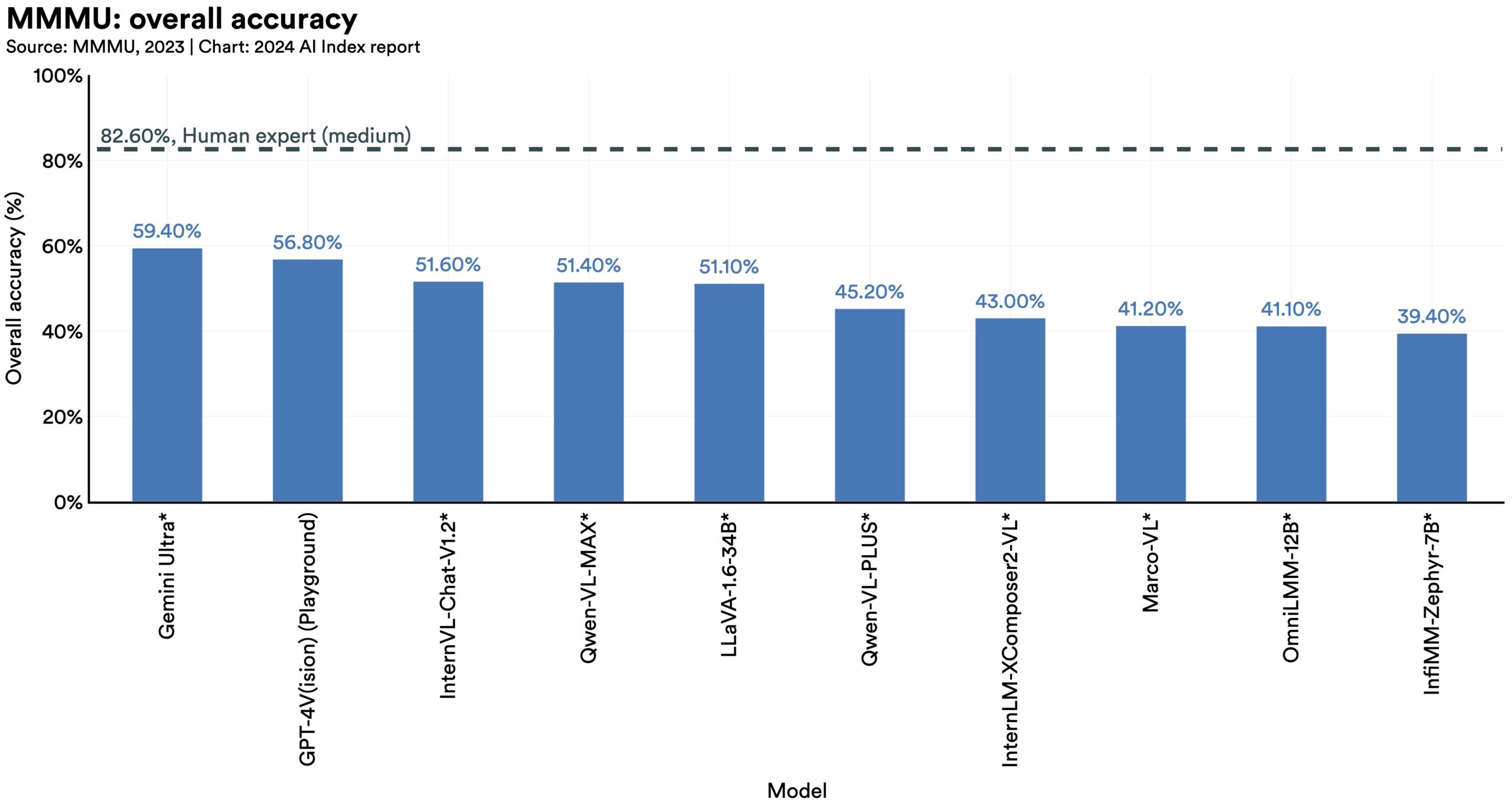

AI models have reached performance saturation on established benchmarks such as ImageNet, SQuAD, and SuperGLUE, prompting researchers to develop more challenging ones. In 2023, several challenging new benchmarks emerged, including SWE-bench for coding, HEIM for image generation, MMMU for general reasoning, MoCa for moral reasoning, AgentBench for agent-based behavior, and HaluEval for hallucinations.

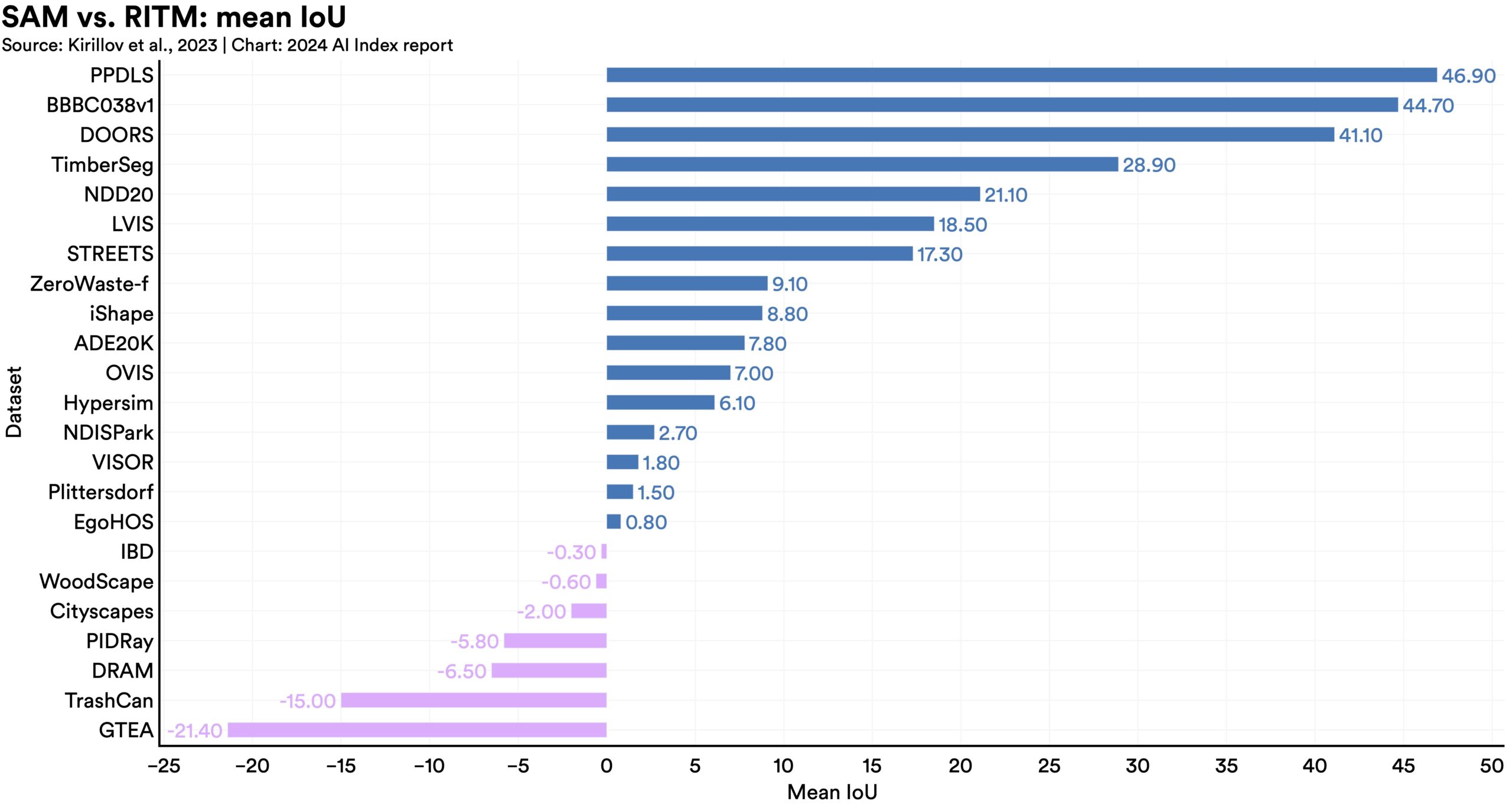

New AI models such as SegmentAnything and Skoltech are being used to generate specialized data for tasks like image segmentation and 3D reconstruction. Data is vital for AI technical improvements. The use of AI to create more data enhances current capabilities and paves the way for future algorithmic improvements, especially on harder tasks.

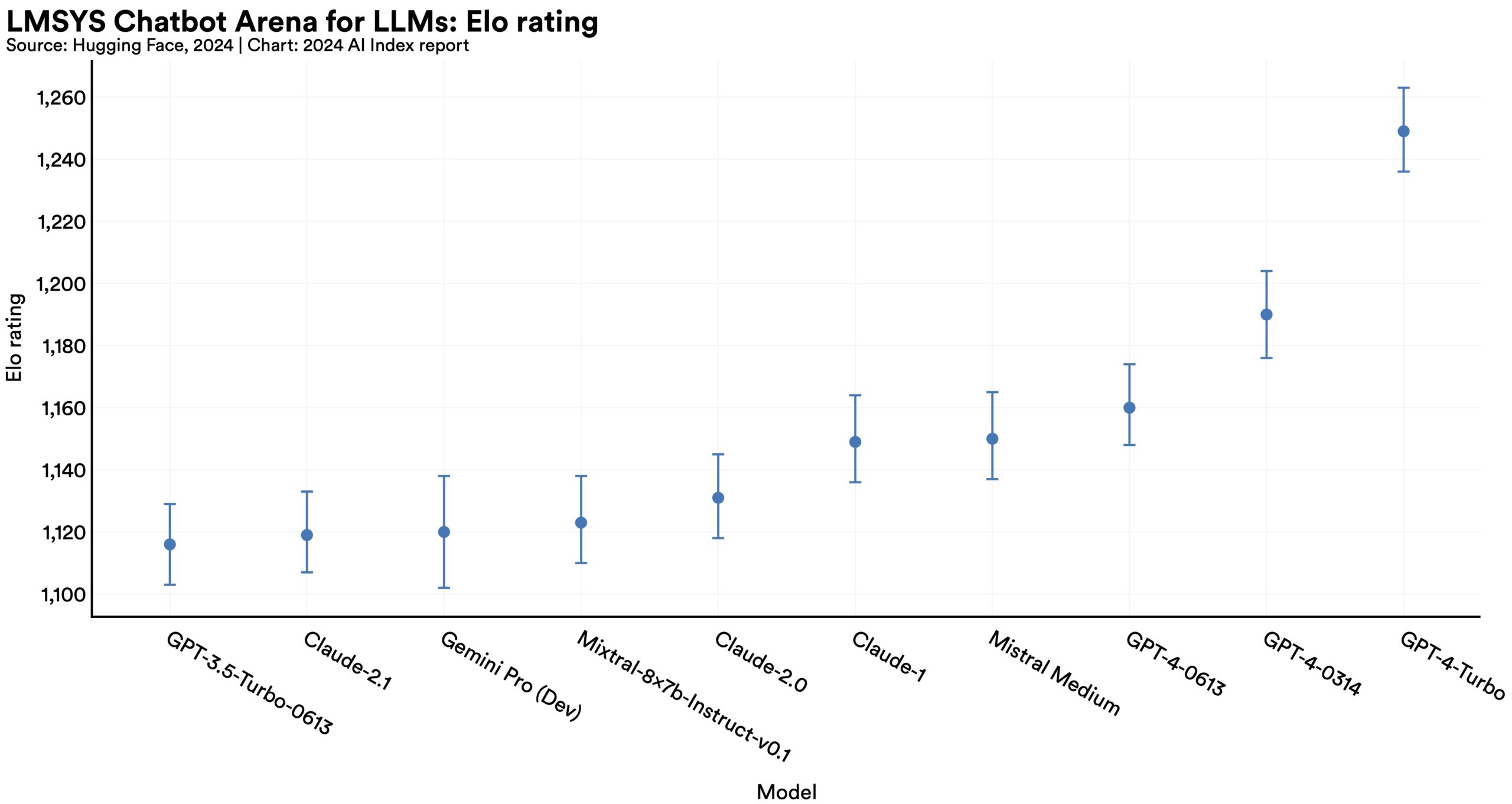

With generative models producing high-quality text, images, and more, benchmarking has slowly started shifting toward incorporating human evaluations like the Chatbot Arena Leaderboard rather than computerized rankings like ImageNet or SQuAD. Public feeling about AI is becoming an increasingly important consideration in tracking AI progress.

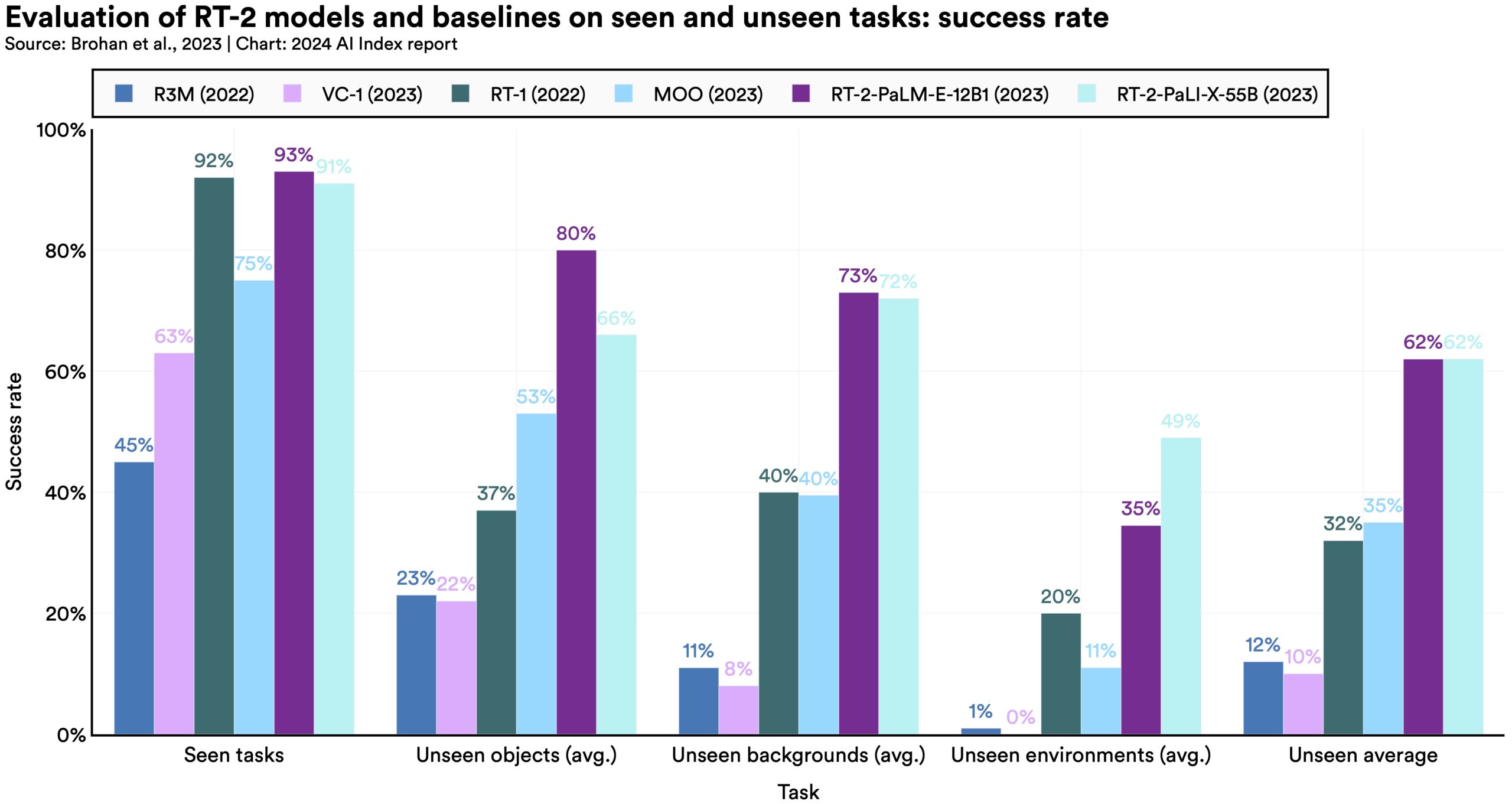

The fusion of language modeling with robotics has given rise to more flexible robotic systems like PaLM-E and RT-2. Beyond their improved robotic capabilities, these models can ask questions, which marks a significant step toward robots that can interact more effectively with the real world.

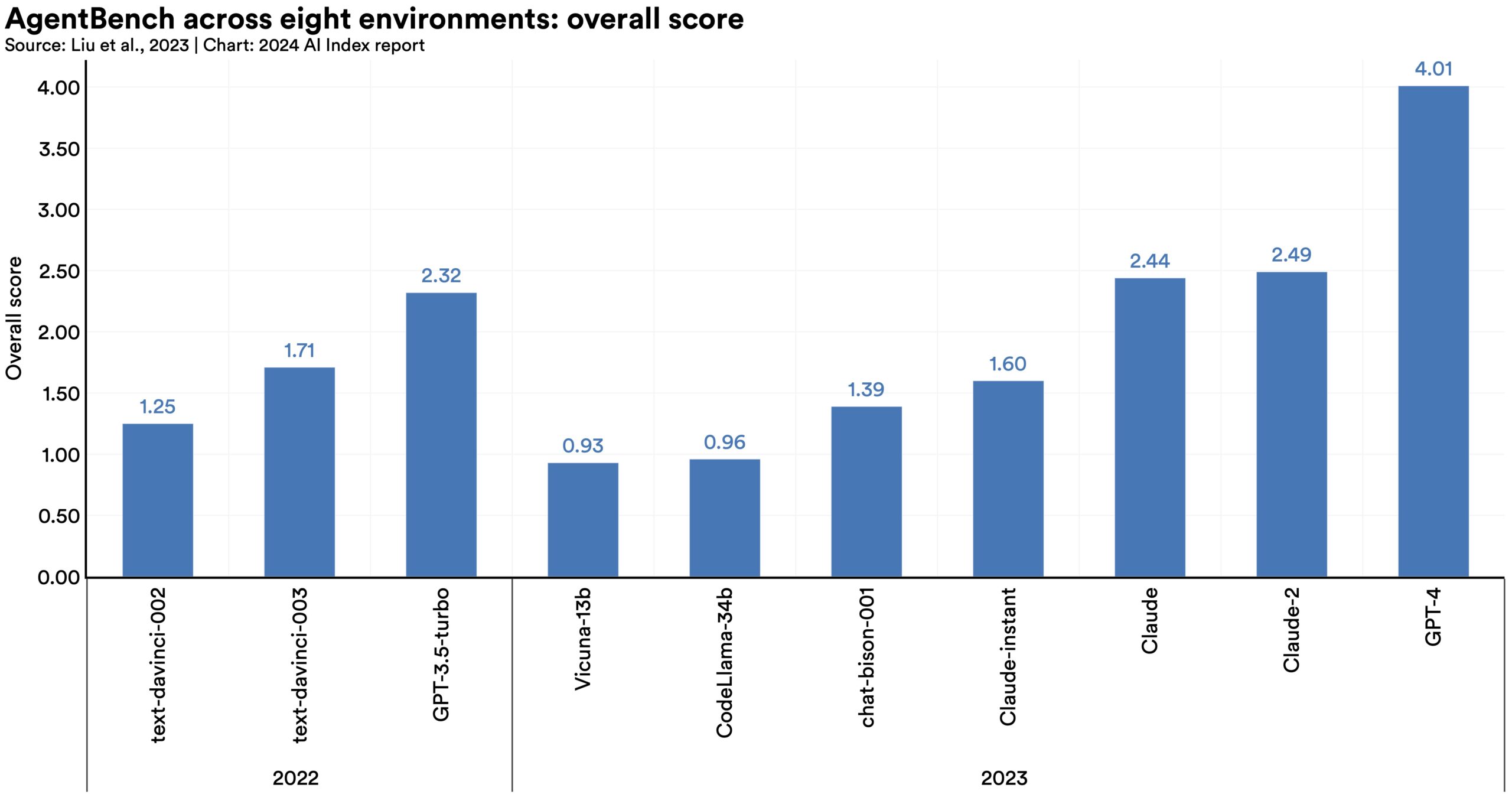

Creating AI agents, systems capable of autonomous operation in specific environments, has long challenged computer scientists. However, emerging research suggests that the performance of autonomous AI agents is improving. Current agents can now master complex games like Minecraft and effectively tackle real-world tasks, such as online shopping and research assistance.

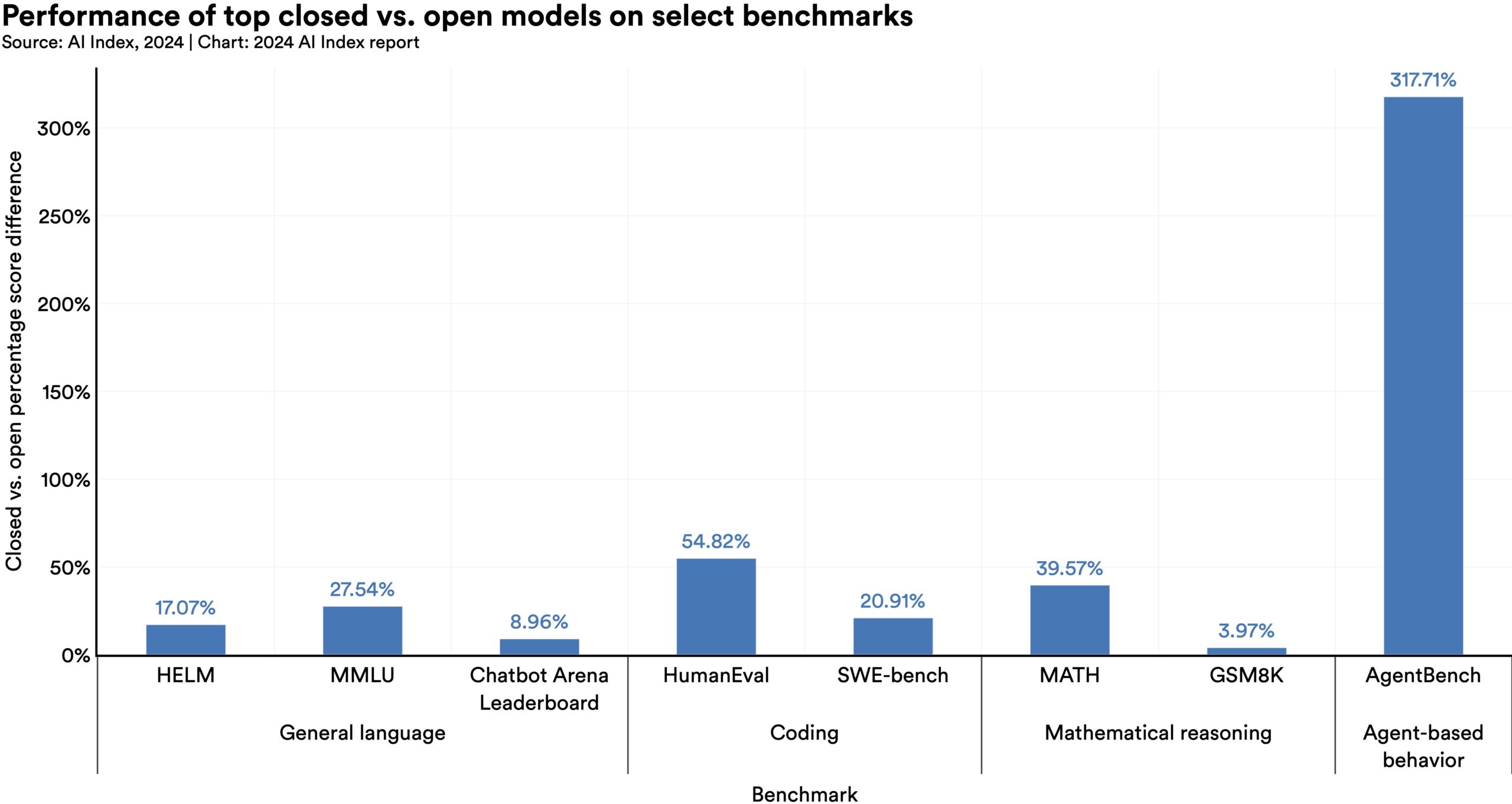

On 10 select AI benchmarks, closed models outperformed open ones, with a median performance advantage of 24.2%. Differences in the performance of closed and open models carry important implications for AI policy debates.

AI is increasingly woven into nearly every facet of our lives. This integration is occurring in sectors such as education, finance, and healthcare, where critical decisions are often based on algorithmic insights. This trend promises to bring many advantages; however, it also introduces potential risks. Consequently, in the past year, there has been a significant focus on the responsible development and deployment of AI systems. The AI community has also become more concerned with assessing the impact of AI systems and mitigating risks for those affected.

This chapter explores key trends in responsible AI by examining metrics, research, and benchmarks in four key responsible AI areas: privacy and data governance, transparency and explainability, security and safety, and fairness. Given that 4 billion people are expected to vote globally in 2024, this chapter also features a special section on AI and elections and more broadly explores the potential impact of AI on political processes.

New research from the AI Index reveals a significant lack of standardization in responsible AI reporting. Leading developers, including OpenAI, Google, and Anthropic, primarily test their models against different responsible AI benchmarks. This practice complicates efforts to systematically compare the risks and limitations of top AI models.

Political deepfakes are already affecting elections across the world, with recent research suggesting that existing AI deepfake detection methods perform with varying levels of accuracy. In addition, new projects like CounterCloud demonstrate how easily AI can create and disseminate fake content.

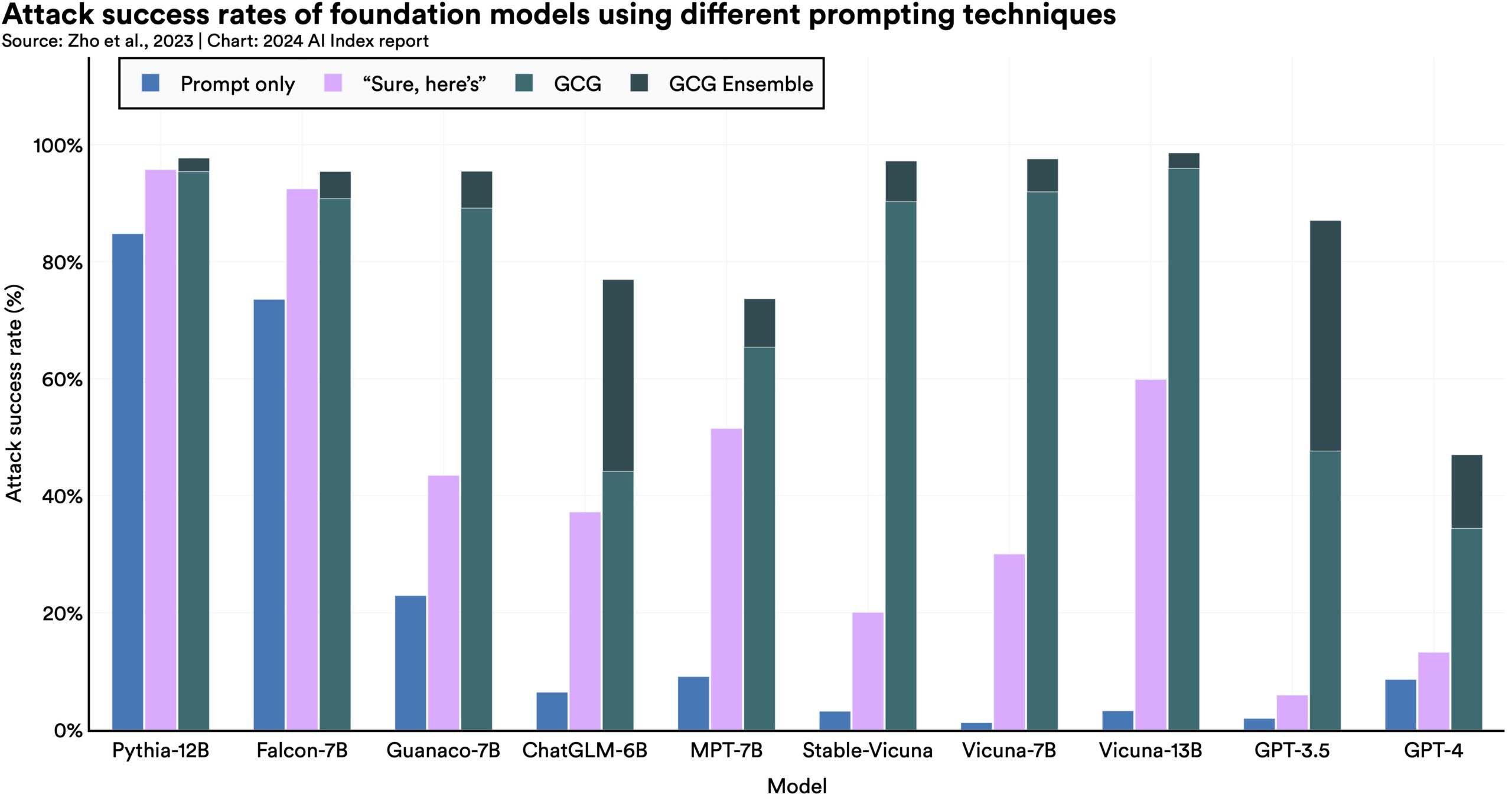

Previously, most efforts to red team AI models focused on testing adversarial prompts that intuitively made sense to humans. This year, researchers found less obvious strategies to get LLMs to exhibit harmful behavior, like asking the models to infinitely repeat random words.

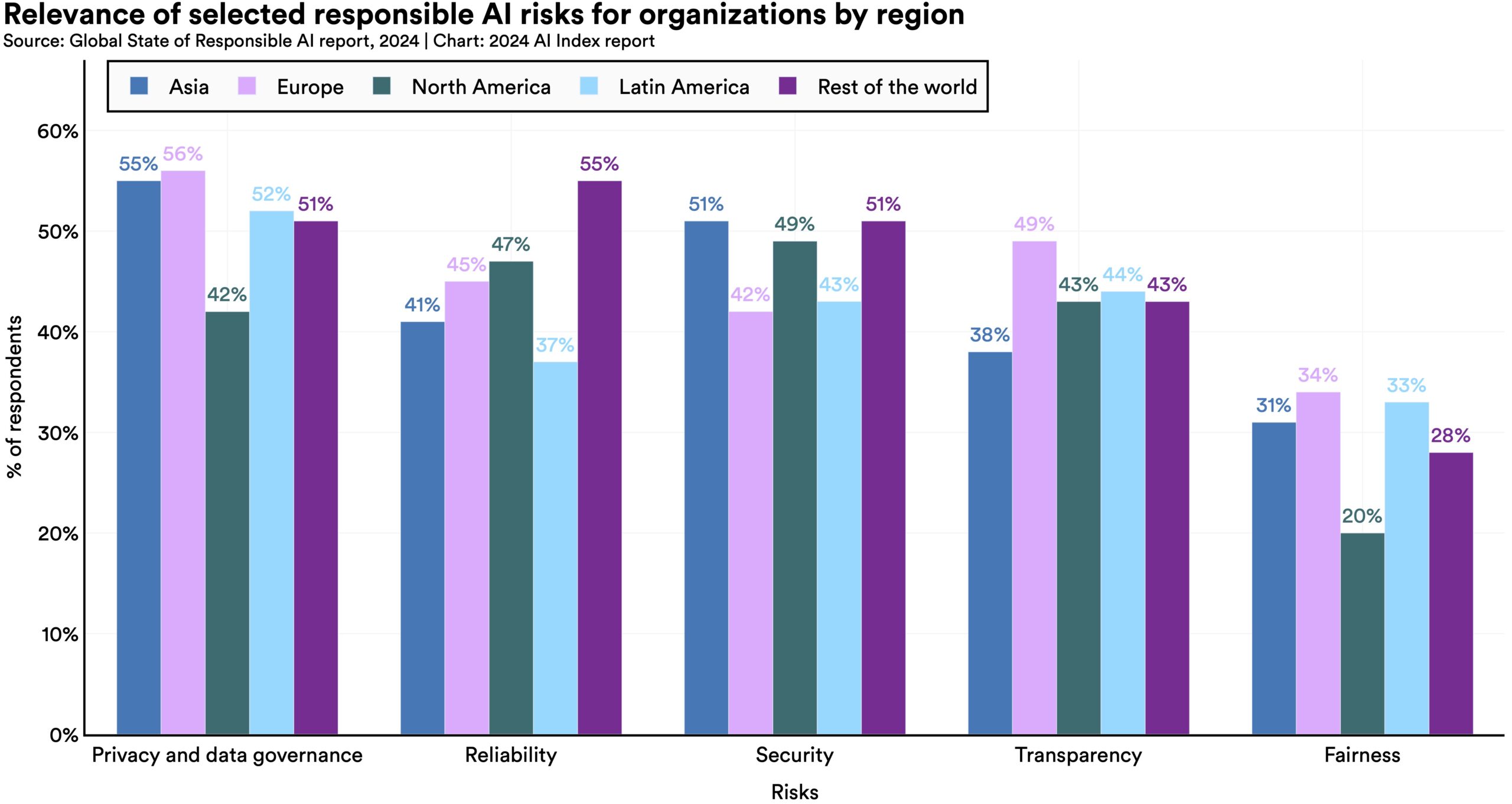

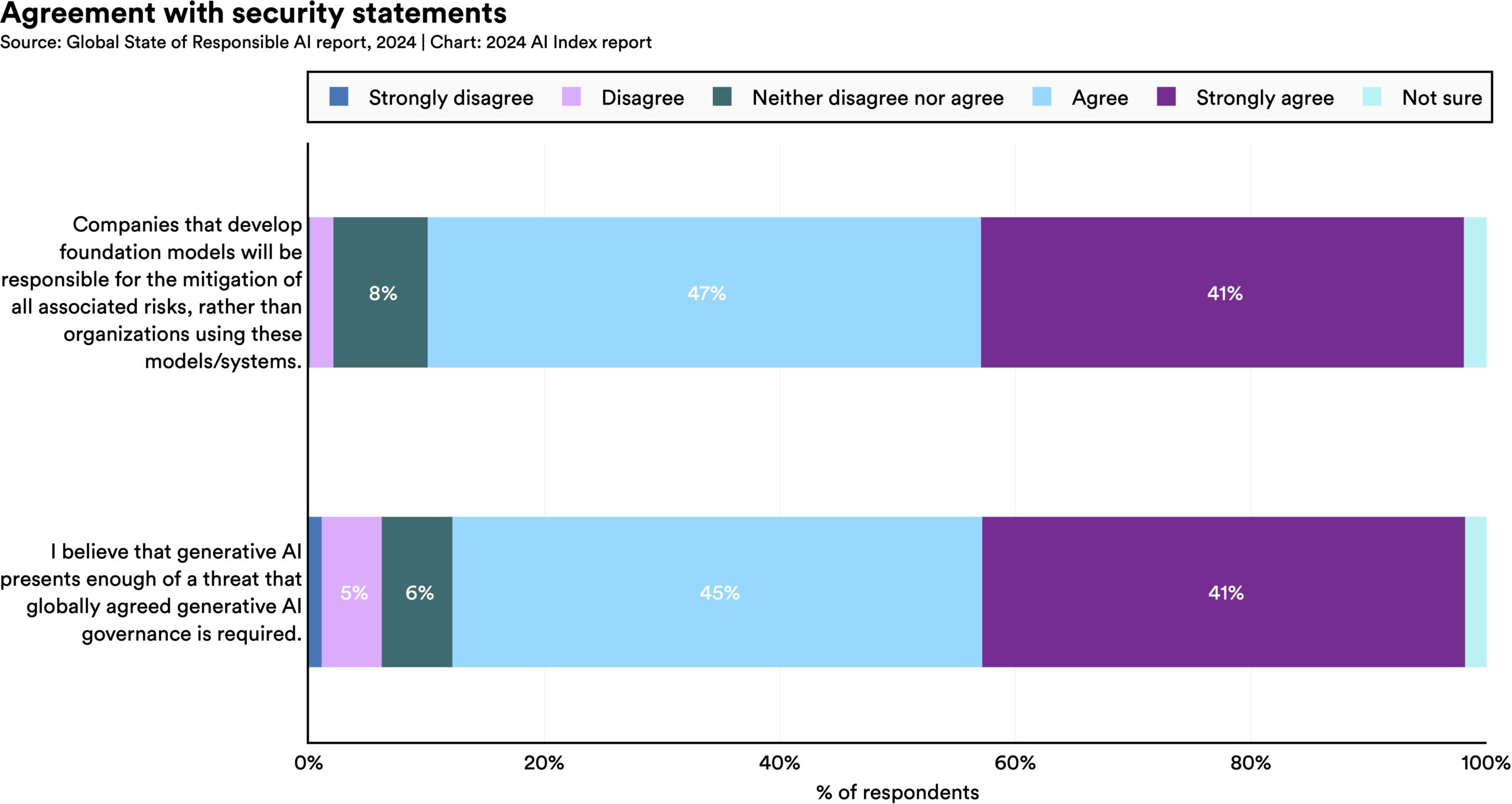

A global survey on responsible AI highlights that companies’ top AI-related concerns include privacy, security, and reliability. The survey shows that organizations are beginning to take steps to mitigate these risks. However, globally, most companies have so far only mitigated a portion of these risks.

Multiple researchers have shown that the generative outputs of popular LLMs may contain copyrighted material, such as excerpts from The New York Times or scenes from movies. Whether such output constitutes copyright violations is becoming a central legal question.

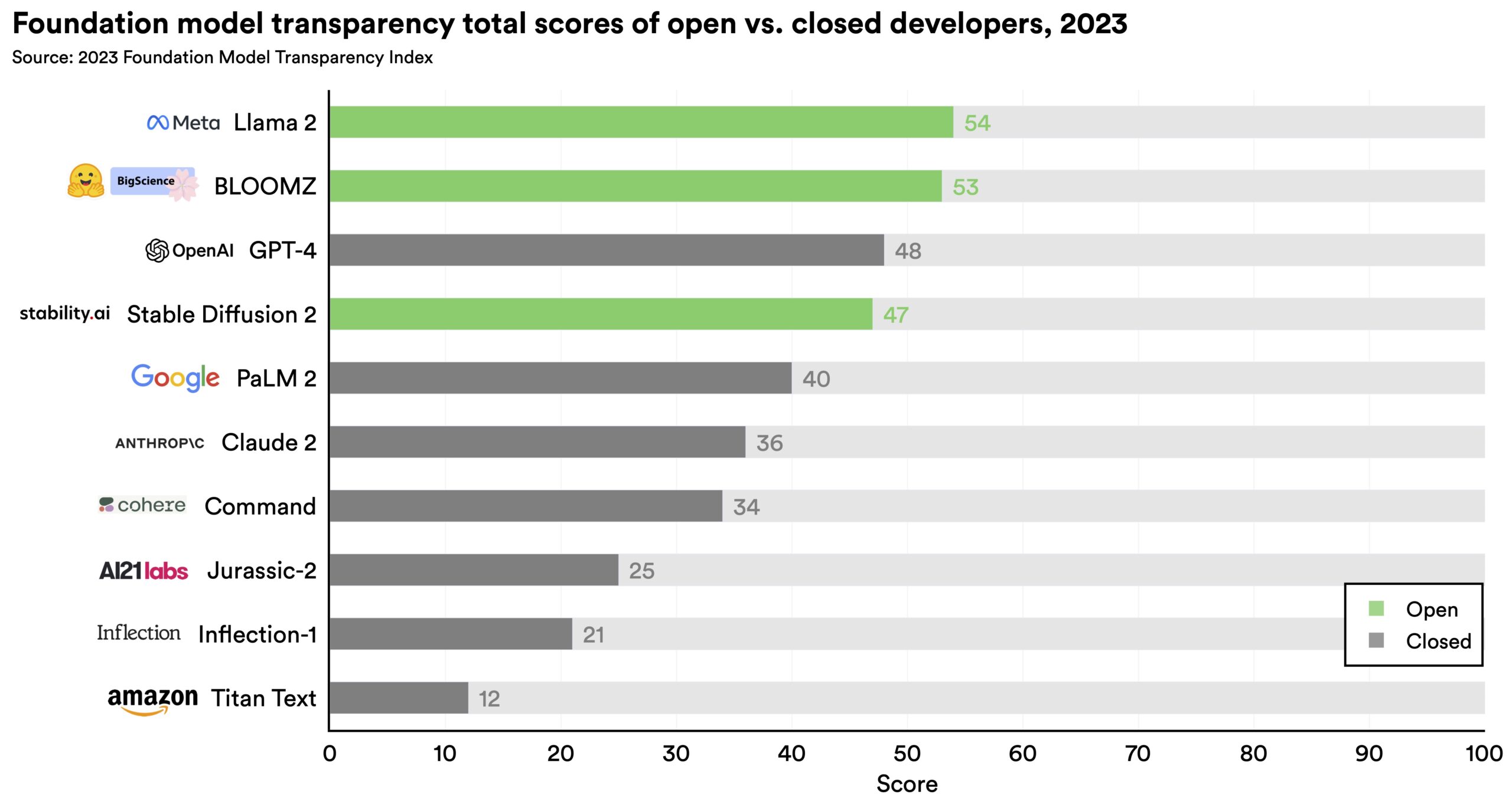

The newly introduced Foundation Model Transparency Index shows that AI developers lack transparency, especially regarding the disclosure of training data and methodologies. This lack of openness hinders efforts to further understand the robustness and safety of AI systems.

Over the past year, a substantial debate has emerged among AI scholars and practitioners regarding the focus on immediate model risks, like algorithmic discrimination, versus potential long-term existential threats. It has become challenging to distinguish which claims are scientifically founded and should inform policymaking. This difficulty is compounded by the tangible nature of already present short-term risks in contrast with the theoretical nature of existential threats.

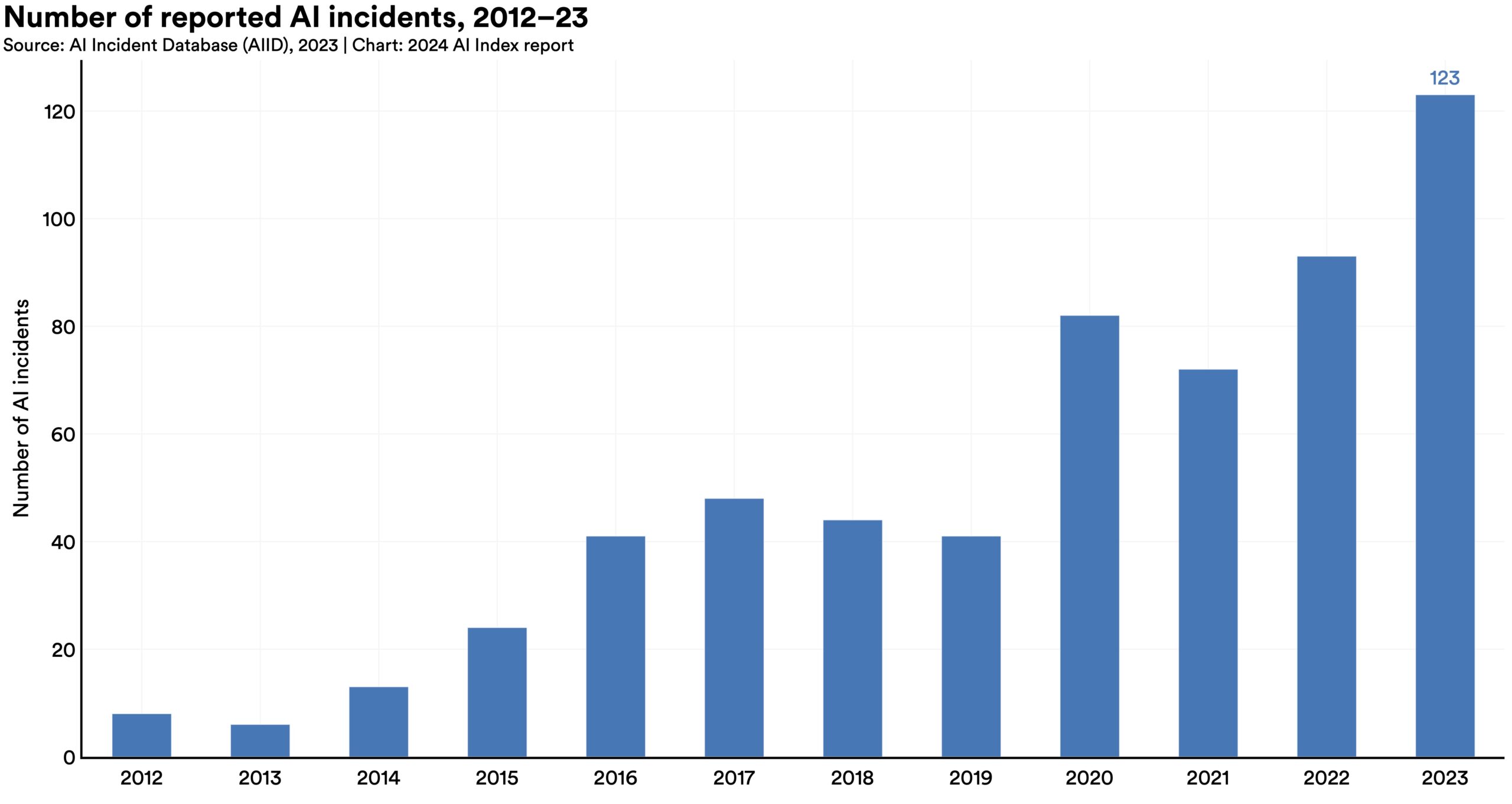

According to the AI Incident Database, which tracks incidents related to the misuse of AI, 123 incidents were reported in 2023, a 32.3% increase from 2022. Since 2013, AI incidents have grown more than twentyfold. One notable example is the AI-generated, sexually explicit deepfakes of Taylor Swift that were widely shared online.

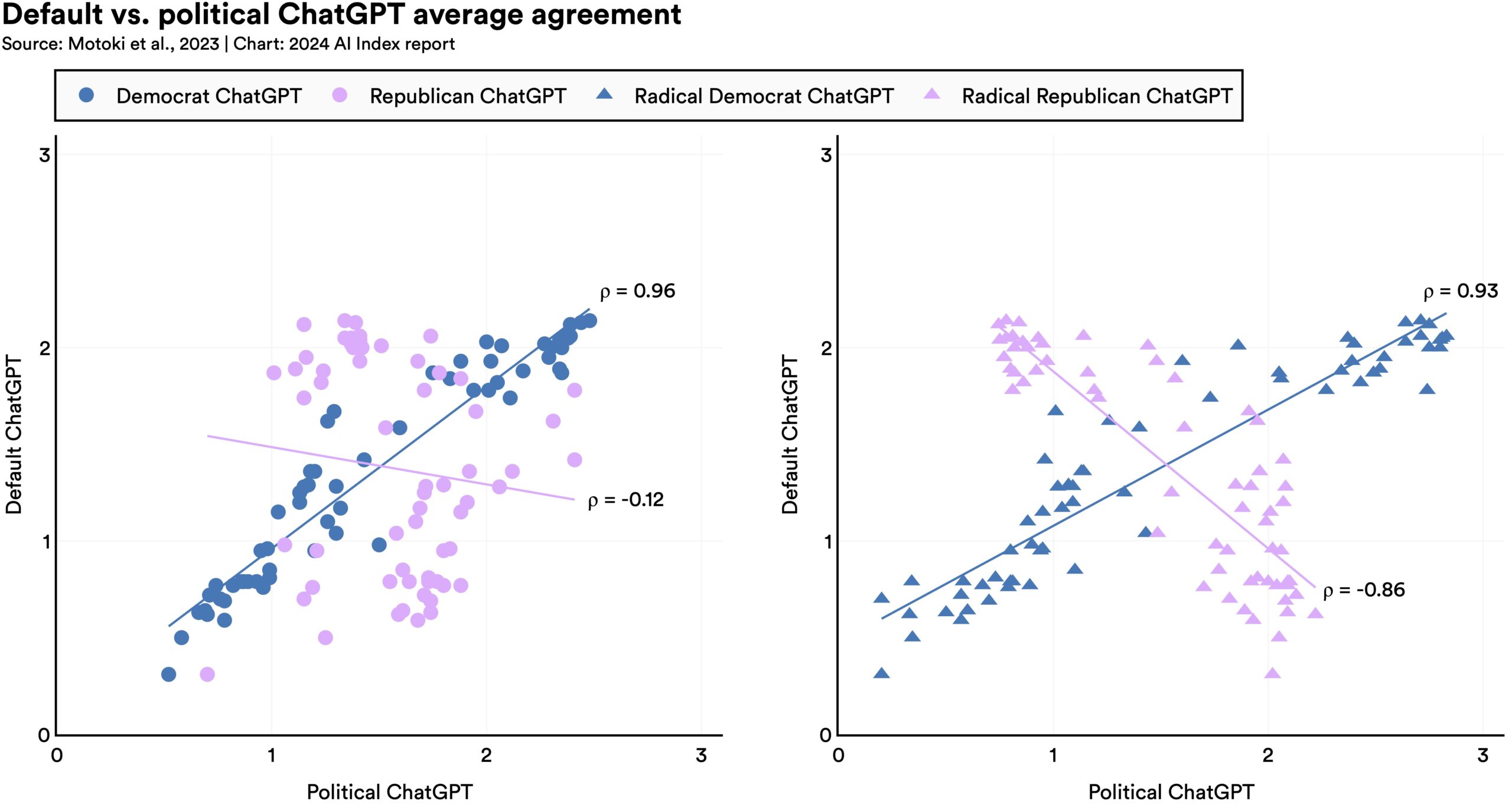

Researchers find a significant bias in ChatGPT toward Democrats in the United States and the Labour Party in the U.K. This finding raises concerns about the tool’s potential to influence users’ political views, particularly in a year marked by major global elections.

The integration of AI into the economy raises many compelling questions. Some predict that AI will drive productivity improvements, but the extent of its impact remains uncertain. A major concern is the potential for massive labor displacement: to what degree will jobs be automated versus augmented by AI? Companies are already utilizing AI in various ways across industries, but some regions of the world are witnessing greater investment inflows into this transformative technology. Moreover, investor interest appears to be gravitating toward specific AI subfields like natural language processing and data management.

This chapter examines AI-related economic trends using data from Lightcast, LinkedIn, Quid, McKinsey, Stack Overflow, and the International Federation of Robotics (IFR). It begins by analyzing AI-related occupations, covering labor demand, hiring trends, skill penetration, and talent availability. The chapter then explores corporate investment in AI, introducing a new section focused specifically on generative AI. It further examines corporate adoption of AI, assessing current usage and how developers adopt these technologies. Finally, it assesses AI’s current and projected economic impact and robot installations across various sectors.

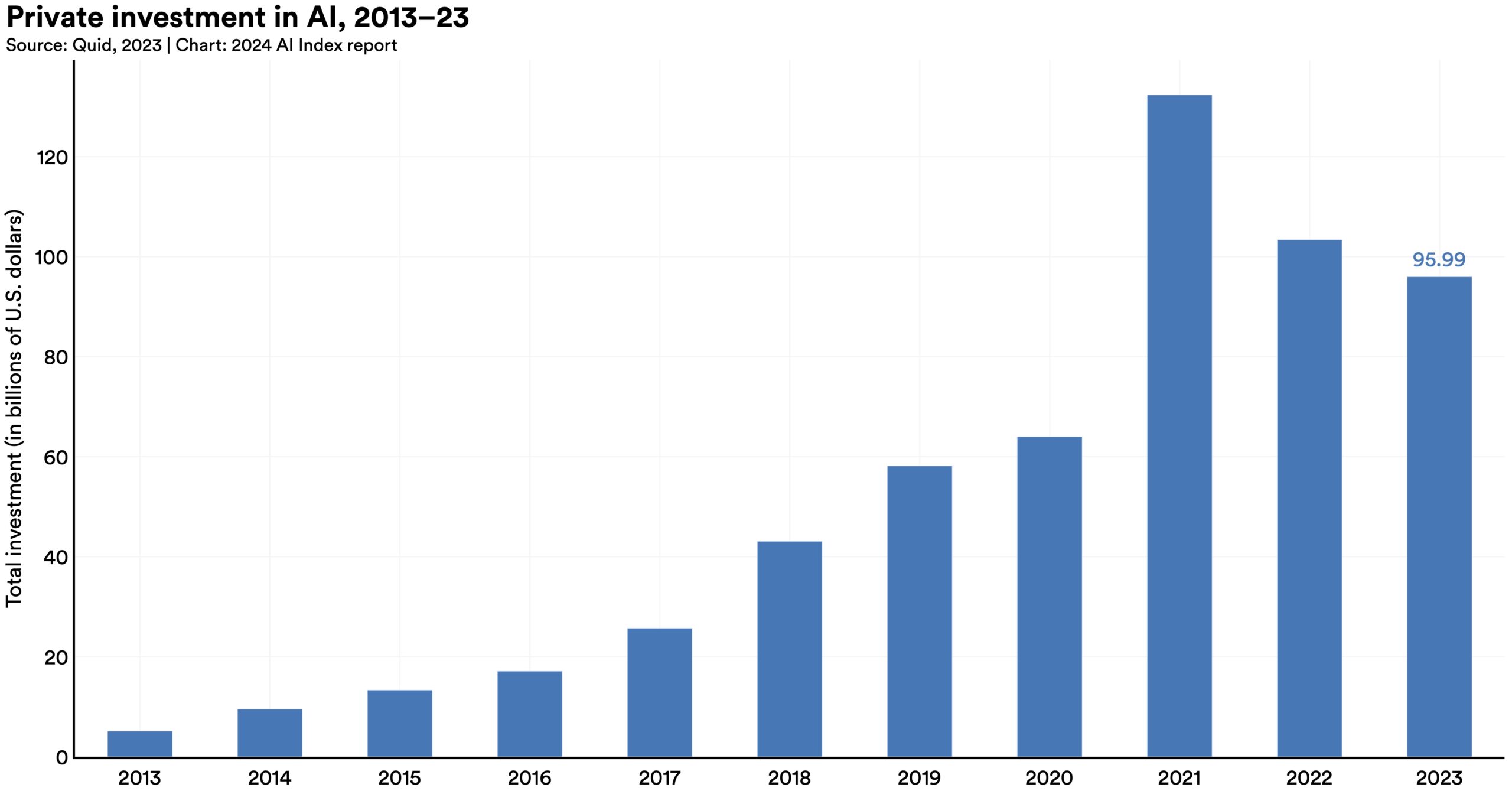

Despite a decline in overall AI private investment last year, funding for generative AI surged, nearly octupling from 2022 to reach $25.2 billion. Major players in the generative AI space, including OpenAI, Anthropic, Hugging Face, and Inflection, reported substantial fundraising rounds.

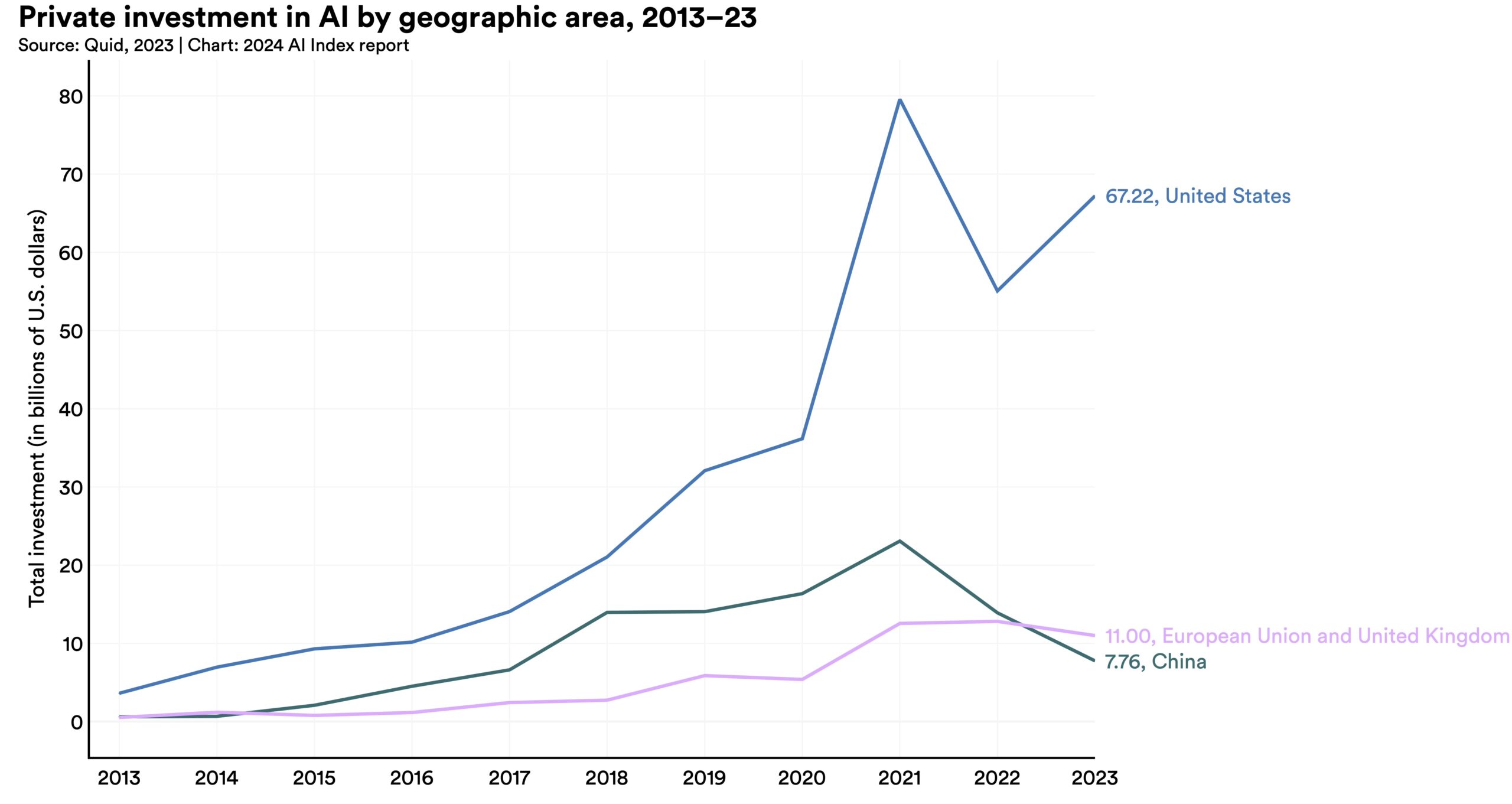

In 2023, the United States saw AI investments reach $67.2 billion, nearly 8.7 times more than China, the next highest investor. While private AI investment in China and the European Union, including the United Kingdom, declined by 44.2% and 14.1%, respectively, since 2022, the United States experienced a notable increase of 22.1% in the same time frame.

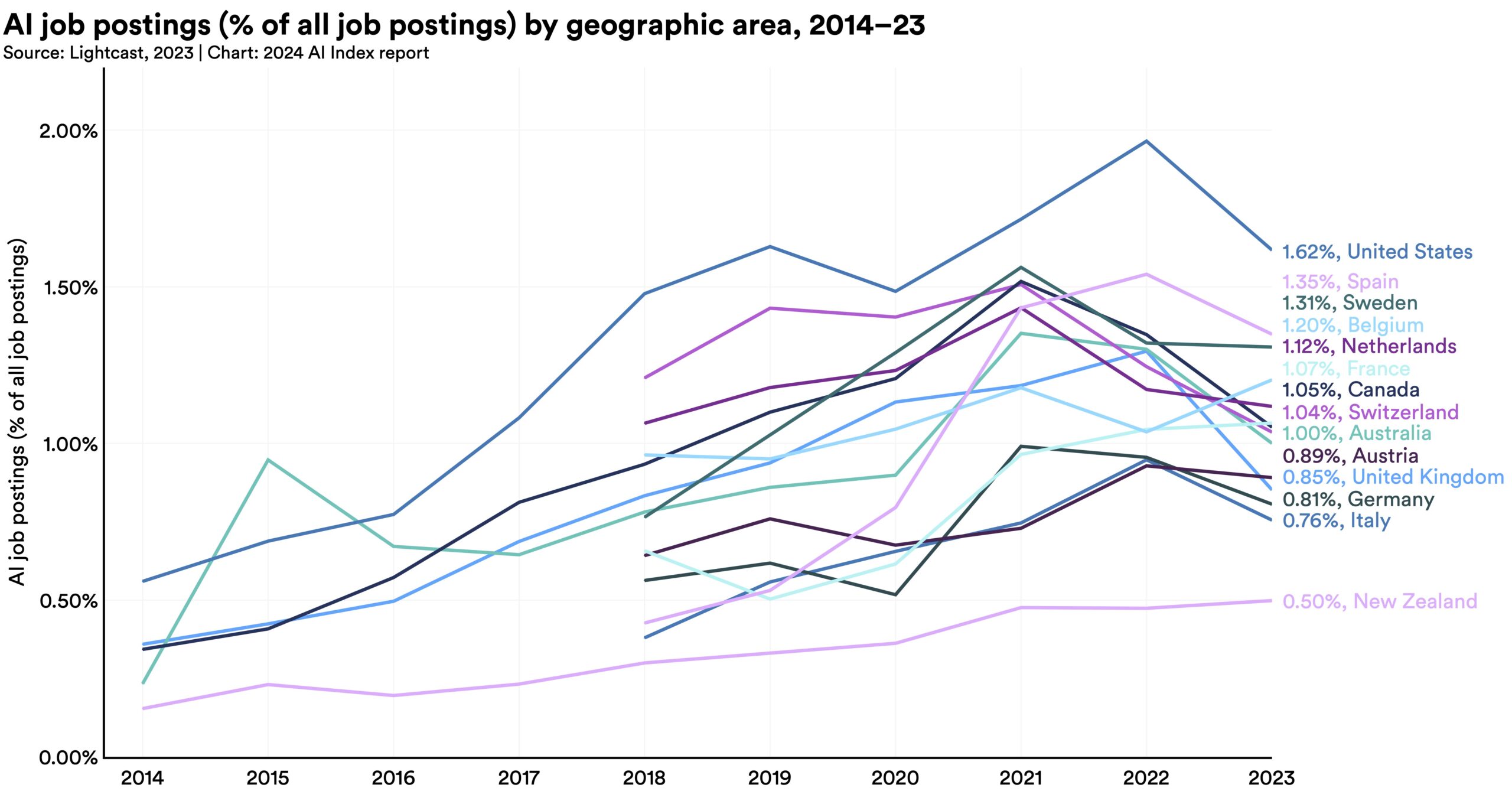

In 2022, AI-related positions made up 2.0% of all job postings in America, a figure that decreased to 1.6% in 2023. This decline in AI job listings is attributed to fewer postings from leading AI firms and a reduced proportion of tech roles within these companies.

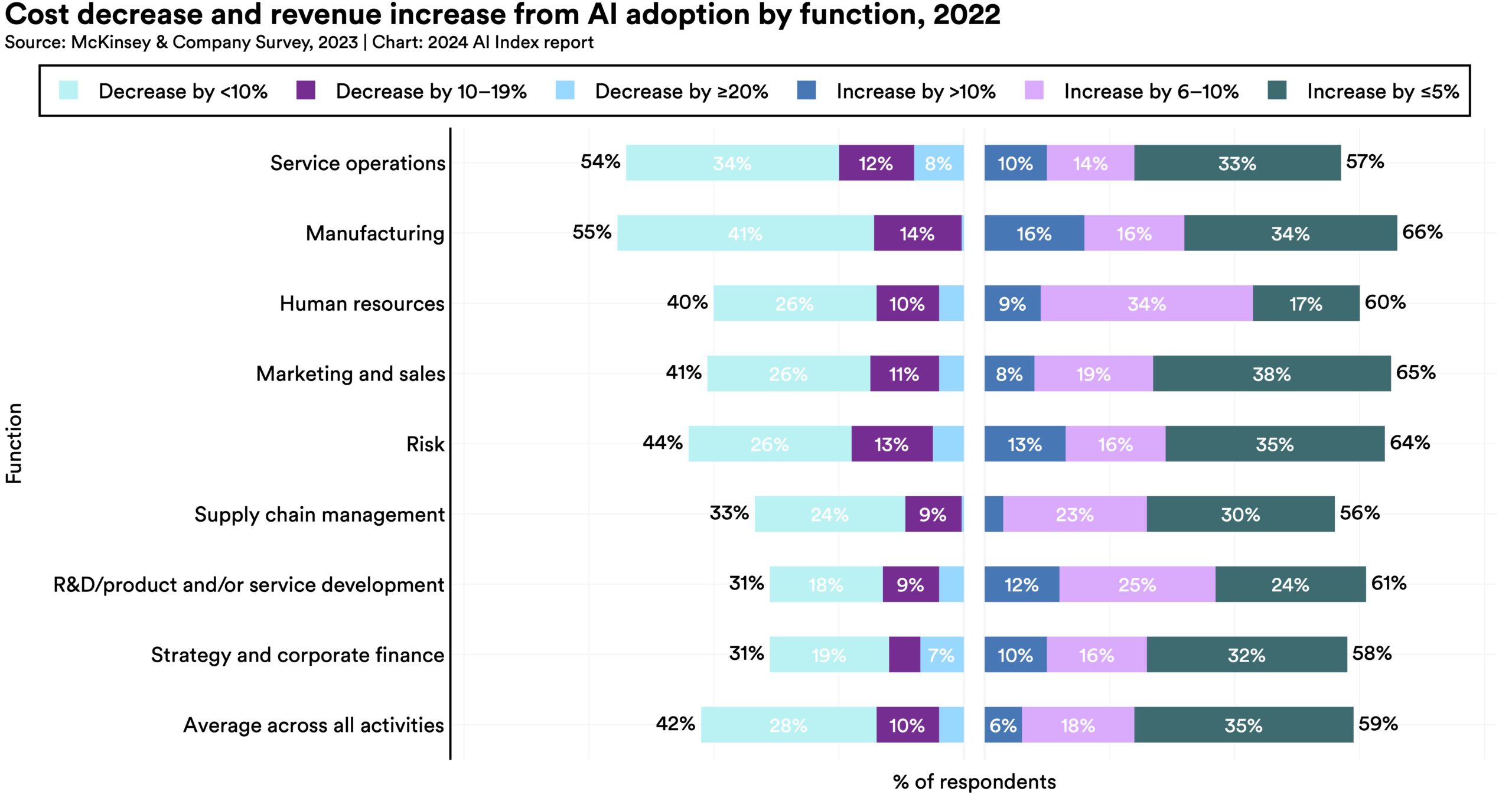

A new McKinsey survey reveals that 42% of surveyed organizations report cost reductions from implementing AI (including generative AI), and 59% report revenue increases. Compared to the previous year, there was a 10 percentage point increase in respondents reporting decreased costs, suggesting AI is driving significant business efficiency gains.

Global private AI investment has fallen for the second year in a row, though less than the sharp decrease from 2021 to 2022. The count of newly funded AI companies spiked to 1,812, up 40.6% from the previous year.

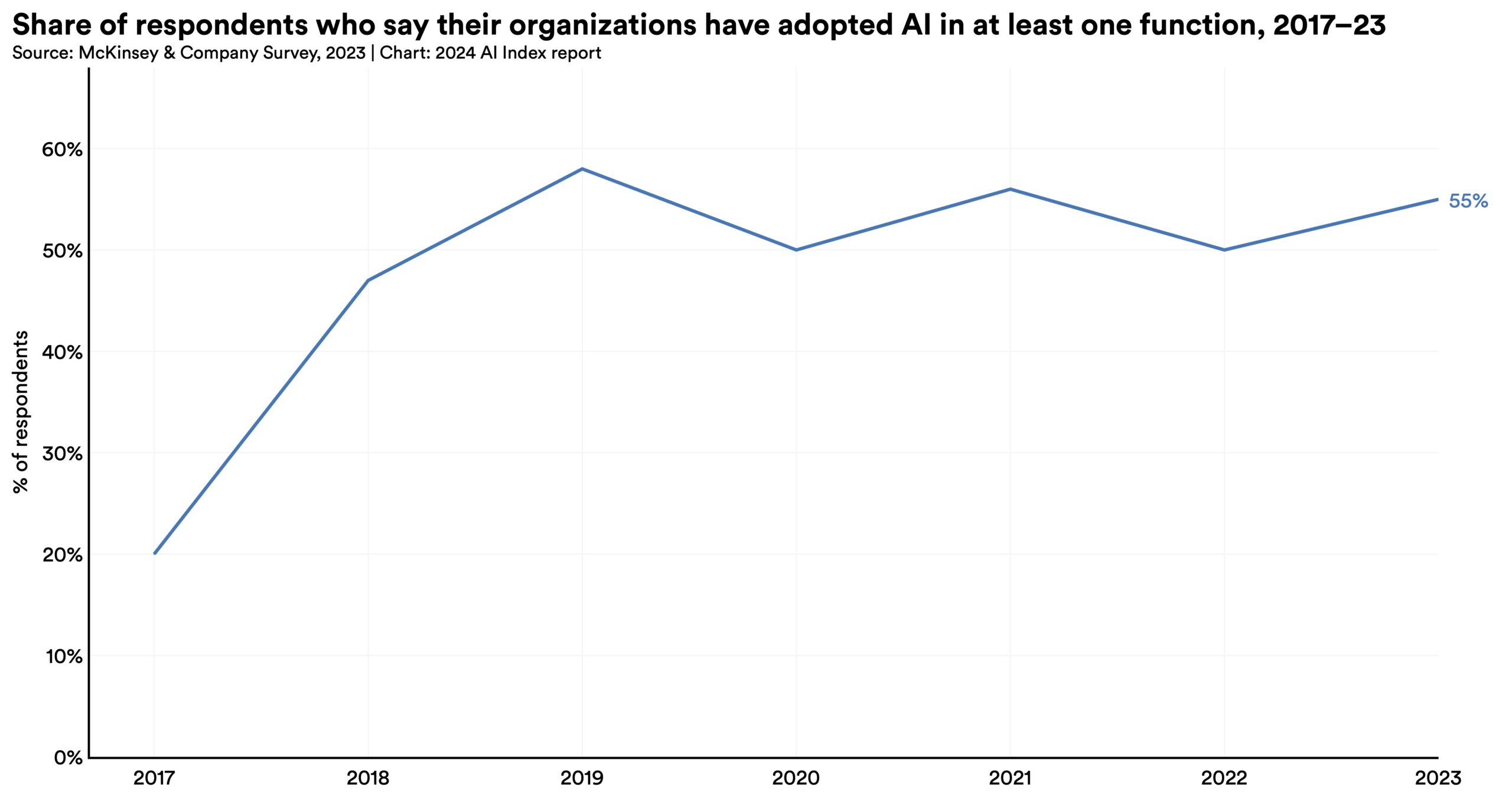

A 2023 McKinsey report reveals that 55% of organizations now use AI (including generative AI) in at least one business unit or function, up from 50% in 2022 and 20% in 2017.

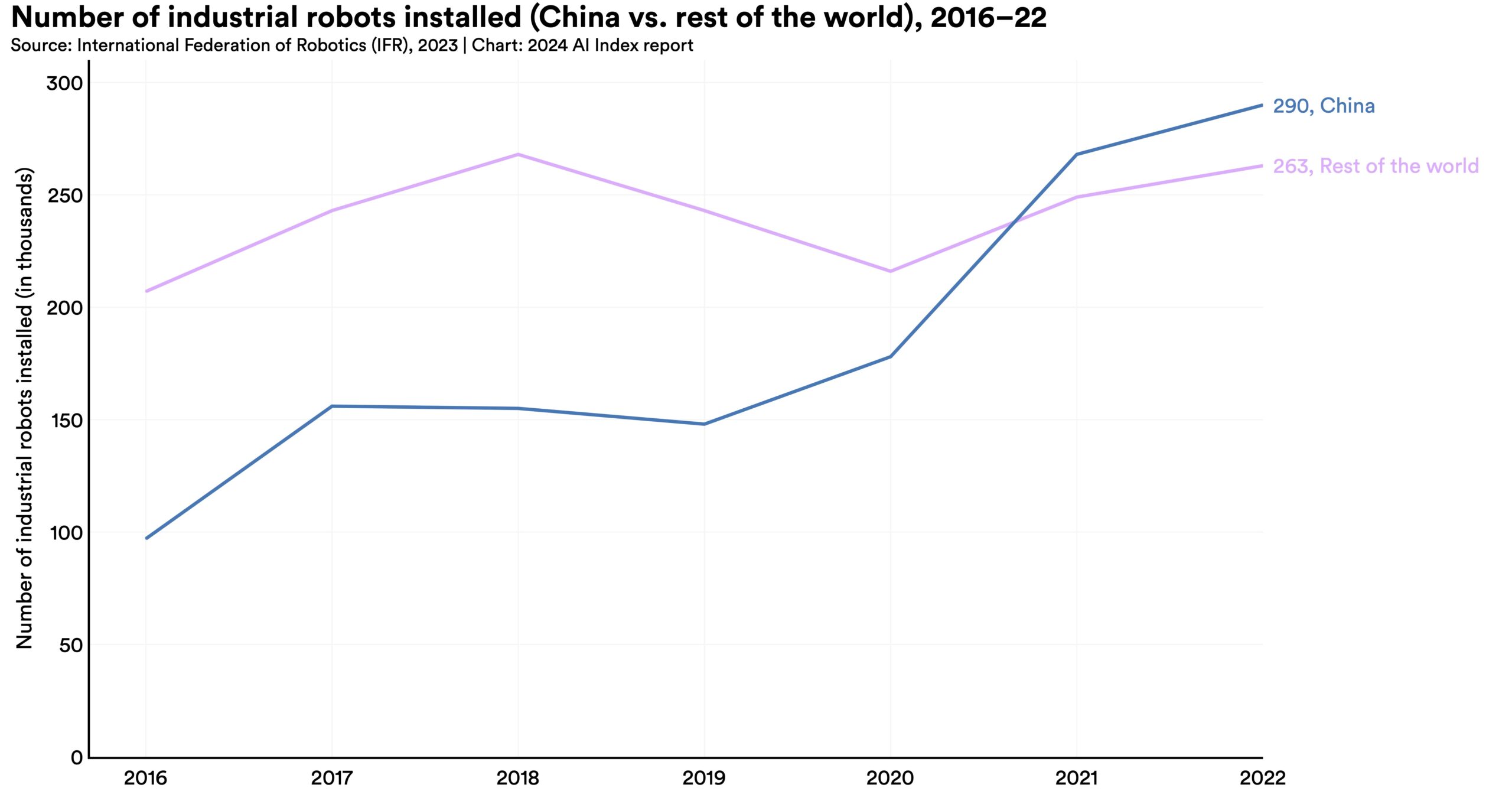

Since surpassing Japan in 2013 as the leading installer of industrial robots, China has significantly widened the gap with the nearest competitor nation. In 2013, China’s installations accounted for 20.8% of the global total, a share that rose to 52.4% by 2022.

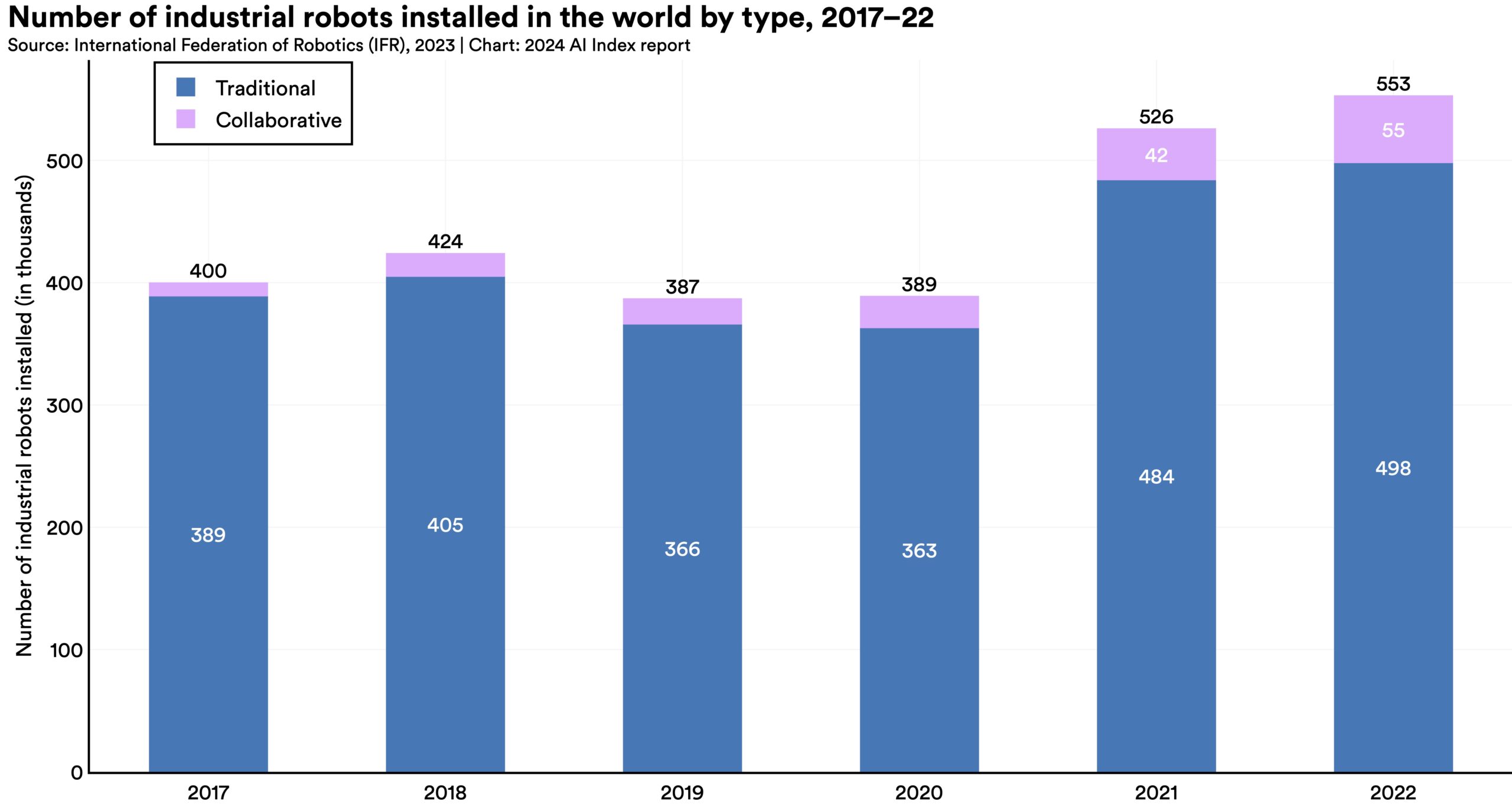

In 2017, collaborative robots represented a mere 2.8% of all new industrial robot installations, a figure that climbed to 9.9% by 2022. Similarly, 2022 saw a rise in service robot installations across all application categories, except for medical robotics. This trend indicates not just an overall increase in robot installations but also a growing emphasis on deploying robots for human-facing roles.

In 2023, several studies assessed AI’s impact on labor, suggesting that AI enables workers to complete tasks more quickly and to improve the quality of their output. These studies also demonstrated AI’s potential to bridge the skill gap between low- and high-skilled workers. Still other studies caution that using AI without proper oversight can lead to diminished performance.

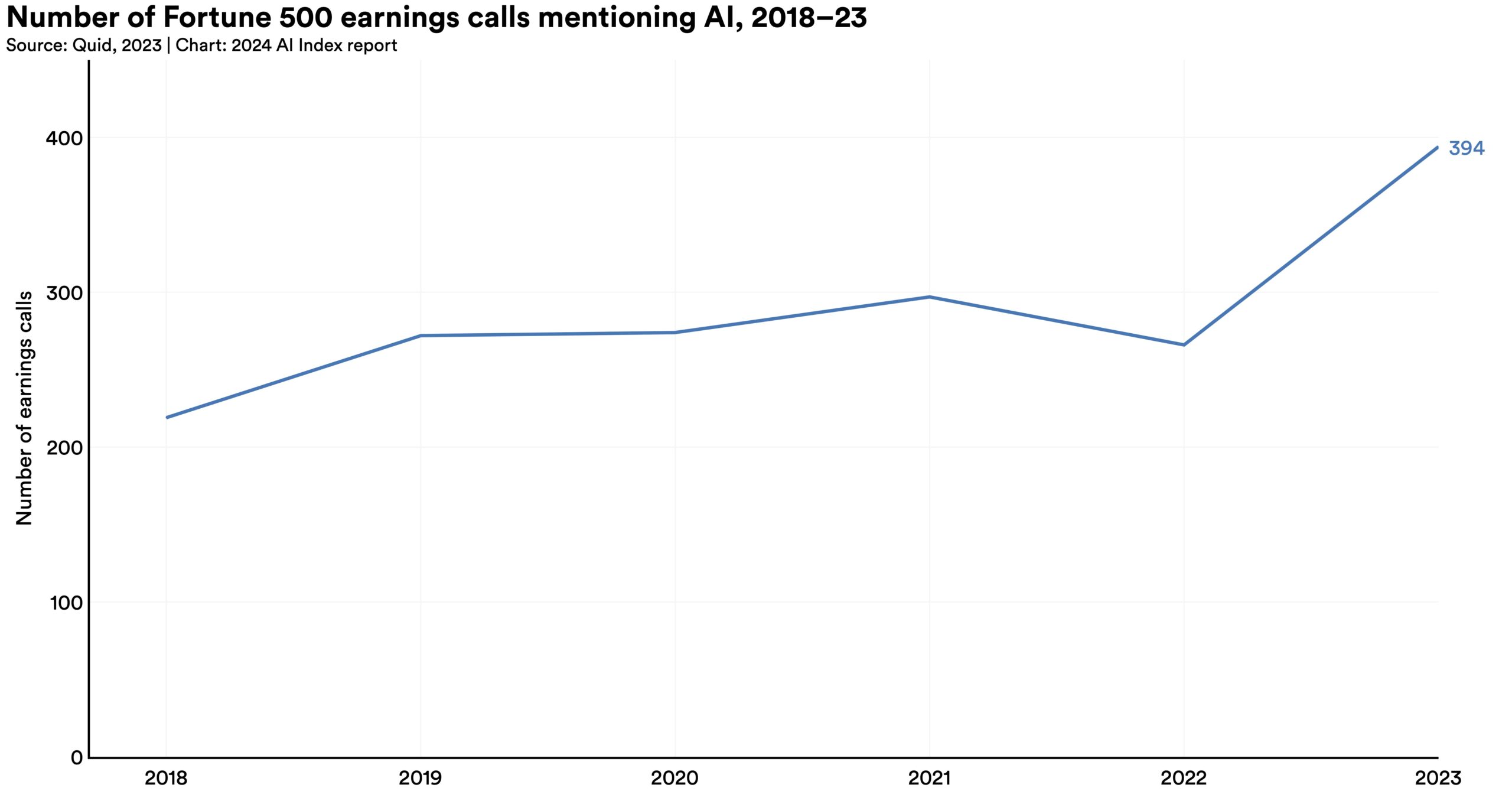

In 2023, AI was mentioned in 394 earnings calls (nearly 80% of all Fortune 500 companies), a notable increase from 266 mentions in 2022. Since 2018, mentions of AI in Fortune 500 earnings calls have nearly doubled. The most frequently cited theme, appearing in 19.7% of all earnings calls, was generative AI.

This year’s AI Index introduces a new chapter on AI in science and medicine in recognition of AI’s growing role in scientific and medical discovery. It explores 2023’s standout AI-facilitated scientific achievements, including advanced weather forecasting systems like GraphCast and improved material discovery algorithms like GNoME. The chapter also examines medical AI system performance, important 2023 AI-driven medical innovations like SynthSR and ImmunoSEIRA, and trends in FDA approval of AI-related medical devices.

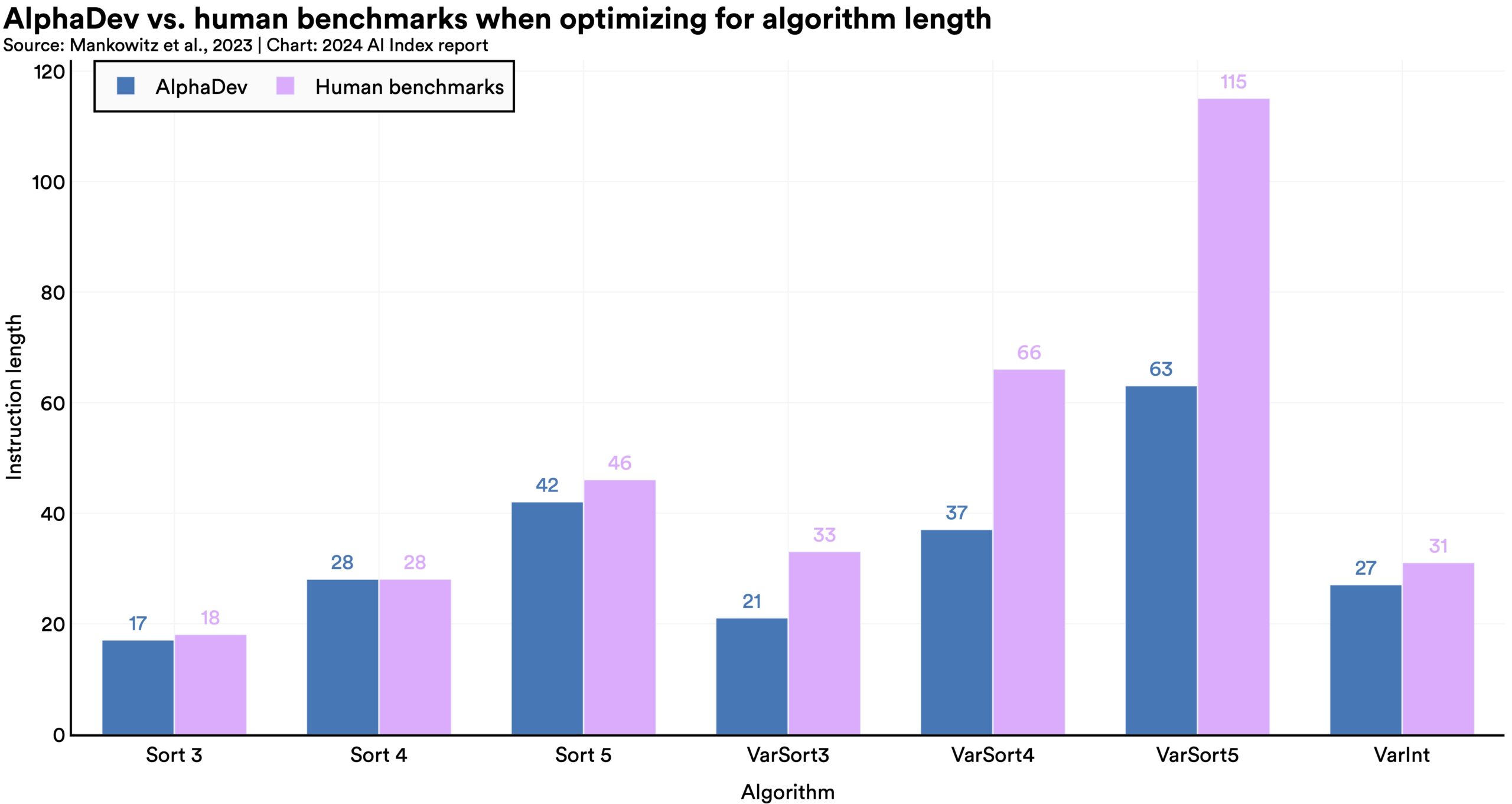

In 2022, AI began to advance scientific discovery. 2023, however, saw the launch of even more significant science-related AI applications—from AlphaDev, which makes algorithmic sorting more efficient, to GNoME, which facilitates the process of materials discovery.

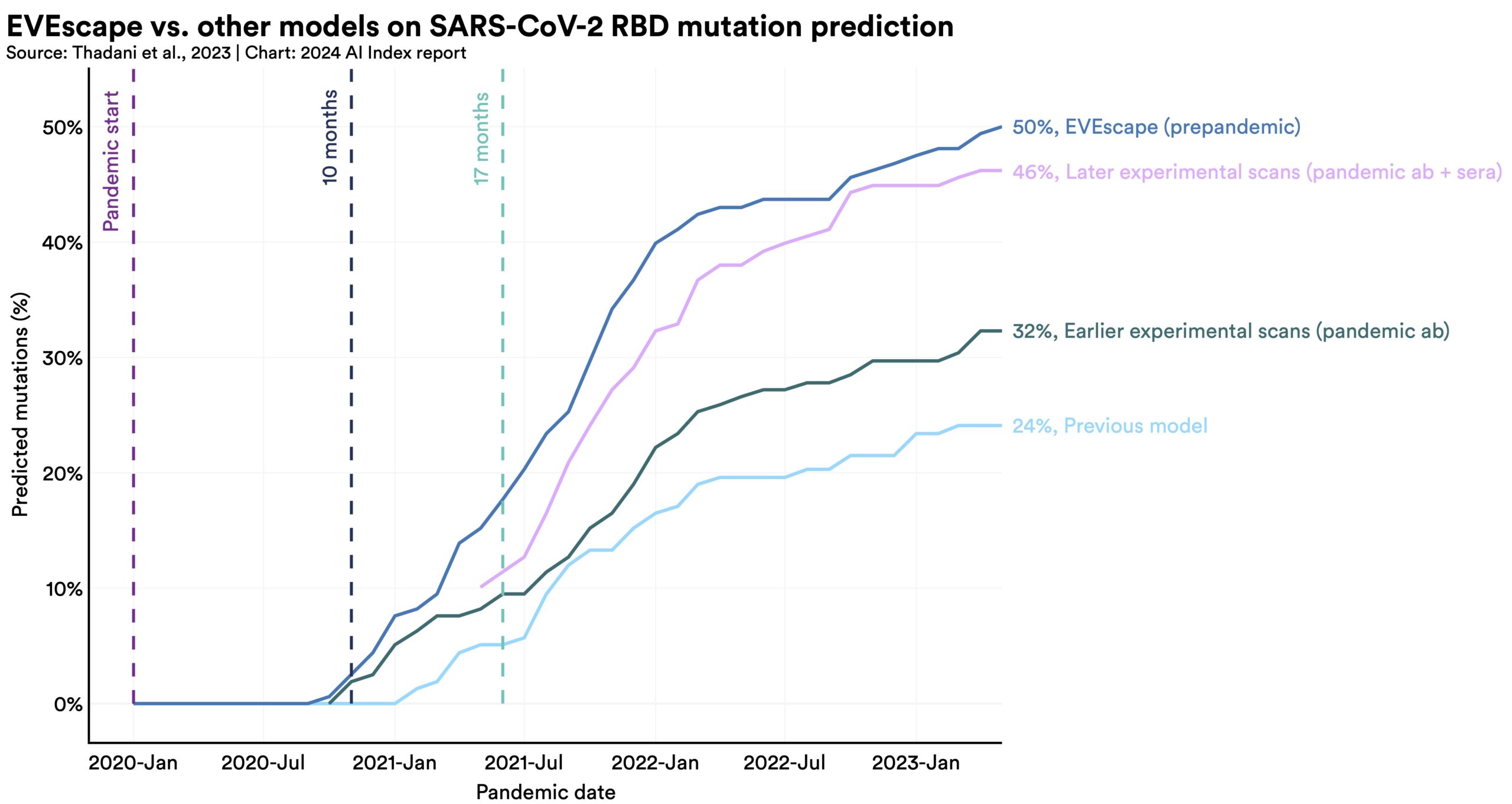

In 2023, several significant medical systems were launched, including EVEscape, which enhances pandemic prediction, and AlphaMissense, which assists in AI-driven mutation classification. AI is increasingly being utilized to propel medical advancements.

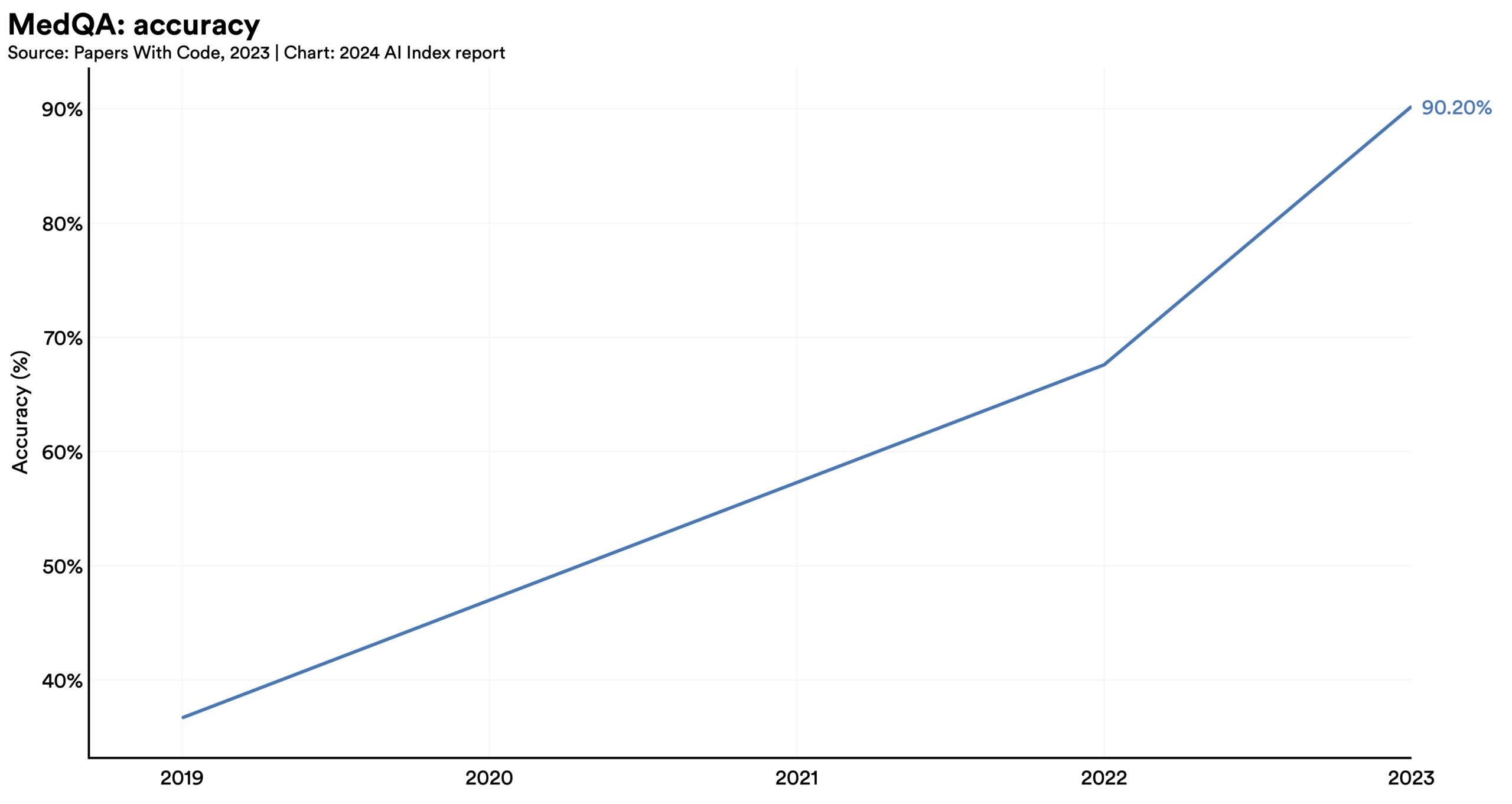

Over the past few years, AI systems have shown remarkable improvement on the MedQA benchmark, a key test for assessing AI’s clinical knowledge. The standout model of 2023, GPT-4 Medprompt, reached an accuracy rate of 90.2%, marking a 22.6 percentage point increase from the highest score in 2022. Since the benchmark’s introduction in 2019, AI performance on MedQA has nearly tripled.

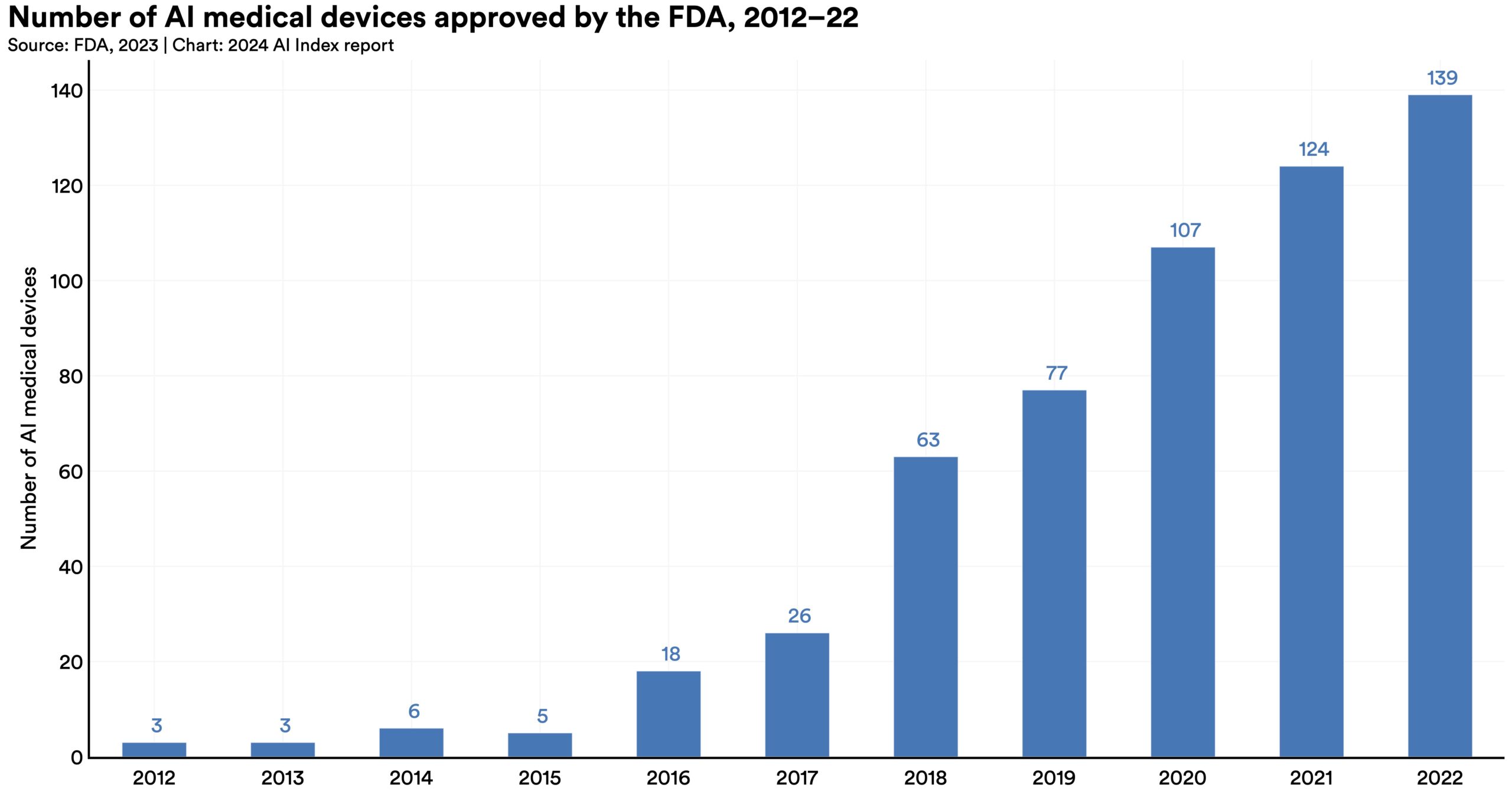

In 2022, the FDA approved 139 AI-related medical devices, a 12.1% increase from 2021. Since 2012, the number of FDA-approved AI-related medical devices has increased more than 45-fold. AI is increasingly being used for real-world medical purposes.

This chapter examines trends in AI and computer science (CS) education, focusing on who is learning, where they are learning, and how these trends have evolved over time. Amid growing concerns about AI’s impact on education, it also investigates the use of new AI tools like ChatGPT by teachers and students.

The analysis begins with an overview of the state of postsecondary CS and AI education in the United States and Canada, based on the Computing Research Association’s annual Taulbee Survey. It then reviews data from Informatics Europe regarding CS education in Europe. This year introduces a new section with data from Studyportals on the global count of AI-related English-language study programs.

The chapter wraps up with insights into K–12 CS education in the United States from Code.org and findings from the Walton Foundation survey on ChatGPT’s use in schools.

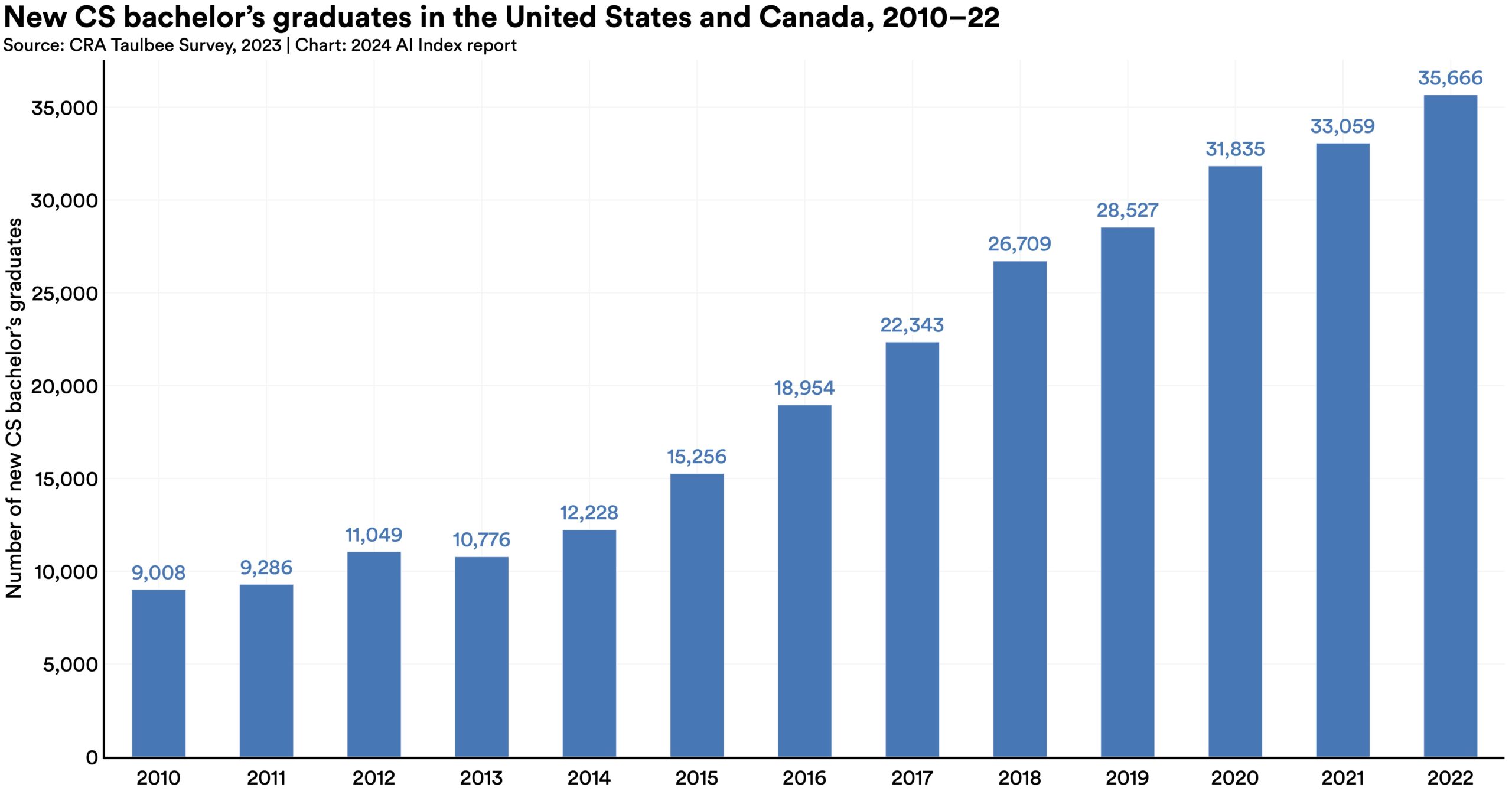

While the number of new American and Canadian bachelor’s graduates has consistently risen for more than a decade, the number of students opting for graduate education in CS has flattened. Since 2018, the number of CS master’s and PhD graduates has slightly declined.

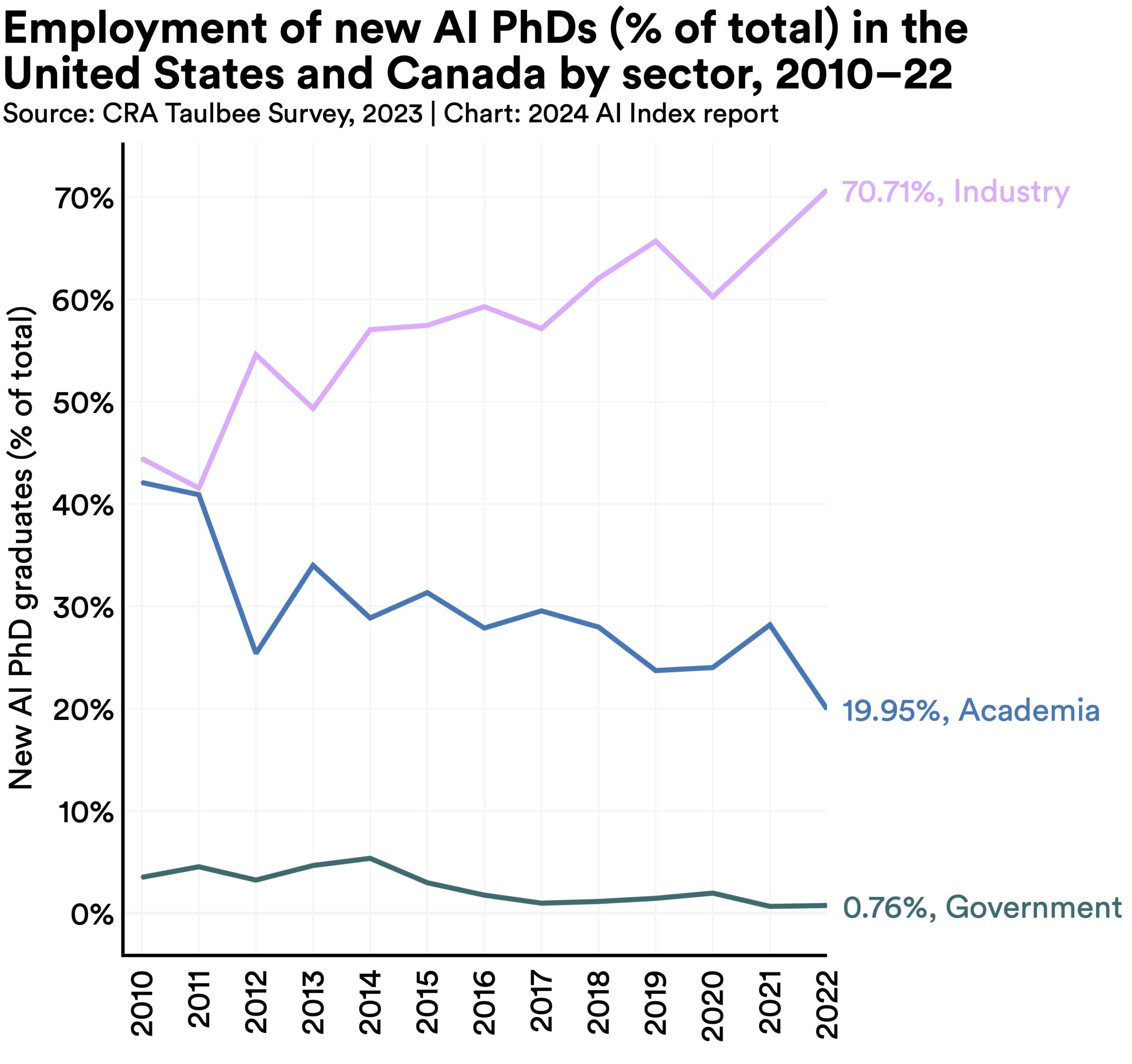

In 2011, roughly equal percentages of new AI PhDs took jobs in industry (40.9%) and academia (41.6%). However, by 2022, a significantly larger proportion (70.7%) joined industry after graduation compared to those entering academia (20.0%). Over the past year alone, the share of industry-bound AI PhDs has risen by 5.3 percentage points, indicating an intensifying brain drain from universities into industry.

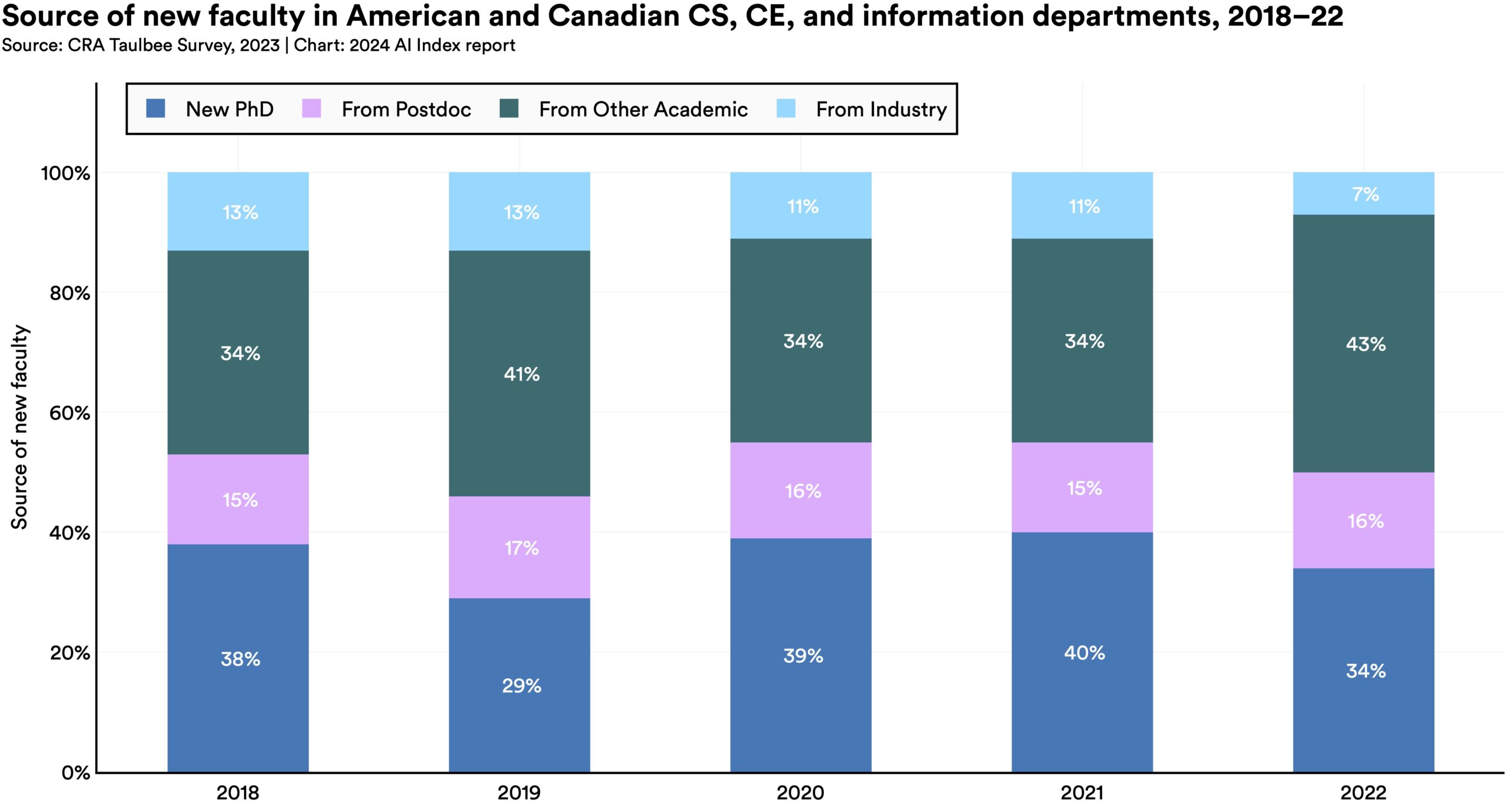

In 2019, 13% of new AI faculty in the United States and Canada were from industry. By 2021, this figure had declined to 11%, and in 2022, it further dropped to 7%. This trend indicates a progressively lower migration of high-level AI talent from industry into academia.

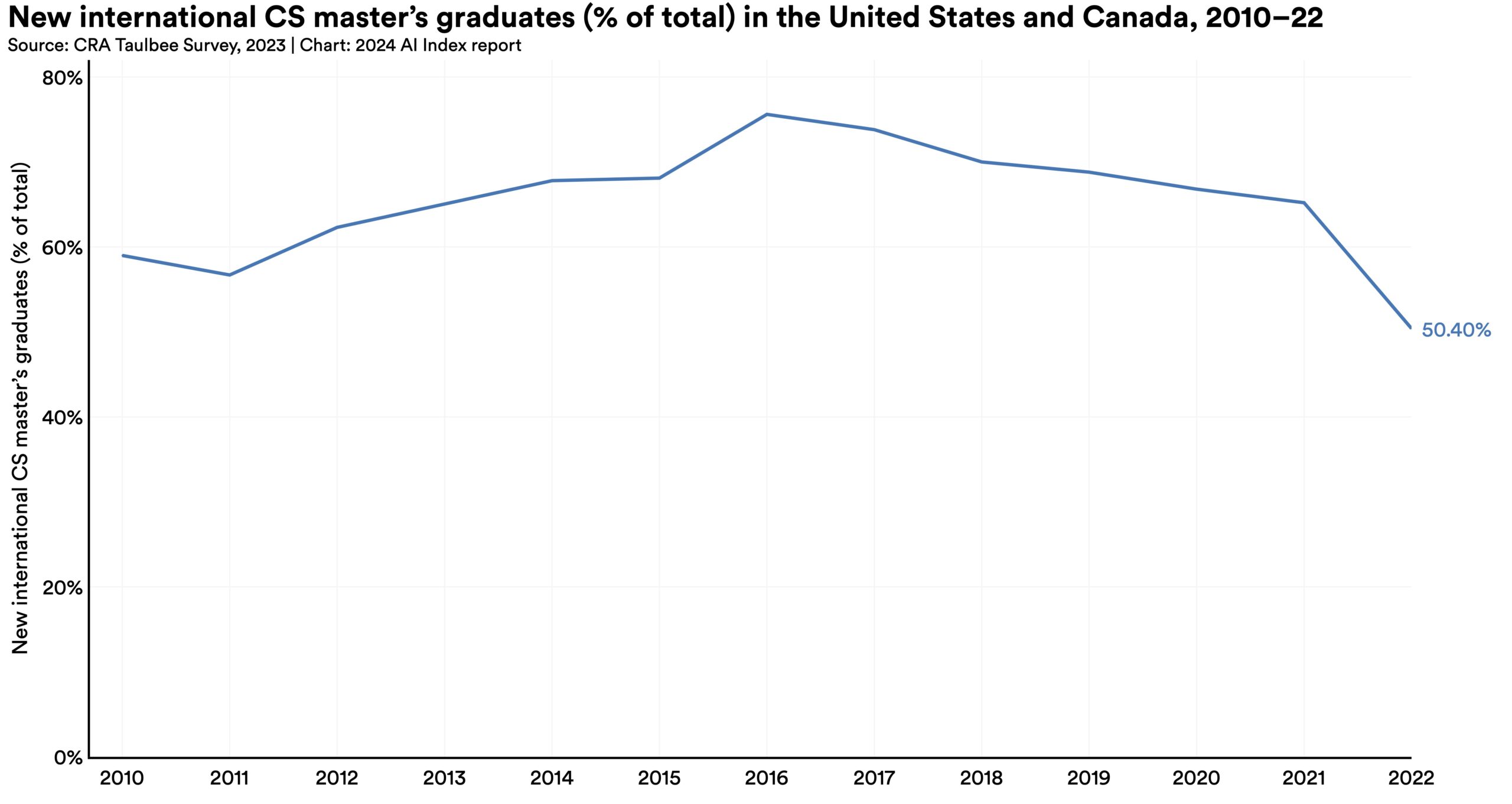

Proportionally fewer international CS bachelor’s, master’s, and PhDs graduated in 2022 than in 2021. The drop in international students in the master’s category was especially pronounced.

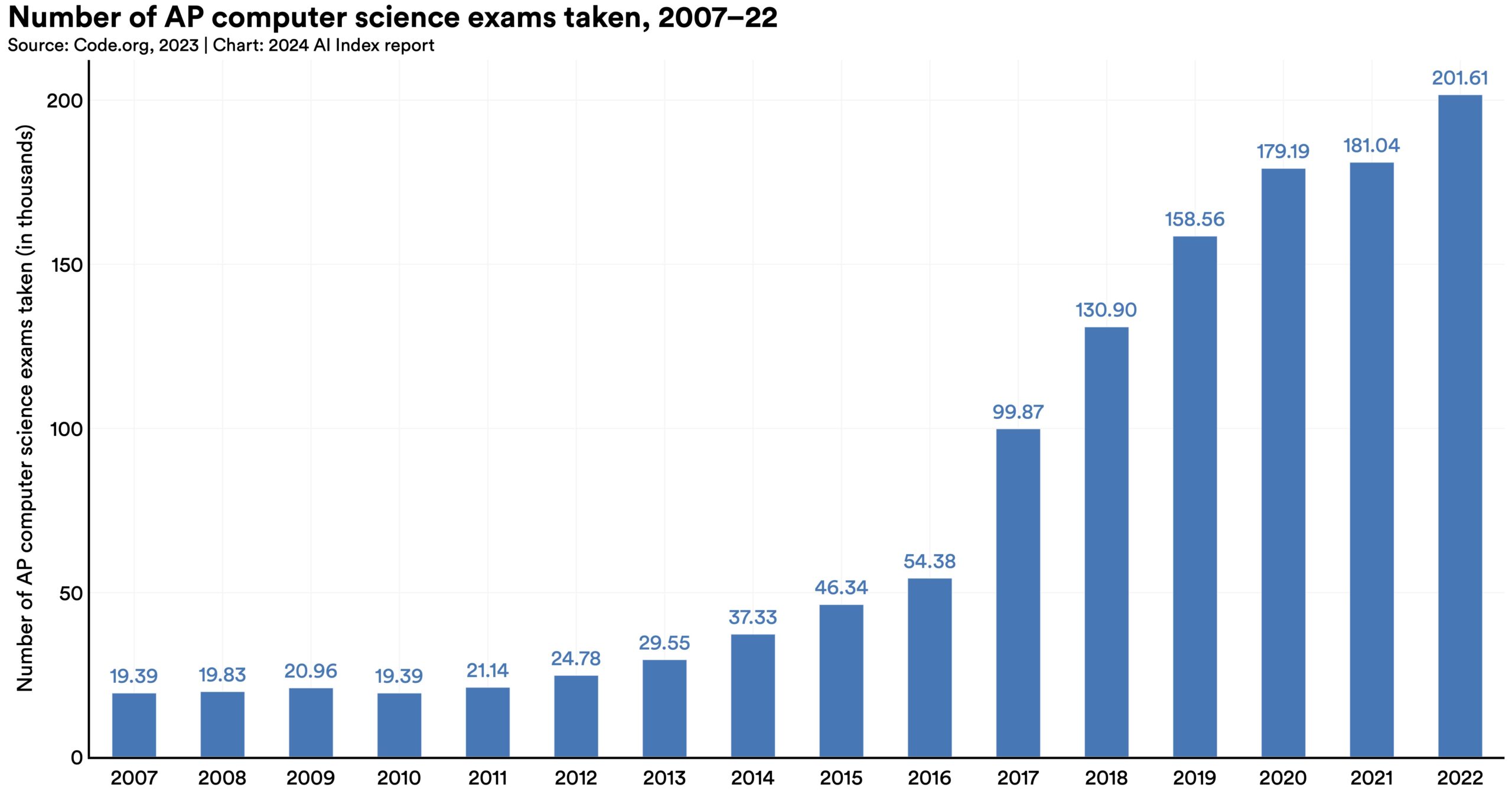

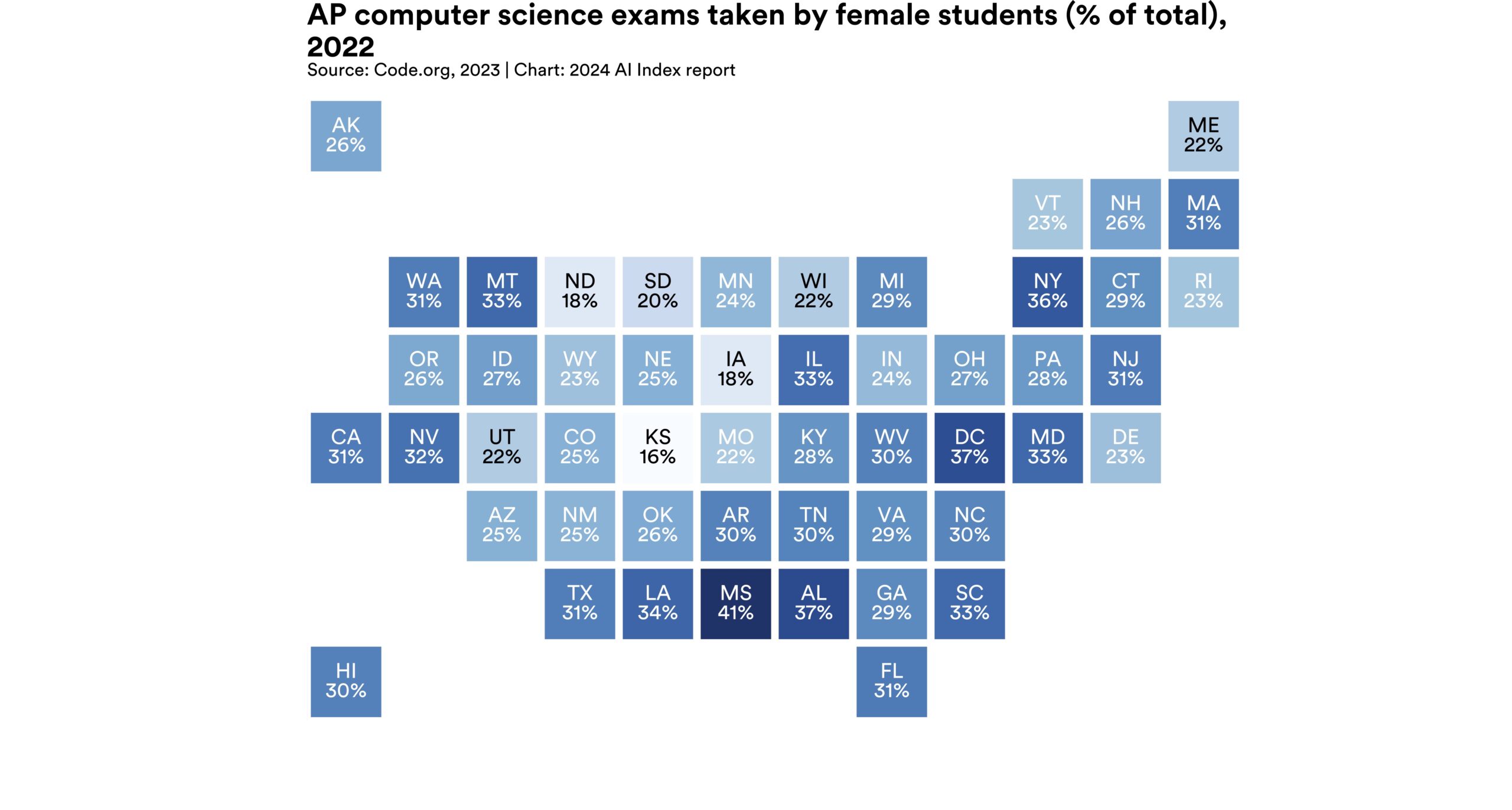

In 2022, 201,000 AP CS exams were administered. Since 2007, the number of students taking these exams has increased more than tenfold. However, recent evidence indicates that students in larger high schools and those in suburban areas are more likely to have access to CS courses.

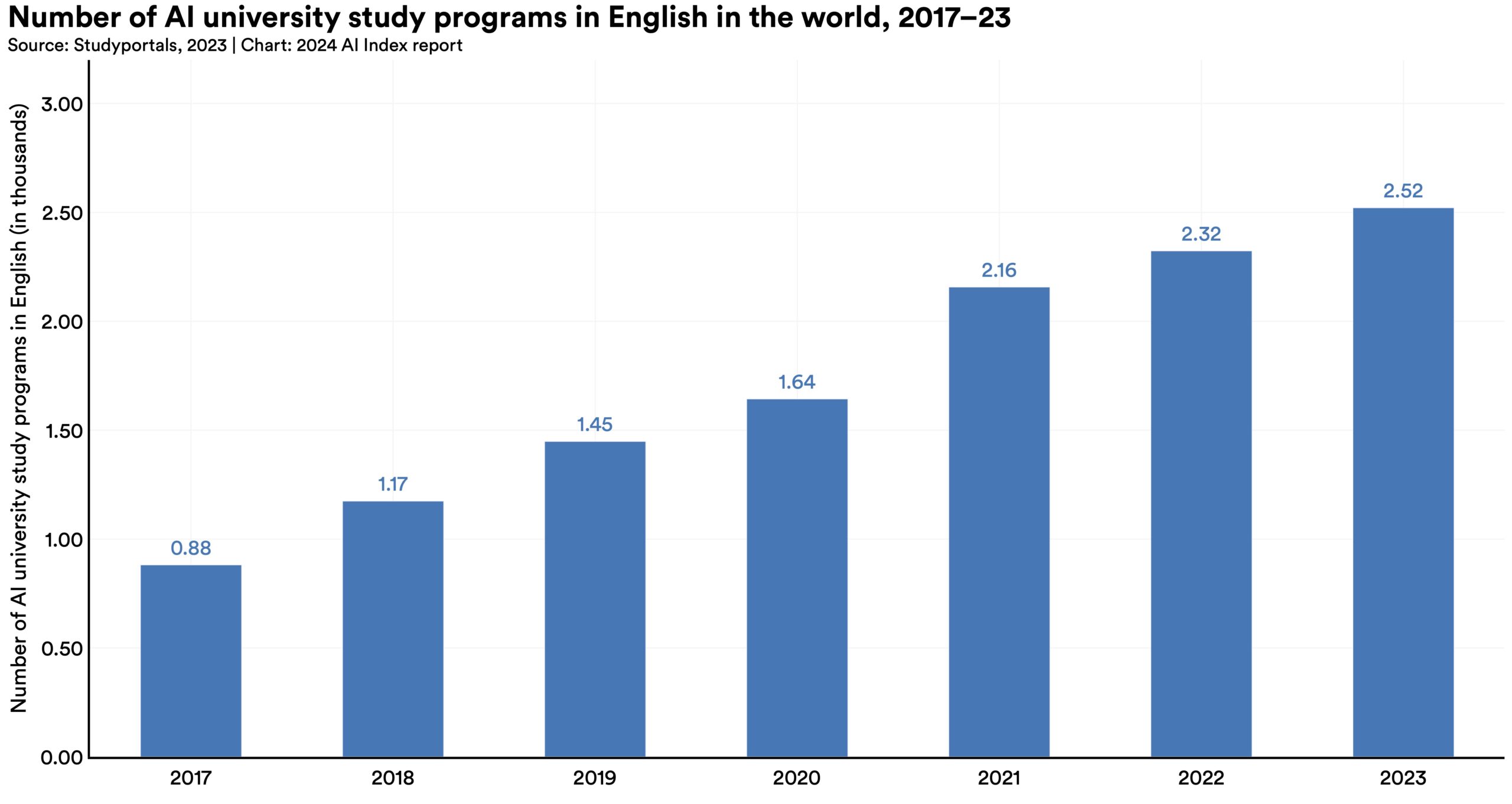

The number of English-language, AI-related postsecondary degree programs has tripled since 2017, showing a steady annual increase over the past five years. Universities worldwide are offering more AI-focused degree programs.

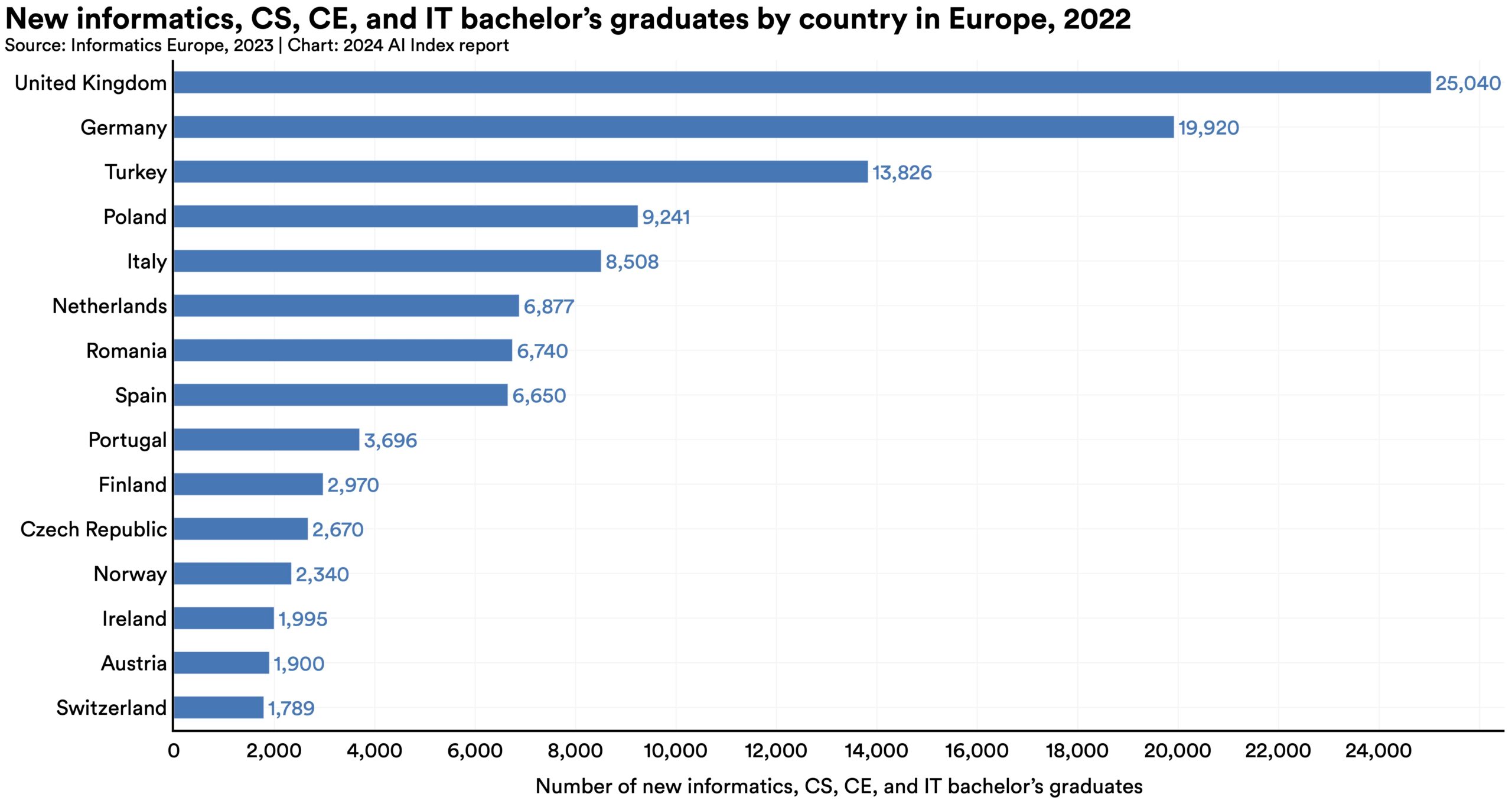

The United Kingdom and Germany lead Europe in producing the highest number of new informatics, CS, CE, and information bachelor’s, master’s, and PhD graduates. On a per capita basis, Finland leads in the production of both bachelor’s and PhD graduates, while Ireland leads in the production of master’s graduates.

AI’s increasing capabilities have captured policymakers’ attention. Over the past year, several nations and political bodies, such as the United States and the European Union, have enacted significant AI-related policies. The proliferation of these policies reflects policymakers’ growing awareness of the need to regulate AI and improve their respective countries’ ability to capitalize on its transformative potential.

This chapter begins its examination of global AI governance with a timeline of significant AI policymaking events in 2023. It then analyzes global and U.S. AI legislative efforts, studies AI legislative mentions, and explores how lawmakers across the globe perceive and discuss AI. Next, the chapter profiles national AI strategies and regulatory efforts in the United States and the European Union. Finally, it concludes with a study of public investment in AI within the United States.

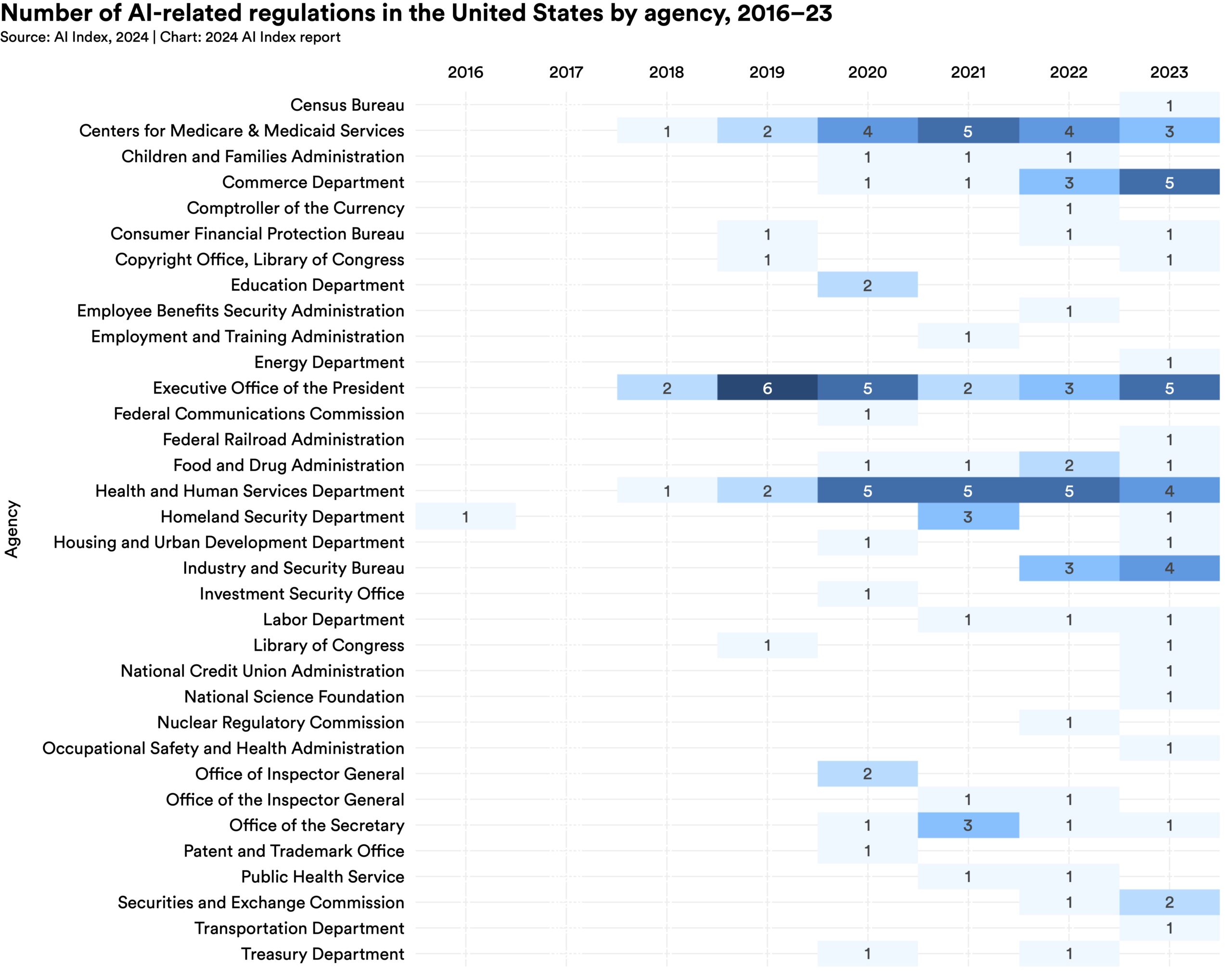

The number of AI-related regulations in the U.S. has risen significantly in the past year and over the last five years. In 2023, there were 25 AI-related regulations, up from just one in 2016. Last year alone, the total number of AI-related regulations grew by 56.3%.

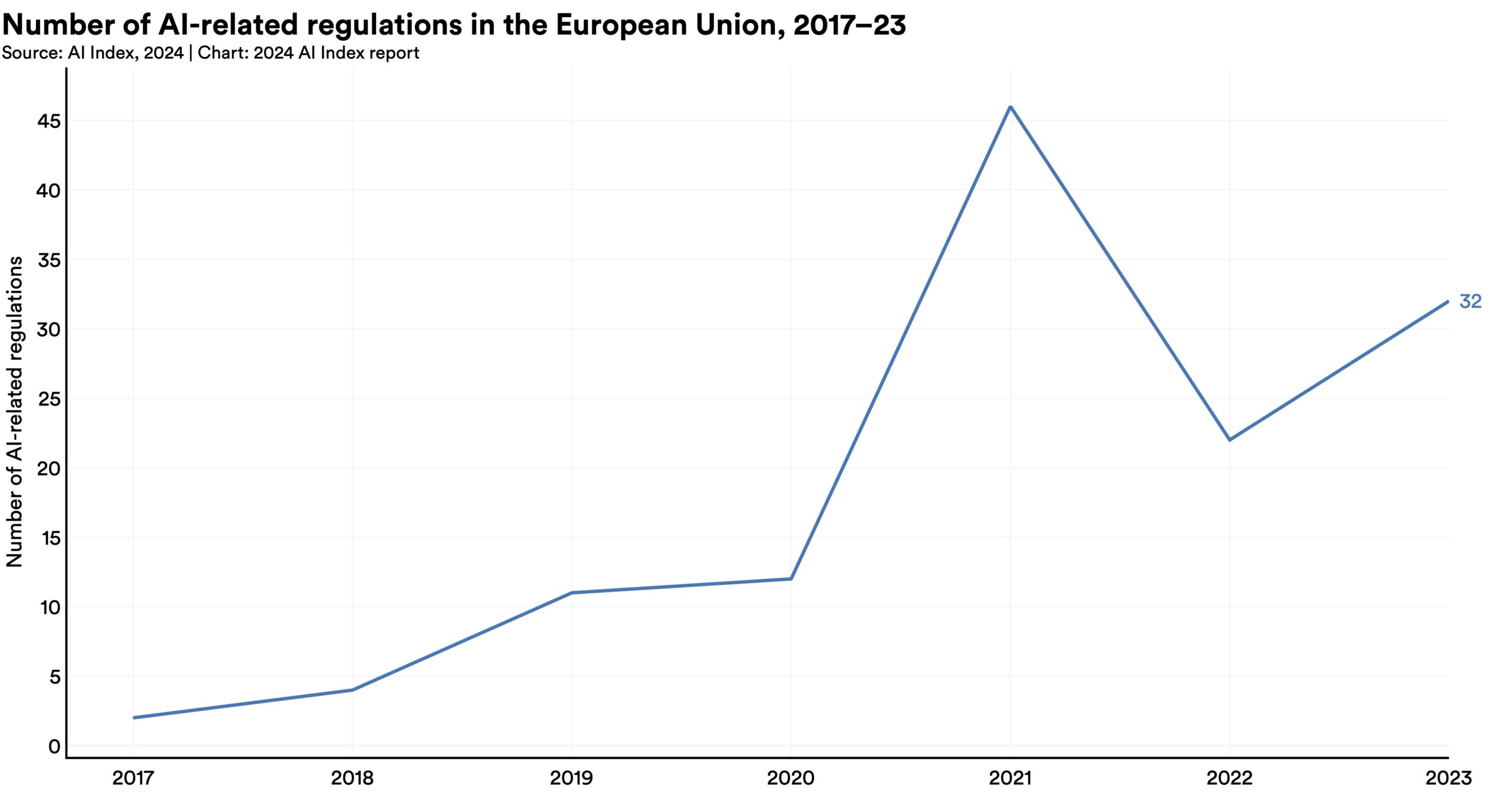

In 2023, policymakers on both sides of the Atlantic put forth substantial AI regulatory proposals. The European Union reached a deal on the terms of the AI Act, a landmark piece of legislation enacted in 2024. Meanwhile, President Biden signed an Executive Order on AI, the most notable AI policy initiative in the United States that year.

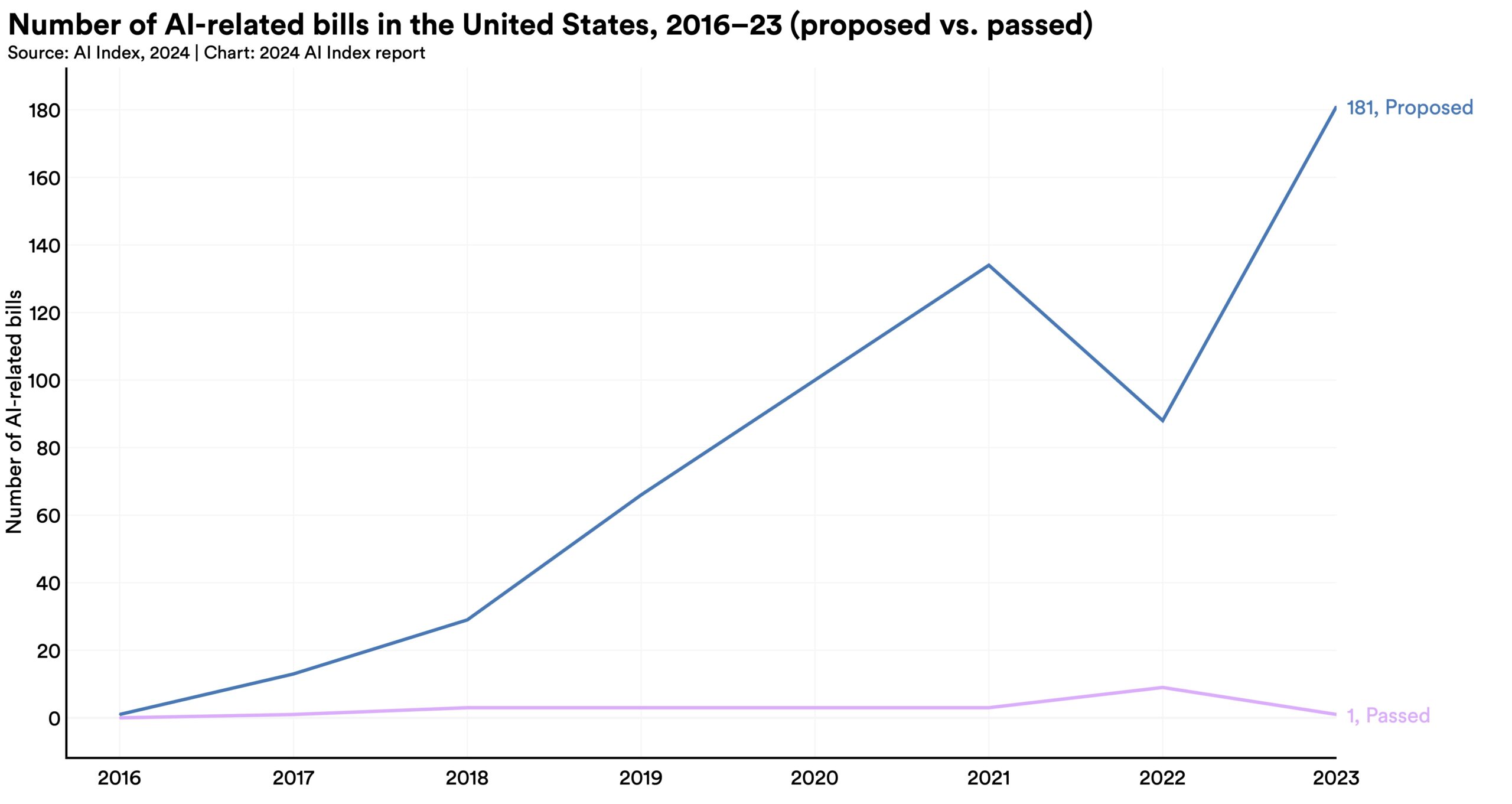

The year 2023 witnessed a remarkable increase in AI-related legislation at the federal level, with 181 bills proposed, more than double the 88 proposed in 2022.

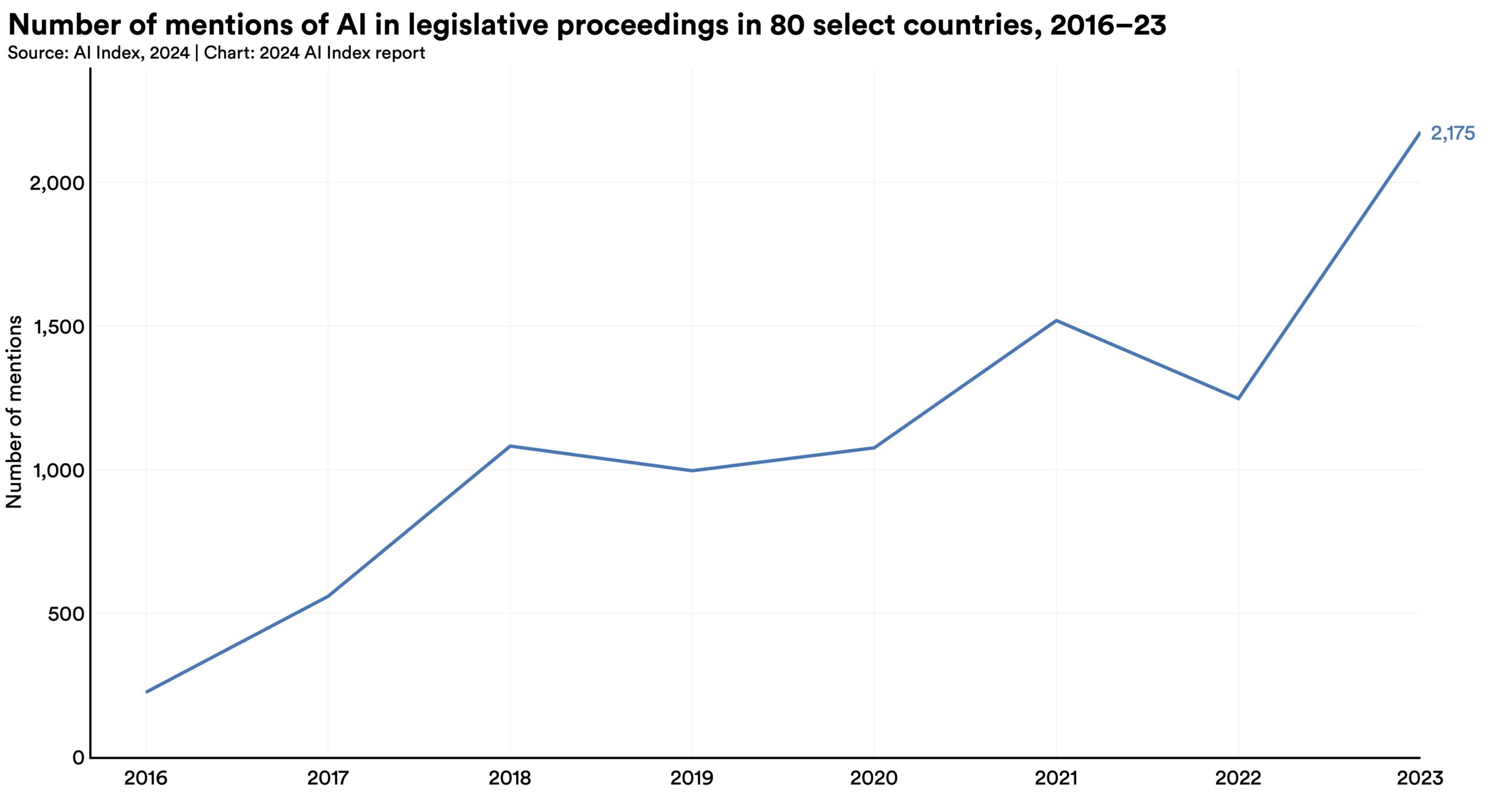

Mentions of AI in legislative proceedings across the globe have nearly doubled, rising from 1,247 in 2022 to 2,175 in 2023. AI was mentioned in the legislative proceedings of 49 countries in 2023. Moreover, at least one country from every continent discussed AI in 2023, underscoring the truly global reach of AI policy discourse.
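To make "more than double" and "nearly doubled" concrete, the sketch below computes the year-over-year growth multiple and percent change for the two legislative counts quoted above (88 to 181 federal bills, 1,247 to 2,175 global mentions). The helper names are illustrative; only the input counts come from the report.

```python
def growth_multiple(old, new):
    """Ratio of the new count to the old count (2.0 means exactly doubled)."""
    return new / old


def percent_change(old, new):
    """Relative change from old to new, expressed in percent."""
    return (new - old) / old * 100


# Counts as stated in the text for 2022 and 2023.
bills_multiple = growth_multiple(88, 181)
mentions_multiple = growth_multiple(1247, 2175)

print(f"Federal AI bills: {bills_multiple:.2f}x ({percent_change(88, 181):.1f}% increase)")
print(f"Legislative mentions: {mentions_multiple:.2f}x ({percent_change(1247, 2175):.1f}% increase)")
```

On these inputs, bills grew by slightly more than a factor of two, while mentions grew by roughly three quarters, which is short of a full doubling.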

The number of U.S. regulatory agencies issuing AI regulations increased to 21 in 2023 from 17 in 2022, indicating a growing concern over AI regulation among a broader array of American regulatory bodies. Some of the new regulatory agencies that enacted AI-related regulations for the first time in 2023 include the Department of Transportation, the Department of Energy, and the Occupational Safety and Health Administration.

The demographics of AI developers often differ from those of users. For instance, a considerable number of prominent AI companies and the datasets utilized for model training originate from Western nations, thereby reflecting Western perspectives. The lack of diversity can perpetuate or even exacerbate societal inequalities and biases.

This chapter delves into diversity trends in AI. The chapter begins by drawing on data from the Computing Research Association (CRA) to provide insights into the state of diversity in American and Canadian computer science (CS) departments. A notable addition to this year’s analysis is data sourced from Informatics Europe, which sheds light on diversity trends within European CS education. Next, the chapter examines participation rates at the Women in Machine Learning (WiML) workshop held annually at NeurIPS. Finally, the chapter analyzes data from Code.org, offering insights into the current state of diversity in secondary CS education across the United States.

The AI Index is dedicated to enhancing the coverage of data shared in this chapter. Demographic data regarding AI trends, particularly in areas such as sexual orientation, remains scarce. The AI Index urges other stakeholders in the AI domain to intensify their endeavors to track diversity trends associated with AI and hopes to comprehensively cover such trends in future reports.

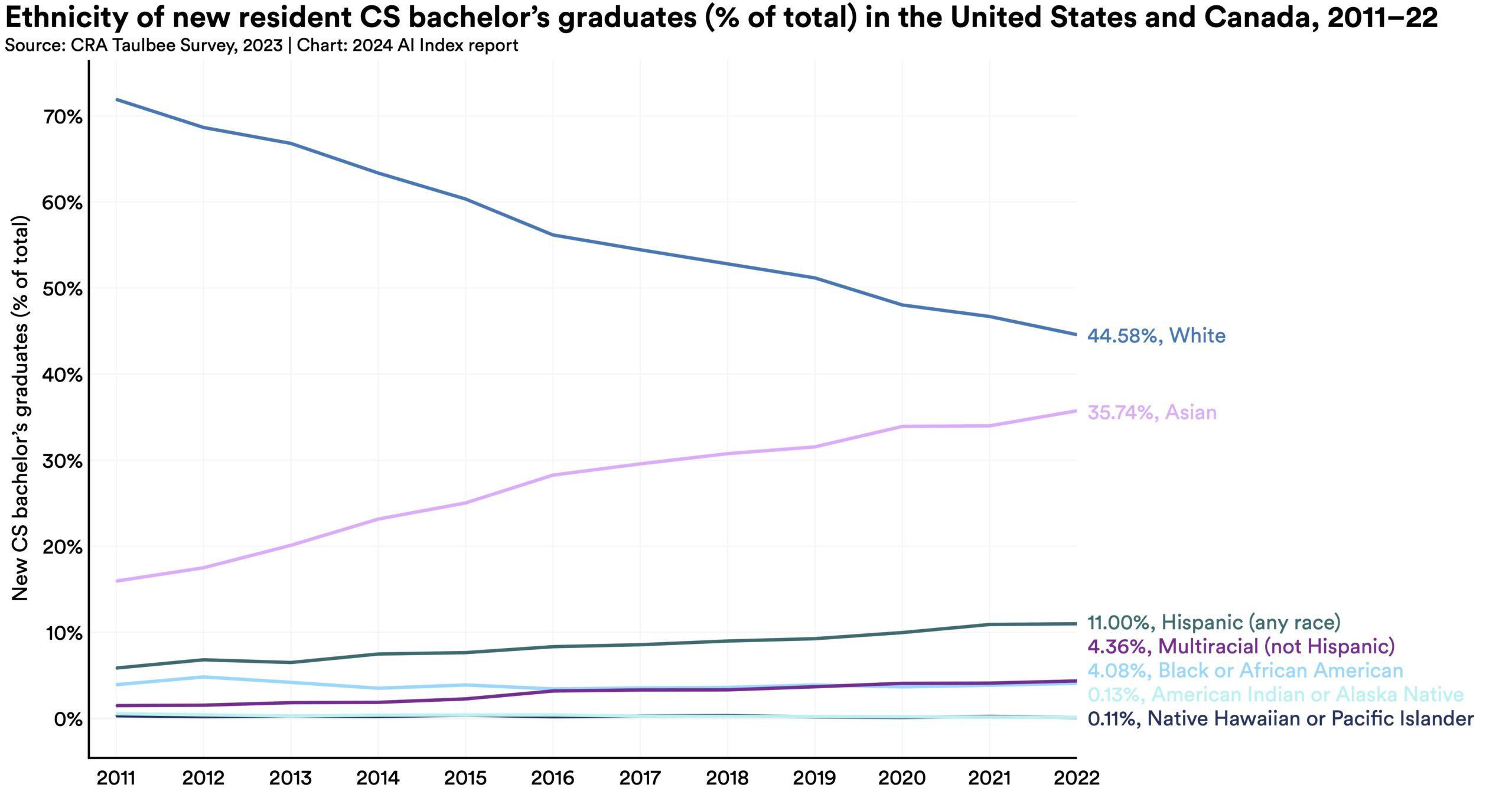

While white students continue to be the most represented ethnicity among new resident graduates at all three levels, the representation from other ethnic groups, such as Asian, Hispanic, and Black or African American students, continues to grow. For instance, since 2011, the proportion of Asian CS bachelor’s degree graduates has increased by 19.8 percentage points, and the proportion of Hispanic CS bachelor’s degree graduates has grown by 5.2 percentage points.

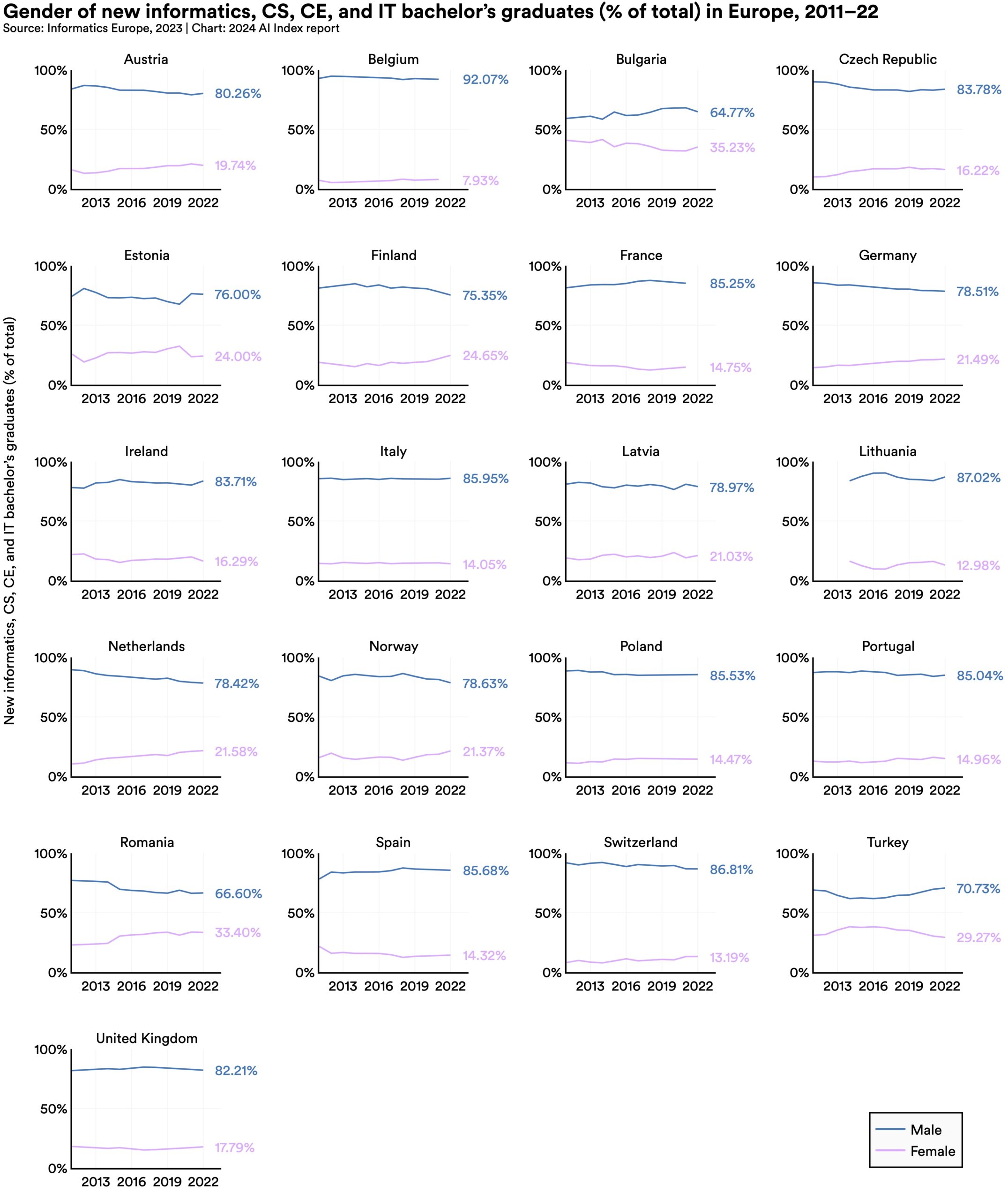

Every surveyed European country reported more male than female graduates in bachelor’s, master’s, and PhD programs for informatics, CS, CE, and IT. While the gender gaps have narrowed in most countries over the last decade, the rate of this narrowing has been slow.

The proportion of AP CS exams taken by female students rose from 16.8% in 2007 to 30.5% in 2022. Similarly, the participation of Asian, Hispanic/Latino/Latina, and Black/African American students in AP CS has consistently increased year over year.

As AI becomes increasingly ubiquitous, it is important to understand how public perceptions regarding the technology evolve. Understanding this public opinion is vital in better anticipating AI’s societal impacts and how the integration of the technology may differ across countries and demographic groups.

This chapter examines public opinion on AI through global, national, demographic, and ethnic perspectives. It draws upon several data sources: longitudinal survey data from Ipsos profiling global AI attitudes over time, survey data from the University of Toronto exploring public perception of ChatGPT, and data from Pew examining American attitudes regarding AI. The chapter concludes by analyzing mentions of significant AI models on Twitter, using data from Quid.

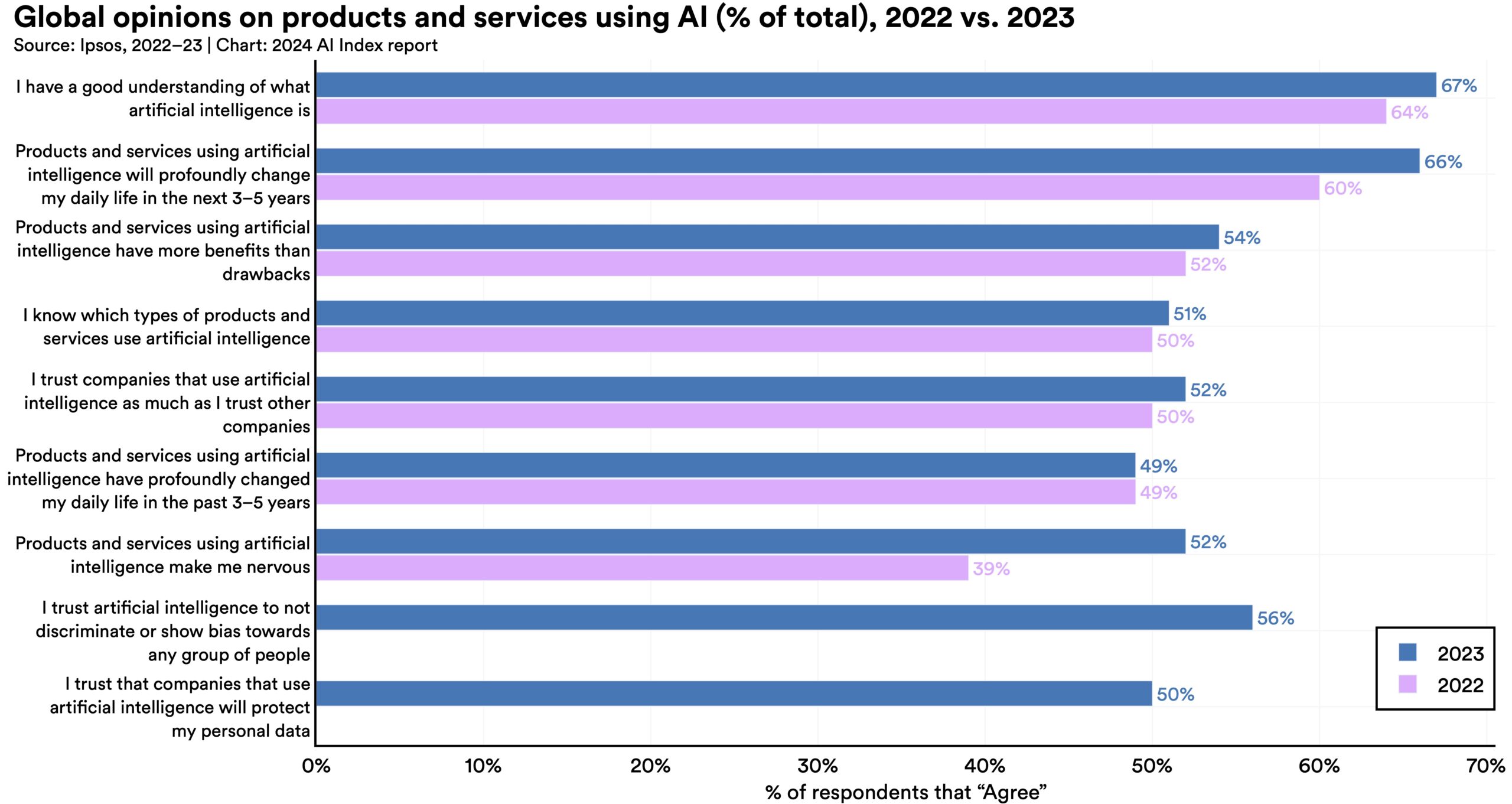

A survey from Ipsos shows that, over the last year, the proportion of those who think AI will dramatically affect their lives in the next three to five years has increased from 60% to 66%. Moreover, 52% express nervousness toward AI products and services, marking a 13 percentage point rise from 2022. In America, Pew data suggests that 52% of Americans report feeling more concerned than excited about AI, rising from 38% in 2022.

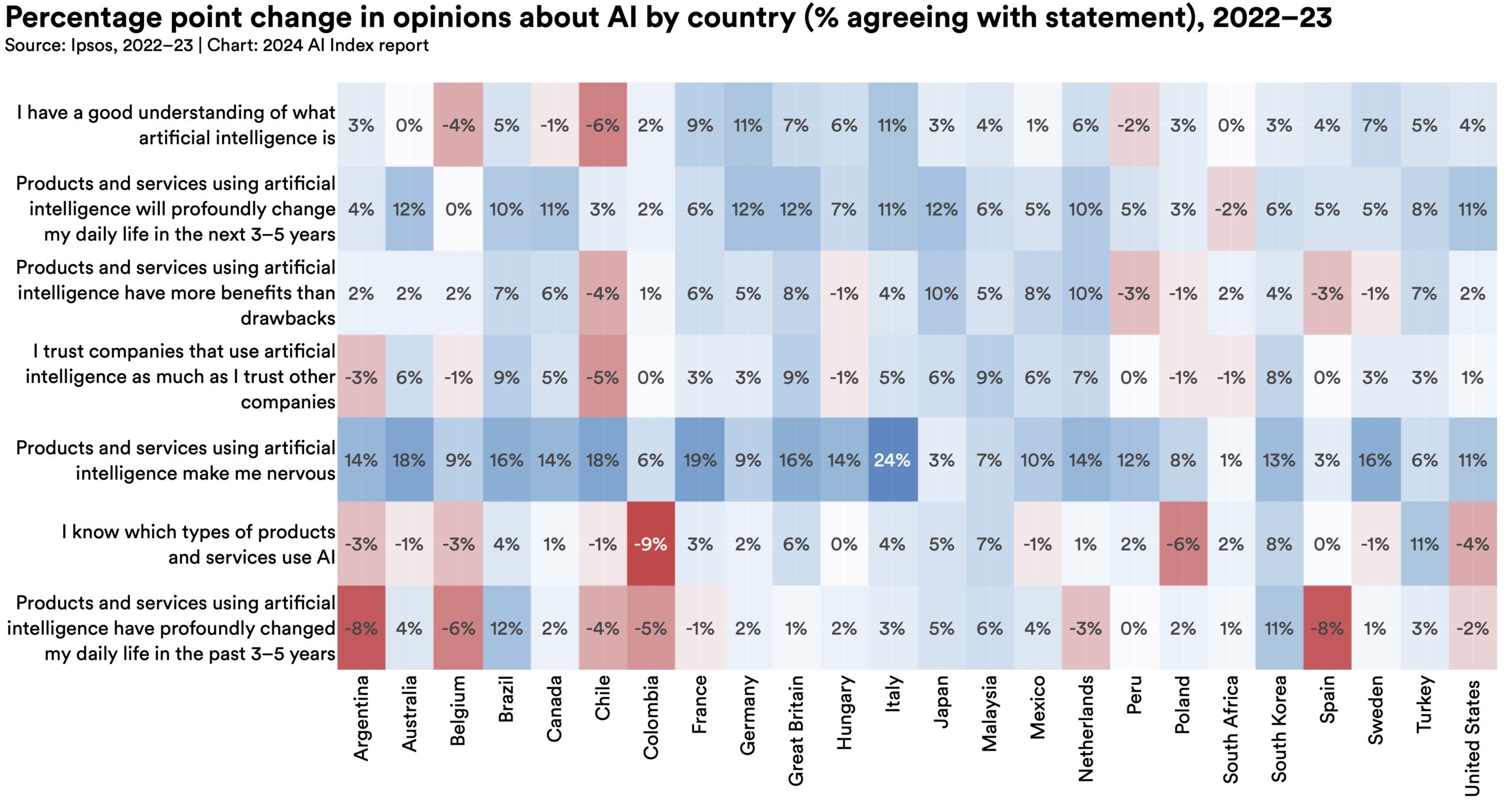

In 2022, several developed Western nations, including Germany, the Netherlands, Australia, Belgium, Canada, and the United States, were among the least positive about AI products and services. Since then, each of these countries has seen a rise in the proportion of respondents acknowledging the benefits of AI, with the Netherlands experiencing the most significant shift.

In an Ipsos survey, only 37% of respondents feel AI will improve their job. Only 34% anticipate AI will boost the economy, and 32% believe it will enhance the job market.

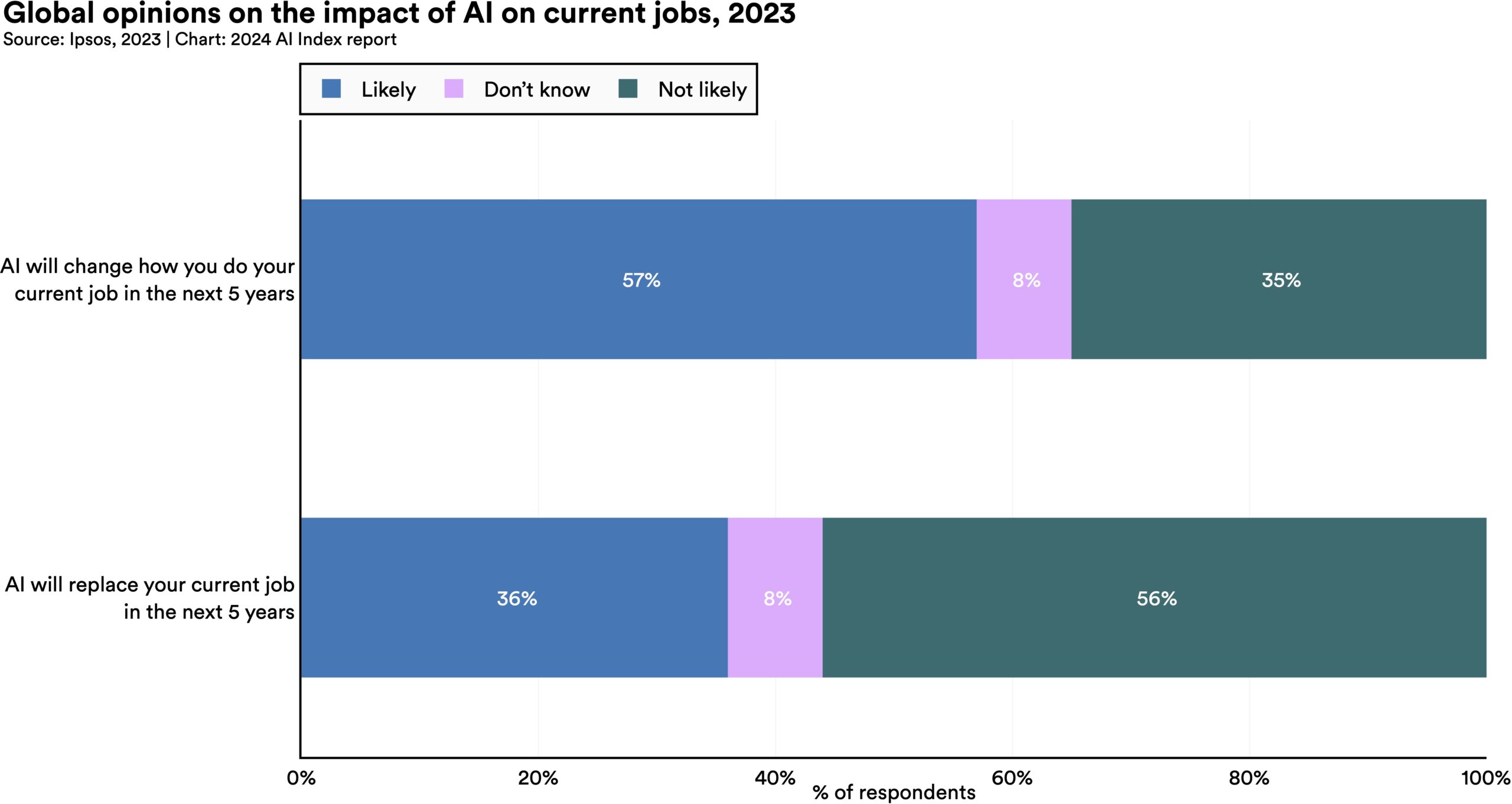

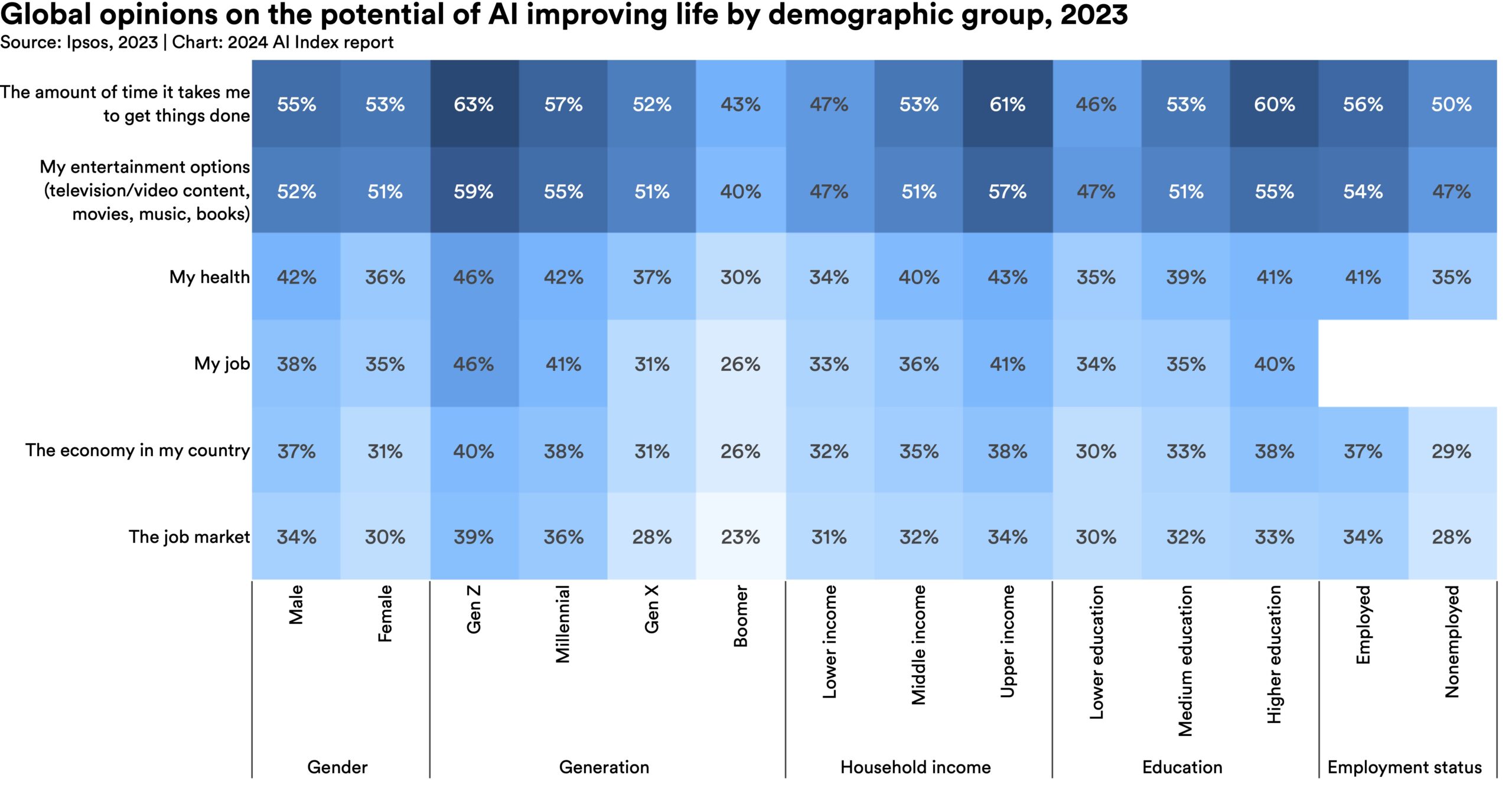

Significant demographic differences exist in perceptions of AI’s potential to enhance livelihoods, with younger generations generally more optimistic. For instance, 59% of Gen Z respondents believe AI will improve entertainment options, versus only 40% of baby boomers. Additionally, individuals with higher incomes and education levels are more optimistic about AI’s positive impacts on entertainment, health, and the economy than their lower-income and less-educated counterparts.

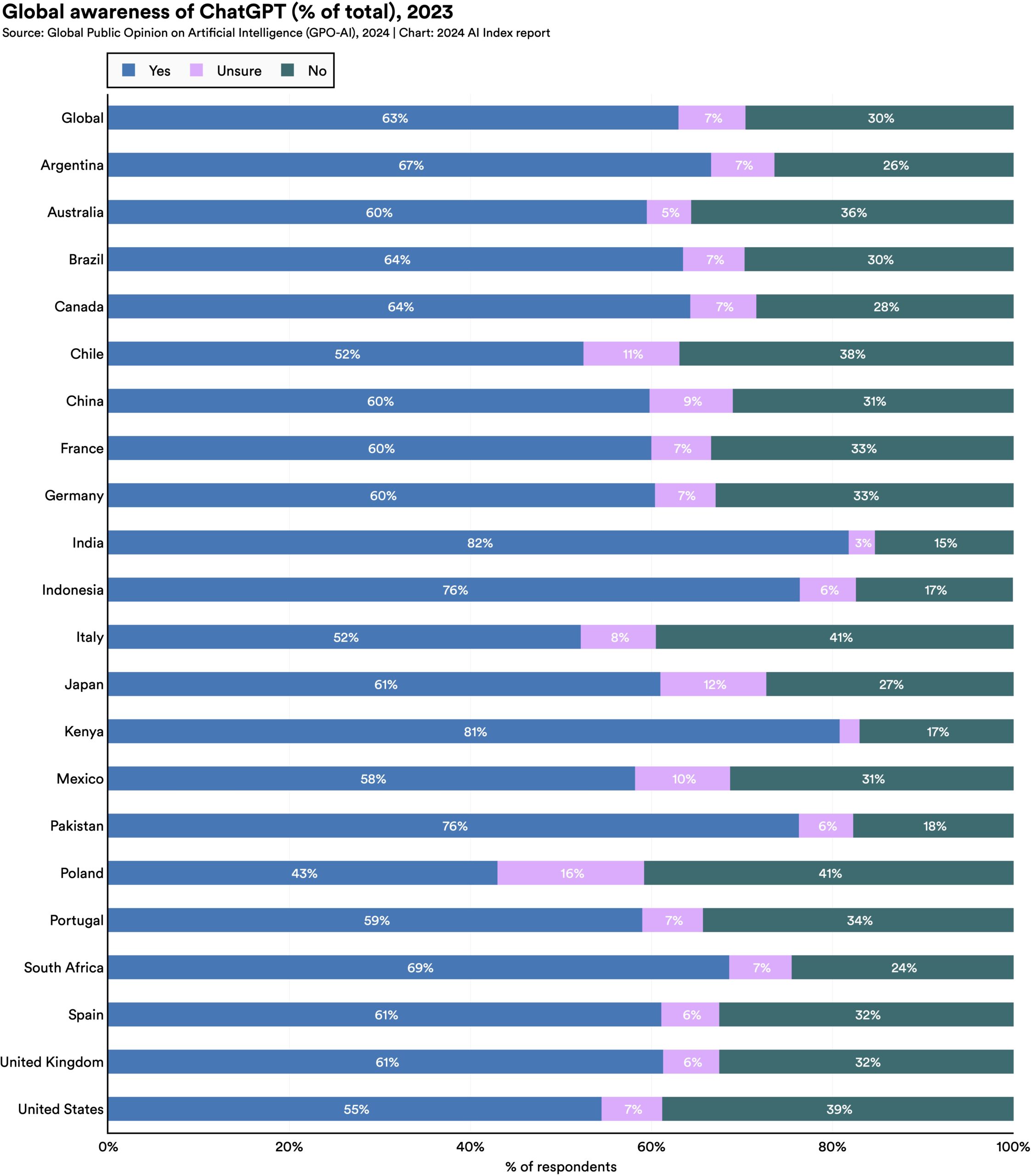

An international survey from the University of Toronto suggests that 63% of respondents are aware of ChatGPT. Of those aware, around half report using ChatGPT at least once weekly.